In an age driven by data, where information is abundant and valuable insights lie within the vast sea of numbers and statistics, the role of Data Science has become increasingly significant. It's the science that extracts meaning from this data, helping organisations make informed decisions, predict future trends, and optimise their operations. However, taking the journey from raw data to valuable insights is a challenging endeavour. It involves a complex and structured process known as the Data Science Pipeline.

A Data Science Pipeline is a systematic process used to collect, clean, transform, analyse, and visualise data for various purposes. This blog will delve into the Data Science Pipeline, exploring its various stages and the best practices that make it a powerful tool for Data Scientists and organisations.

Table of contents

1) What is Data Science Pipeline?

2) Importance of Data Science Pipeline

3) Stages in Data Science Pipeline

4) Characteristics of Data Science Pipeline

5) Best practices of Data Science Pipeline

6) Conclusion

What is Data Science Pipeline?

A Data Science Pipeline is a structured and automated workflow that enables the collection, processing, analysis, and deployment of data-driven models in a systematic and efficient manner. It involves a series of interconnected processes designed to turn raw data into valuable insights and predictions, making it a fundamental component of Data Science and Machine Learning projects. Data Science Pipelines ensure data quality, reproducibility, and scalability, thereby facilitating the entire Data Analysis and model development process.

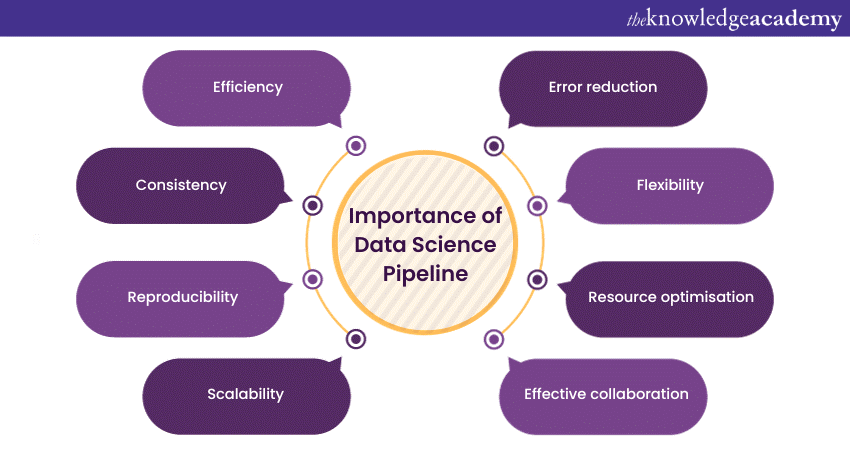

Importance of Data Science Pipeline

The importance of a Data Science Pipeline can be summarised in several key points:

a) Efficiency: Data Science Pipelines automate and streamline the Data Analysis process. This significantly reduces the time and effort required to move from data collection to model deployment. Without a pipeline, these tasks are often performed manually, leading to inefficiency and errors.

b) Consistency: Data Science Pipelines enforce a consistent methodology for data processing and model development. This consistency is crucial for obtaining reliable results and for reproducing experiments, which is essential in the scientific method.

c) Reproducibility: Data Science Pipelines enable reproducibility. This means that other Data Scientists or team members can replicate your work precisely by running the same pipeline with the same data. It's a fundamental principle in scientific research.

d) Scalability: As data volumes increase, manual data processing and analysis become impractical. Pipelines are designed to handle vast datasets and can be scaled easily to accommodate increased data complexity.

e) Error Reduction: Automation reduces the chances of human error. This is critical in data science, where even minor errors in data processing or model implementation can lead to significant inaccuracies.

f) Flexibility: Pipelines can be adapted to changing requirements or data sources. This means that as new data becomes available or as business needs evolve, the pipeline can be updated without completely overhauling the Data Analysis process.

g) Resource Optimisation: Data Science Pipelines can be optimised to make efficient use of computational resources, which is particularly important for tasks such as:

a) Model training.

b) Hyperparameter tuning.

c) Data transformation.

h) Effective Collaboration: When multiple Data Scientists or team members are working on a project, a well-defined pipeline allows for effective collaboration. Team members can understand and work with each other's code, making the development process smoother.
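The reproducibility point above can be illustrated with a small sketch. It uses only Python's standard library and assumes a hypothetical `split_dataset` helper (not taken from any library); the seed value is arbitrary. Fixing the seed is what makes two runs over the same data produce the same train/test split.

```python
import random

def split_dataset(rows, test_fraction=0.25, seed=42):
    """Shuffle rows with a fixed seed and split them into train and test sets.

    Hypothetical helper for illustration; the default seed is arbitrary.
    """
    rng = random.Random(seed)   # local generator, so global random state is untouched
    shuffled = list(rows)       # copy, so the caller's data is not mutated
    rng.shuffle(shuffled)
    n_test = int(len(shuffled) * test_fraction)
    return shuffled[n_test:], shuffled[:n_test]

rows = list(range(20))
train_a, test_a = split_dataset(rows)
train_b, test_b = split_dataset(rows)

# Same data and same seed give an identical split on every run.
print(train_a == train_b and test_a == test_b)
```

Passing a different `seed` produces a different but equally repeatable split, which is one simple way for team members to replicate each other's experiments.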

Ready to unlock the potential of data? Join our Data Mining Training and dive into the world of insights and opportunities!

Stages in Data Science Pipeline

A Data Science Pipeline typically consists of several key stages, each of which plays a big role in the data analysis and model development process. Following a Data Science Process Guide ensures that these stages are carried out systematically and efficiently, helping to collect, process, analyse, and deploy data. The specific stages may vary based on the project and the Data Pipeline Architecture, but here are the most common stages in a typical Data Science Pipeline.

a) Data Collection: Data collection is the initial phase of the Data Science Pipeline. It involves gathering data from various sources, such as databases, APIs, or external datasets, including those available on Kaggle. The collected data is then cleaned, checked for quality, and stored in an appropriate format for further processing.

b) Data Preprocessing: In this stage, the focus is on preparing the data for analysis. This includes:

a) Handling missing data.

b) Normalising and scaling data.

c) Performing transformations like one-hot encoding or feature engineering.

This makes the data suitable for Machine Learning models.

c) Data Exploration and Analysis: Exploratory Data Analysis (EDA) focuses on understanding the characteristics of the dataset. Understanding evolves during the process itself, as analysts iteratively assess and refine their findings. Through statistical analysis and data visualisation, Data Scientists gain insights into data distributions, correlations, and patterns that can guide further analysis.

d) Feature Engineering: Feature engineering involves selecting the most relevant features for analysis and creating new ones to enhance model performance. This step aims to optimise the dataset for the specific Machine Learning algorithms to be used.

e) Model Building: This stage is where the chosen Machine Learning algorithms are applied to the prepared dataset. The data is typically divided into training and testing sets. The model is trained on the training data and evaluated on the testing data to assess its performance.

f) Model Optimisation: After building the initial model, optimisation efforts come into play. This can include tuning hyperparameters, applying cross-validation techniques, and exploring ensemble methods to improve the model's accuracy and robustness.

g) Model Deployment: Model deployment is the process of preparing the model for use in real-world applications or systems. This involves integrating the model into the target environment and setting up monitoring and maintenance procedures to ensure its ongoing performance.

h) Automation and Orchestration: Automation simplifies repetitive tasks within the pipeline, while orchestration ensures the smooth flow of data and processes. These elements help in streamlining the pipeline's execution.

i) Data Science Tools and Frameworks: Selecting the right tools and frameworks is crucial to execute the pipeline efficiently. Data Scientists choose the tools and libraries that best suit their project's requirements at each stage of the pipeline.

j) Best practices and Documentation: Maintaining best practices for code quality, documentation, and version control is essential. Proper documentation of the pipeline's steps, assumptions, and dependencies ensures clarity and reproducibility.

k) Testing and Validation: Rigorous testing is conducted to ensure the pipeline's reliability and correctness. Validation of the pipeline's outputs against expected results is a critical quality assurance step.

l) Scalability and Performance: As data volumes or computational demands increase, the pipeline may need to be optimised for scalability and performance. Continuous monitoring is essential to ensure efficient resource usage.
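To make the preprocessing, model building, and evaluation stages above more concrete, here is a minimal sketch using only Python's standard library. The toy records, the function names, and the choice of a nearest-centroid classifier are all assumptions made for illustration; a real project would more likely rely on a dedicated Machine Learning library.

```python
from statistics import mean

# Toy records: (age, city, label). None marks a missing value.
RAW = [
    (25, "london", 0), (32, "leeds", 0), (None, "london", 0), (29, "leeds", 0),
    (51, "london", 1), (None, "leeds", 1), (58, "london", 1), (55, "leeds", 1),
]

def preprocess(records):
    """Impute missing ages with the mean, min-max scale them, one-hot encode city.

    For brevity the statistics are computed on all records; in practice they
    should come from the training split only, to avoid data leakage.
    """
    known = [age for age, _, _ in records if age is not None]
    fill, lo, hi = mean(known), min(known), max(known)
    cities = sorted({city for _, city, _ in records})
    features, labels = [], []
    for age, city, label in records:
        age = fill if age is None else age             # handle missing data
        scaled = (age - lo) / (hi - lo)                # scale to [0, 1]
        one_hot = [1.0 if city == c else 0.0 for c in cities]
        features.append([scaled] + one_hot)
        labels.append(label)
    return features, labels

def train_centroids(features, labels):
    """Model building: store one centroid (mean feature vector) per class."""
    centroids = {}
    for label in set(labels):
        rows = [f for f, y in zip(features, labels) if y == label]
        centroids[label] = [mean(col) for col in zip(*rows)]
    return centroids

def predict(centroids, row):
    """Return the class whose centroid is closest (squared Euclidean distance)."""
    def dist(centroid):
        return sum((a - b) ** 2 for a, b in zip(row, centroid))
    return min(centroids, key=lambda label: dist(centroids[label]))

def accuracy(centroids, features, labels):
    """Evaluation: fraction of records classified correctly."""
    hits = sum(predict(centroids, f) == y for f, y in zip(features, labels))
    return hits / len(labels)

features, labels = preprocess(RAW)

# Hold out every fourth record for testing; the rest is used for training.
test_idx = {i for i in range(len(RAW)) if i % 4 == 3}
train_X = [f for i, f in enumerate(features) if i not in test_idx]
train_y = [y for i, y in enumerate(labels) if i not in test_idx]
test_X = [f for i, f in enumerate(features) if i in test_idx]
test_y = [y for i, y in enumerate(labels) if i in test_idx]

model = train_centroids(train_X, train_y)
print("test accuracy:", accuracy(model, test_X, test_y))
```

The sketch stops at evaluation. Optimisation steps such as hyperparameter tuning or cross-validation would wrap the training and evaluation calls in a loop over candidate settings or data folds.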

Explore our Advanced Data Science Certification and unlock new opportunities in the world of Data Analytics and insights!

Characteristics of Data Science Pipeline

Let’s understand the characteristics of the Data Science Pipeline:

a) Structured Workflow: A Data Science Pipeline follows a structured, step-by-step workflow that guides the Data Analysis and model development process. This structure helps ensure that all necessary tasks are completed in a logical sequence.

b) Automation: Automation is a key feature of Data Science Pipelines. It automates repetitive and time-consuming tasks, like:

a) Data Preprocessing in Machine Learning.

b) Model training.

c) Result reporting.

This leads to increased efficiency and reduced human error.

c) Reproducibility: Reproducibility is a fundamental characteristic of Data Science pipelines. Every step is documented and automated, allowing others to replicate the entire process and ensuring that results can be independently verified.

d) Modularity: Pipelines are often designed with modularity in mind. Each stage or task is a separate module, making it easier to replace or update individual components as needed without affecting the entire pipeline.

e) Scalability: Data Science Pipelines can be designed to handle both small and large datasets. They are scalable, allowing for efficient data processing as data volumes increase.

f) Flexibility: Pipelines are adaptable to changing data sources and requirements. They can be updated to accommodate new data sources, different data formats, or evolving business needs.

g) Consistency: Pipelines enforce consistency in data processing and model development. This is essential for obtaining reliable results and ensuring that Data Analysis is carried out uniformly over time.
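The structured workflow and modularity characteristics described above can be sketched as a list of named stages run in order. This is a simplified illustration using only Python's standard library; the stage functions and the `run_pipeline` helper are hypothetical names, not part of any framework.

```python
from typing import Callable, List, Tuple

Stage = Tuple[str, Callable[[list], list]]

def drop_missing(rows):
    """Remove records that contain a missing (None) value."""
    return [r for r in rows if None not in r]

def fill_missing_with_zero(rows):
    """Alternative cleaning stage: replace missing values with 0."""
    return [tuple(0 if v is None else v for v in r) for r in rows]

def scale_min_max(rows):
    """Rescale the first column of every record to the range [0, 1]."""
    values = [r[0] for r in rows]
    lo, hi = min(values), max(values)
    span = (hi - lo) or 1  # avoid division by zero on a constant column
    return [((r[0] - lo) / span,) + tuple(r[1:]) for r in rows]

def run_pipeline(stages: List[Stage], data: list) -> list:
    """Run each stage in order, feeding the output of one into the next."""
    for name, stage in stages:
        data = stage(data)
        print(f"{name}: {len(data)} rows")
    return data

data = [(10, "a"), (None, "b"), (20, "c"), (30, "d")]

stages = [("clean", drop_missing), ("scale", scale_min_max)]
result = run_pipeline(stages, data)

# Swap one module without touching the rest of the pipeline.
stages[0] = ("clean", fill_missing_with_zero)
result_filled = run_pipeline(stages, data)
```

Because each stage only agrees on its input and output shape, the cleaning step can be replaced while the scaling step and the runner stay unchanged.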

Join our comprehensive Data Science Training and equip yourself with the skills to make meaningful insights from data!

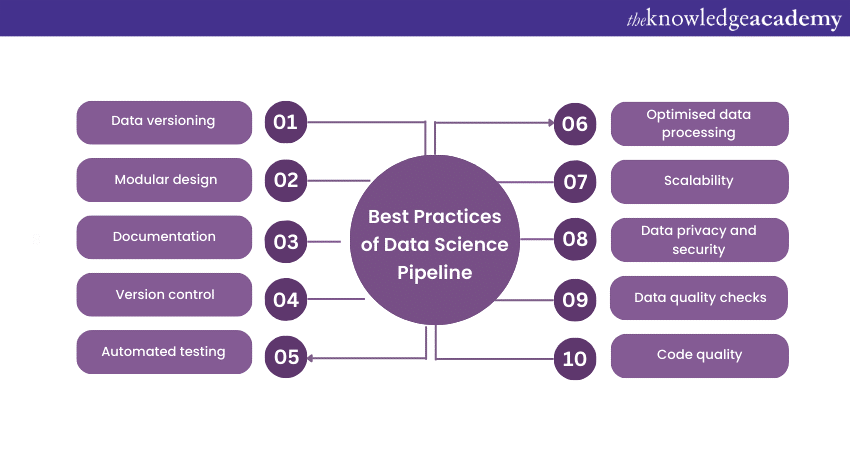

Best Practices of Data Science Pipeline

Best practices for developing a Data Science Pipeline are essential for ensuring that Data Analysis and model development processes are efficient, reliable, and maintainable. Here are some key best practices:

a) Data Versioning: Implement a data versioning system to track changes and updates to datasets. This ensures data consistency and allows for reproducibility of results.

b) Modular Design: Create a modular pipeline with well-defined stages. This makes it easier to maintain and update individual components, improving flexibility and scalability.

c) Documentation: Thoroughly document each stage of the pipeline, including:

a) Code.

b) Data transformations.

c) Model configurations.

Proper documentation enhances transparency and reproducibility.

d) Version Control: Use version control systems like Git to manage code, scripts, and configurations. Version control helps track changes, collaborate effectively, and revert to previous states when necessary.

e) Automated Testing: Develop and implement automated testing procedures to verify the correctness of pipeline components. This mitigates the risk of errors and ensures consistent results.

f) Code Quality: Adhere to coding standards and best practices to maintain clean, well-organised, and readable code. Consistent coding practices make collaboration and maintenance easier.

g) Data Quality Checks: Incorporate data quality checks into the pipeline to identify and handle missing or erroneous data, ensuring the quality and integrity of the dataset.

h) Data Privacy and Security: Implement robust security measures to protect sensitive data. Additionally, ensure compliance with data privacy regulations and best practices for data handling.

i) Scalability: Design the pipeline to handle growing datasets and increased computational demands. Consider parallel processing and distributed computing as needed.

j) Optimised Data Processing: Focus on optimising data processing steps to reduce computational overhead and speed up the pipeline. Utilise efficient algorithms and data structures.
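Two of the practices above, data versioning and data quality checks, can be sketched in a few lines of standard-library Python. The helpers `dataset_version` and `quality_report` are hypothetical names used for illustration. The content hash is only a lightweight fingerprint, assuming the dataset is small and JSON-serialisable; it is not a substitute for a dedicated data versioning tool.

```python
import hashlib
import json

def dataset_version(rows):
    """Return a short content hash that changes whenever the data changes."""
    payload = json.dumps(rows, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()[:12]

def quality_report(rows, required_fields):
    """Count, per required field, the records where it is missing or None."""
    problems = {field: 0 for field in required_fields}
    for row in rows:
        for field in required_fields:
            if row.get(field) is None:
                problems[field] += 1
    return problems

rows = [
    {"id": 1, "age": 34, "city": "London"},
    {"id": 2, "age": None, "city": "Leeds"},
    {"id": 3, "age": 41},
]

version = dataset_version(rows)
report = quality_report(rows, ["age", "city"])
print("dataset version:", version)
print("missing values:", report)
```

Storing the version string alongside each model or report makes it possible to tell later exactly which snapshot of the data produced a given result. A check like `quality_report` can also double as an automated test, for example by failing the pipeline run when any count exceeds an agreed threshold.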

Conclusion

In conclusion, a well-structured and efficiently managed Data Science Pipeline, or a Big Data Pipeline for handling vast volumes of data, is a fundamental component of successful Data Analysis and model development projects. By adhering to best practices such as modular design, documentation, version control, and automated testing, Data Scientists can ensure that their pipelines are robust, reproducible, and maintainable.

Register for our Probability and Statistics for Data Science Training to unlock the key to making data-driven decisions with confidence!

Frequently Asked Questions

How are Machine Learning Models Integrated Into the Data Science Pipeline?

Machine Learning models are integrated into the Data Science pipeline through several key stages:

a) Data collection

b) Data preparation

c) Model selection

d) Training

e) Evaluation

f) Hyperparameter tuning

g) Deployment

h) Monitoring and maintenance

What Tools and Frameworks are Commonly Used to Build Data Science Pipelines?

Here are some popular tools used to build Data Science Pipelines:

a) Apache Airflow

b) Apache Kafka

c) AWS Glue

d) Google Cloud Dataflow

e) Microsoft Azure Data Factory

f) Informatica PowerCenter

g) Talend Data Integration

h) Matillion

i) StreamSets Data Collector

What are the Other Resources and Offers Provided by The Knowledge Academy?

The Knowledge Academy takes global learning to new heights, offering 3,000+ online courses across 490+ locations in 190+ countries. This expansive reach ensures accessibility and convenience for learners worldwide.

Alongside our diverse Online Course Catalogue, encompassing 17 major categories, we go the extra mile by providing a plethora of free educational Online Resources like Blogs, eBooks, Interview Questions and Videos. Tailoring learning experiences further, professionals can unlock greater value through a wide range of special discounts, seasonal deals, and Exclusive Offers.

What is The Knowledge Pass, and How Does it Work?

The Knowledge Academy’s Knowledge Pass, a prepaid voucher, adds another layer of flexibility, allowing course bookings over a 12-month period. Join us on a journey where education knows no bounds.

What are the Related Courses and Blogs Provided by The Knowledge Academy?

The Knowledge Academy offers various Data Science Courses, including the Python Data Science Course and the Predictive Analytics Course. These courses cater to different skill levels and provide comprehensive insights into topics such as data processing.

Our Data, Analytics & AI Blogs cover a range of topics related to Data Science, offering valuable resources, best practices, and industry insights. Whether you are a beginner or looking to advance your Data Science skills, The Knowledge Academy's diverse courses and informative blogs have got you covered.

Lily Turner is a data science professional with over 10 years of experience in artificial intelligence, machine learning, and big data analytics. Her work bridges academic research and industry innovation, with a focus on solving real-world problems using data-driven approaches. Lily’s content empowers aspiring data scientists to build practical, scalable models using the latest tools and techniques.
