
Jenkins is an essential part of DevOps and a familiar name in software development. But have you ever wondered what Jenkins actually is? It is a powerful automation server written in Java, backed by a large and active community that has grown alongside the tool since its creation.

According to Statista, over 43% of developers use Continuous Integration tools like Jenkins in software development. Read on to learn about Jenkins, the free, open-source automation server, along with its functions and features.

Table of Contents

1) So, what is Jenkins anyway?

a) History of Jenkins

b) How Jenkins affected Continuous Integration

2) Architecture of Jenkins

3) Why should you choose Jenkins?

4) Conclusion

So, what is Jenkins anyway?

Jenkins is an extremely popular automation server for building and testing software. It has simplified the Continuous Integration (CI) process and made the Software Development Life Cycle (SDLC) much more efficient. It is highly versatile, capable of running on multiple platforms such as Windows, macOS, Linux, and Unix, using Java servlet containers like Apache Tomcat.

Jenkins has long used the image of a loyal servant, a butler, as its logo to denote its relationship with developers. It has behaved in a similar fashion, taking care of the repetitive tasks of the SDLC and proving its merit with time. Let's understand how this automation tool came to be.

History of Jenkins

The history of Jenkins begins with Kohsuke Kawaguchi, a developer at Sun Microsystems. Kohsuke would frequently receive backlash from his co-workers due to his code breaking the entire build. As a developer, Kohsuke was expected to write a block of code and integrate it into the base code. This meant that if a new block of code was incorrect, its integration would ruin the entire base code when it was run.

Integration can be likened to adding a small component to a large machine. If a small component fails to work properly, it can affect the entire machine. In this scenario, the person responsible for the creation of the component was Kawaguchi.

Kawaguchi decided to change this in 2004 by developing the tool that would become Jenkins, known at the time as the Hudson project. This project allowed him to test his blocks of code before actually adding them to the base code of the project. With automated testing, he could ensure each component was properly tested and fix a faulty component before it affected the working of the entire system.

Eventually, Kohsuke's team learned about Hudson, and they also wanted to simplify their work. Kohsuke was more than happy to comply, and he open-sourced his work to the entire world. Anyone could use it, which benefited them greatly until things started to change in 2010, when Sun Microsystems, the rightful owner of Hudson, came under the ownership of Oracle.

The change in management eventually led to a dispute between Hudson’s open-source community and Oracle about the infrastructure. The project forked, with a large majority of Hudson’s community voting to rename it Jenkins. The Jenkins project as we know it now was thus created in 2011.

As a result of the fork in the original Hudson project, both Hudson and Jenkins continued to operate independently of each other. Hudson got 32 projects over time and was eventually donated to the Eclipse Foundation. Due to a lack of projects, Hudson eventually stopped being maintained and is no longer in development.

Jenkins, along with other CI/CD tools, eventually came under the stewardship of the CD Foundation, an organization under the Linux Foundation. With its rising popularity and open-source nature, it has built a large community for itself. Since then, it has become one of the most frequently used tools in DevOps.

How Jenkins affected Continuous Integration

Jenkins significantly simplified the software development process. To understand what Jenkins is and why we use it, let's first look at how integration worked before it existed. Before the advent of Continuous Integration (CI), teams relied on a process known as Nightly Builds.

Nightly Builds got their name from an automated job that would pull all the code added to a shared repository at night and build it, based on all the changes made throughout the day. However, since the job combined every change at once, the resulting build in the morning would be large.

The resulting large code from Nightly Builds would often suffer from numerous errors. Fixing these errors would require a lot of effort from the developers, as locating a particular error in a large wall of code was time-consuming. This changed when the concept of Continuous Integration was adopted in development firms.

Jenkins played a significant role in the adoption of CI, as it allowed developers to test their code as soon as it was written. This enabled them to identify and fix errors before adding the code to a shared repository or the actual base code. This was a crucial step in simplifying the CI process, as it helped development teams pinpoint mistakes and identify the root causes of errors.
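
The difference between a Nightly Build and per-change integration can be sketched in a few lines of Python. This is a toy model, not how Jenkins is implemented: the commit names, the `Commit` type, and both functions are hypothetical and exist only to show why a failed nightly build leaves every change of the day under suspicion, while checking each change on arrival narrows the search to the change that broke.

```python
from typing import List, Tuple

# Hypothetical model: a commit is a name plus a flag saying whether it breaks the build.
Commit = Tuple[str, bool]


def nightly_build(commits: List[Commit]) -> List[str]:
    """Build everything at once; if the build fails, every commit is a suspect."""
    if any(breaks for _, breaks in commits):
        return [name for name, _ in commits]
    return []


def continuous_integration(commits: List[Commit]) -> List[str]:
    """Check each commit as it arrives; only the failing commits are flagged."""
    return [name for name, breaks in commits if breaks]


day = [
    ("add-login", False),
    ("fix-typo", False),
    ("refactor-db", True),
    ("update-docs", False),
]

print("nightly suspects:", nightly_build(day))
print("CI suspects:", continuous_integration(day))
```

With the sample data, the nightly approach reports all four commits as suspects, while the per-commit check points only at `refactor-db`.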

This change in the development process had a positive impact on the speed of deployments at multiple levels. Deployments no longer depended on any one individual, and the automated nature of Jenkins allowed the development process to proceed smoothly even in that person's absence. Additionally, it eliminated the need for frequent rollouts after short intervals.

Architecture of Jenkins

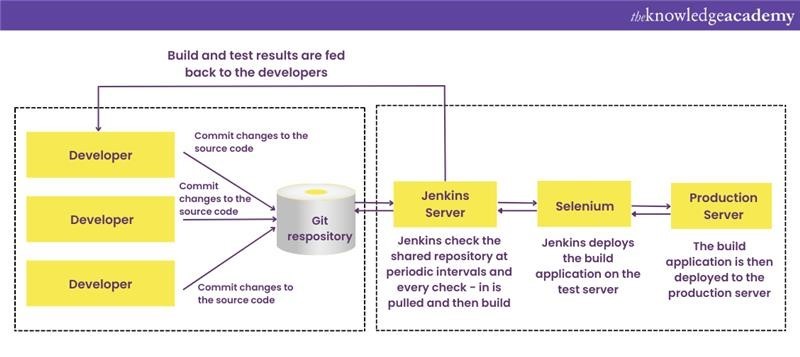

Jenkins follows a specific order in the software development pipeline to ensure successful operation. This structure shapes its workflow and architecture. When a developer creates a small block of code to add to the base code of the software, they submit it to a shared repository such as GitHub. The CI system then checks the repository for any changes.

Once the CI system detects changes in the repository, such as newly submitted code, the Build Server proceeds to build it as an executable file. If the executable file runs successfully, the deployment team is notified to proceed further with the code. Similarly, if a build fails, developers are notified so they can work on it immediately.
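
The detect, build, and notify cycle described above can be sketched as a single polling step. This is a minimal illustration under stated assumptions: `poll_once`, the revision names, and the team names are all hypothetical, and the repository and build tool are replaced by simple stand-in functions rather than real Jenkins or Git calls.

```python
from typing import Callable, List, Optional


def poll_once(
    last_seen: Optional[str],
    get_head: Callable[[], str],
    build: Callable[[str], bool],
    notify: Callable[[str, str], None],
) -> str:
    """Run one polling cycle and return the revision that now counts as seen."""
    head = get_head()
    if head == last_seen:
        return head  # nothing new in the repository, so nothing to build
    if build(head):
        notify("deployment-team", f"build of {head} succeeded")
    else:
        notify("developers", f"build of {head} failed")
    return head


# Stand-ins for a real repository and build tool (assumptions for this sketch).
messages: List[str] = []


def record(team: str, text: str) -> None:
    messages.append(f"{team}: {text}")


seen = poll_once("rev-1", lambda: "rev-2", lambda rev: True, record)
seen = poll_once(seen, lambda: "rev-2", lambda rev: True, record)  # no change, no build
seen = poll_once(seen, lambda: "rev-3", lambda rev: False, record)

for line in messages:
    print(line)
```

The second call produces no message because the repository head has not moved since the previous cycle.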

Jenkins takes the additional step of testing the block of code on a test server, providing feedback to the development team accordingly. Any error-free code within the CI server is then provided to the production server for deployment. From this point onwards, a Master Server is responsible for distributing the workload across the Slave Servers.
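
The idea that only error-free code reaches production can be pictured as a sequence of stages in which a failure stops everything after it. The sketch below is an assumption-laden illustration, not Jenkins' own pipeline syntax: `run_pipeline` and the stage names are hypothetical, and each stage is a plain function returning success or failure.

```python
from typing import Callable, List, Tuple

Stage = Tuple[str, Callable[[], bool]]


def run_pipeline(stages: List[Stage]) -> List[str]:
    """Run stages in order and stop at the first failure; return a log of outcomes."""
    log: List[str] = []
    for name, step in stages:
        ok = step()
        log.append(f"{name}: {'ok' if ok else 'FAILED'}")
        if not ok:
            break  # later stages (such as deploy) never run after a failure
    return log


good = run_pipeline([("build", lambda: True), ("test", lambda: True), ("deploy", lambda: True)])
bad = run_pipeline([("build", lambda: True), ("test", lambda: False), ("deploy", lambda: True)])

print(good)
print(bad)
```

In the failing run, the log ends at the test stage, so the deploy stage is never attempted.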

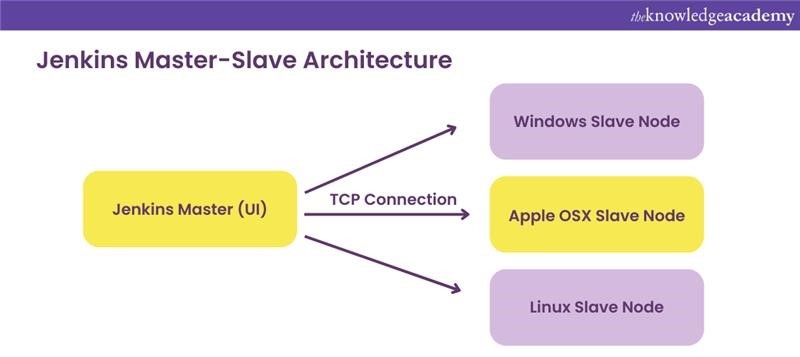

What is Master-Slave Architecture in Jenkins

The term Master-Slave Architecture derives from multiple servers working for a single server. The server that is responsible for distributing the workload across different servers is referred to as a Master Server. The servers that focus on the distributed workload handed to them are referred to as Slave Servers.

This Master-Slave Architecture of Jenkins allows for more efficient and faster deployment by dividing the workload. Additionally, the presence of multiple Slave Servers in the Jenkins Architecture makes it possible to build the same code for different operating systems.
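
To make the division of work concrete, here is a small sketch of a Master handing jobs to Slave Servers grouped by operating system. It is a simplified assumption, not Jenkins' actual scheduling logic: the `distribute` function, the server names, and the round-robin rule are all hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def distribute(
    jobs: List[Tuple[str, str]], slaves: Dict[str, List[str]]
) -> Dict[str, List[str]]:
    """Assign each (job, os) pair to a slave for that OS, rotating round-robin."""
    assignments: Dict[str, List[str]] = defaultdict(list)
    next_index: Dict[str, int] = defaultdict(int)
    for job, os_name in jobs:
        candidates = slaves.get(os_name, [])
        if not candidates:
            assignments["unassigned"].append(job)  # no slave runs this OS
            continue
        slave = candidates[next_index[os_name] % len(candidates)]
        next_index[os_name] += 1
        assignments[slave].append(job)
    return dict(assignments)


slaves = {"linux": ["linux-1", "linux-2"], "windows": ["win-1"]}
jobs = [
    ("build-a", "linux"),
    ("build-b", "linux"),
    ("build-c", "windows"),
    ("build-d", "linux"),
    ("build-e", "macos"),
]

plan = distribute(jobs, slaves)
for server, assigned in plan.items():
    print(server, assigned)
```

The Linux jobs alternate between the two Linux servers, the Windows job goes to the only Windows server, and the macOS job stays unassigned because no server in this example runs macOS.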

Why should you choose Jenkins?

Jenkins is one of the most frequently used free automation tools in the software development process. It is open source, plugin compatible, and extremely efficient, which makes it very popular in DevOps. There are many benefits of using it in the SDLC. Let's go through some of the reasons and benefits of using it.

1) Ease of installation: Jenkins is written in Java, a platform-independent language, so it can run on nearly all platforms. This allows you to install it on systems like macOS, Windows, and Linux. It also has a built-in help function, which allows you to set it up and configure it with relative ease.

2) Distributed Architecture: Jenkins is able to use its Master-Slave Architecture to distribute its work. This allows it to work on multiple different platforms at the same time. It also reduces the time taken in deployment, making it extremely efficient.

3) Plugin and Community Support: Jenkins is one of the most plugin-compatible automation tools and is able to work with most other DevOps tools. The scope of what Jenkins can do can be extended simply by installing the right plugin. Its open-source nature allows the community to improve upon its foundation, allowing both the community and the tool to grow.

4) Higher Code Coverage: The term ‘Code Coverage’ is used in software development to refer to the proportion of a codebase that is executed when its tests run. Because Jenkins runs the tests on every build, it can help a team track and raise the code coverage of a development process, which leads to better metrics. By maintaining a higher standard of transparency in development, a team is able to take better steps to meet its goals.

5) Less Broken Code: Since Jenkins tests code on every change, the base code faces fewer issues. New blocks of code in the shared repository are only integrated once the correctness of the code is verified. This removes the need to identify, locate, and fix errors within the large base code, and it reduces the potential for failure within the code.
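
Code coverage, mentioned in point 4 above, is commonly reported as the percentage of executable lines that the tests actually ran. The calculation below is a minimal sketch of that line-based measure; the `line_coverage` function and the line numbers are made up for illustration, and real coverage tools collect this data automatically.

```python
from typing import Set


def line_coverage(executed_lines: Set[int], executable_lines: Set[int]) -> float:
    """Return the percentage of executable lines that the tests ran."""
    if not executable_lines:
        return 100.0  # convention chosen for this sketch: nothing to cover
    covered = executed_lines & executable_lines
    return 100.0 * len(covered) / len(executable_lines)


executable = {1, 2, 3, 4, 5, 6, 7, 8}  # lines that could run
executed = {1, 2, 3, 5, 8}             # lines the tests actually ran

print(f"{line_coverage(executed, executable):.1f}%")
```

Here five of the eight executable lines ran, so the coverage is 62.5%.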

Conclusion

Jenkins is one of the most important tools that have revolutionised Continuous Integration and Continuous Delivery. Its powerful automation server allows you to test your code blocks every time they change. With this blog, you’ve hopefully gained a better grasp of what Jenkins is, how it was made, and how it is used. Thank you for reading.

Lily Turner is a data science professional with over 10 years of experience in artificial intelligence, machine learning, and big data analytics. Her work bridges academic research and industry innovation, with a focus on solving real-world problems using data-driven approaches. Lily’s content empowers aspiring data scientists to build practical, scalable models using the latest tools and techniques.
