It's no secret that Machine Learning models are moving from research to real-world impact at a dizzying pace. This also means the demand for individuals who can bridge the gap between Data Science and operations is higher than ever. So, if you are ready to navigate this cutting-edge maze of Cloud platforms, pipelines and deployment strategies, we've got you covered with a list of MLOps Interview Questions and answers.

From deployment strategies to handling data drift, these MLOps Interview Questions are designed to sharpen your knowledge and elevate your chances of landing that dream role. So, wait no more, dive into this blog, hone your professional edge and keep the heartbeat of modern Machine Learning (ML) success going!

Table of Contents

1) Top 40 MLOps Interview Questions With Answers

a) What is MLOps and how does it differ from traditional DevOps?

b) Explain the concept of model versioning and why it is important in MLOps.

c) Describe the role of CI/CD in MLOps

d) What are the key components of an MLOps pipeline?

e) How do you handle data drift in a production Machine Learning model?

f) Explain the importance of monitoring Machine Learning models in production

g) What tools and frameworks have you used for MLOps?

h) Describe a time when you faced challenges in deploying a Machine Learning model. How did you overcome them?

i) How do you manage feature stores and ensure consistent data across training and inference in MLOps?

j) What is the purpose of feature engineering in the MLOps process?

2) Tips for Preparing for an MLOps Interview

3) Conclusion

Top 40 MLOps Interview Questions With Answers

Machine Learning Operations (MLOps) focuses on automating and standardising the processes of building, deploying, and maintaining Machine Learning models in production. If you’re aiming for a role in this field, the following list of the top MLOps Interview Questions and answers will help you prep like a pro. Let's dive in:

What is MLOps and How Does it Differ from Traditional DevOps?

This will check if you understand the fundamentals of MLOps.

Sample Answer:

"MLOps is like DevOps for Machine Learning, but with added complexity. While DevOps focuses on software delivery, MLOps handles ML-specific challenges like model training, data versioning and monitoring model performance. It integrates Data Science workflows into production pipelines for continuous improvement and scalability."

Explain the concept of model versioning and why it is important in MLOps

This question will assess your knowledge of tracking models over time.

Sample Answer:

“Model versioning tracks changes in model parameters, architecture, and training data. It’s crucial because it ensures reproducibility, enables rollback to previous versions, and allows teams to compare performance over time. Without it, managing models in production can become messy and error-prone.”
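
If you are asked to make this concrete, a small sketch can help. The example below is a toy, in-memory registry, not the API of any real tool: the class name `ModelRegistry`, the file names, and the metric values are all made up for illustration. It shows the two ideas from the answer, a version id derived from parameters and training data, and rollback to the previous version.

```python
import hashlib
import json
from dataclasses import dataclass, field


@dataclass
class ModelRegistry:
    """Toy in-memory registry (hypothetical); a real one would persist its records."""
    versions: list = field(default_factory=list)

    def register(self, params: dict, data_ref: str, metrics: dict) -> str:
        # Hash parameters plus the training-data reference so identical
        # inputs always map to the same version id.
        payload = json.dumps({"params": params, "data": data_ref}, sort_keys=True)
        version_id = hashlib.sha256(payload.encode()).hexdigest()[:12]
        self.versions.append(
            {"id": version_id, "params": params, "data": data_ref, "metrics": metrics}
        )
        return version_id

    def rollback(self) -> dict:
        # Drop the newest version and return the one before it.
        if len(self.versions) < 2:
            raise ValueError("no earlier version to roll back to")
        self.versions.pop()
        return self.versions[-1]


# Illustrative values only.
registry = ModelRegistry()
v1 = registry.register({"max_depth": 4}, "sales_2024_01.parquet", {"auc": 0.81})
v2 = registry.register({"max_depth": 6}, "sales_2024_02.parquet", {"auc": 0.79})
restored = registry.rollback()  # back to the first version
```

In practice you would name the registry you actually used, but being able to explain what a version record must contain is what the interviewer is listening for.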

Describe the role of CI/CD in MLOps

This question will help spotlight how well you understand automation in the ML lifecycle.

Sample Answer:

“CI/CD in MLOps automates the process of testing, validating, and deploying models. It ensures that updates to data, code, or models flow smoothly into production with minimal manual intervention. This reduces deployment time, improves reliability, and supports continuous improvement of ML systems.”

What are the key components of an MLOps pipeline?

This question will help gauge your knowledge of end-to-end MLOps workflow.

Sample Answer:

“An MLOps pipeline usually includes data ingestion, preprocessing, model training, evaluation, deployment, and monitoring. Supporting components like version control, CI/CD, and infrastructure automation are essential. Together, they ensure models are developed, deployed, and maintained efficiently with high reliability in production environments.”

How do you handle data drift in a production Machine Learning model?

This will test your problem-solving skills in maintaining model accuracy over time.

Sample Answer:

“I detect data drift by monitoring input data distributions and model performance metrics. If drift is detected, I retrain the model using updated data, adjust preprocessing, or refine features. Automating detection and retraining helps maintain accuracy without constant manual oversight.”
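
One common way to quantify "monitoring input data distributions" is the Population Stability Index (PSI). The sketch below is a minimal pure-Python version, assuming a single numeric feature and equal-width bins taken from the reference sample; the function name `psi` and the sample data are illustrative. A frequently quoted rule of thumb treats values above roughly 0.2 as significant drift, but the right threshold depends on your use case.

```python
import math


def psi(expected, actual, bins=10):
    """Population Stability Index between a reference and a live sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all values are equal

    def proportions(values):
        counts = [0] * bins
        for v in values:
            # Clamp values outside the reference range into the edge bins.
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # A small floor avoids log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


reference = [i / 100 for i in range(1000)]  # training-time feature values
shifted = [x + 3 for x in reference]        # live values after a shift
drift_score = psi(reference, shifted)
```

A score like `drift_score` can then feed an alert or a retraining trigger rather than being checked by hand.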

Explain the importance of monitoring Machine Learning models in production

This question is designed to check your awareness of post-deployment responsibilities.

Sample Answer:

“Monitoring ensures models stay accurate, fair, and reliable over time. In production, data patterns and user behaviour change. Without monitoring, performance may degrade unnoticed. Tracking metrics like accuracy, latency, and bias helps trigger timely retraining or adjustments to keep results trustworthy.”

What tools and frameworks have you used for MLOps?

This will help the interviewer learn about your hands-on experience and tool familiarity.

Sample Answer:

“I’ve used MLflow for experiment tracking, Kubeflow and Airflow for orchestration, and Docker for containerisation. For deployment, I’ve worked with Kubernetes and Cloud services like AWS SageMaker and Azure ML. Each tool addresses specific stages in the MLOps lifecycle effectively.”

Describe a time when you faced challenges in deploying a Machine Learning model. How did you overcome them?

This will help assess your problem-solving skills, adaptability and ability to troubleshoot and resolve issues during ML model deployment.

Sample Answer:

“Once, a model deployment failed due to incompatible library versions between development and production. I containerised the environment with Docker, ensuring consistency. It taught me the importance of reproducible environments and tighter integration between Data Science and Engineering teams early on.”

How do you manage feature stores and ensure consistent data across training and inference in MLOps?

The intent of this question is to assess whether you understand the use of feature stores for consistent feature engineering and data handling in an MLOps workflow.

Sample Answer:

"In MLOps, I manage feature stores by defining features once and reusing them for both training and inference, which avoids training–serving skew. I ensure data consistency with schema checks, validation tools like Great Expectations, and monitoring for drift. I also version features and pipelines—often using tools like Feast or MLflow—for reproducibility and collaboration."
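
The "define once, reuse everywhere" idea can be illustrated without any feature store product. The sketch below is plain Python, not the Feast API; the feature names and the `FEATURES` mapping are hypothetical. Both the training path and the serving path call the same function, which is what prevents training–serving skew.

```python
from datetime import date

# One set of feature definitions, shared by training and serving.
FEATURES = {
    "days_since_signup": lambda row, today: (today - row["signup_date"]).days,
    "orders_per_month": lambda row, today: row["orders"] / max(row["months_active"], 1),
}


def build_features(row: dict, today: date) -> dict:
    """Compute every registered feature for one raw record."""
    return {name: fn(row, today) for name, fn in FEATURES.items()}


def training_rows(rows, today):
    # Batch path used when building a training set.
    return [build_features(r, today) for r in rows]


def serve(row, today):
    # Online path used at inference time; same definitions, same results.
    return build_features(row, today)
```

A real feature store adds storage, point-in-time lookups and versioning on top, but the consistency guarantee comes from this shared definition.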

What is the purpose of feature engineering in the MLOps process?

This question will check if you know how feature engineering fits into ML workflows.

Sample Answer:

“Feature engineering transforms raw data into meaningful inputs that improve model performance. In MLOps, it’s important to automate and version these transformations so they remain consistent between training and production, reducing discrepancies and improving model reliability after deployment.”

What is the difference between MLOps, ModelOps, and AIOps?

This question is intended to check your understanding of related but distinct operational concepts.

Sample Answer:

“MLOps focuses on the end-to-end lifecycle of ML models, ModelOps handles deployment and governance for all AI models, and AIOps applies AI to improve IT operations. They overlap but differ in scope, stakeholders, and operational goals.”

How do you ensure ML pipelines are compliant with regulatory and ethical requirements?

With this question, the interviewer wants to evaluate your understanding of regulatory compliance and AI ethics in the context of MLOps.

Sample Answer:

"To ensure ML pipelines are compliant, I start by aligning data handling with regulations like GDPR or HIPAA. I also embed data validation and lineage tracking in the pipeline, so we know where features come from and can audit them. On the ethical side, I run bias and fairness checks to avoid unintended discrimination and document model decisions for transparency."

How does monitoring differ from logging?

This will assess your understanding of the distinct roles of monitoring and logging in tracking model performance.

Sample Answer:

“Logging records system events for historical reference, while monitoring actively tracks metrics and alerts on unusual patterns. In MLOps, logs help investigate issues after they happen, whereas monitoring aims to detect and prevent problems before they impact performance.”
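
The distinction is easy to show side by side. In the sketch below, which uses only Python's standard `logging` module, the class `LatencyMonitor`, the window size and the threshold are illustrative assumptions. The log call records each event for later investigation, while the monitor evaluates a rolling metric and raises an alert as soon as it crosses a limit.

```python
import logging
from collections import deque

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("inference")


class LatencyMonitor:
    """Tracks a rolling mean of latency and flags threshold breaches."""

    def __init__(self, window=5, threshold_ms=200.0):
        self.samples = deque(maxlen=window)
        self.threshold_ms = threshold_ms

    def observe(self, latency_ms: float) -> bool:
        self.samples.append(latency_ms)
        mean = sum(self.samples) / len(self.samples)
        return mean > self.threshold_ms


def handle_request(monitor, request_id, latency_ms):
    # Logging: record what happened, for later investigation.
    log.info("request=%s latency_ms=%.1f", request_id, latency_ms)
    # Monitoring: evaluate a metric now and alert if it looks wrong.
    if monitor.observe(latency_ms):
        log.warning("ALERT: rolling mean latency above %.0f ms", monitor.threshold_ms)
        return True
    return False
```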

What testing should be done before deploying an ML model into production?

This question will help assess your range of knowledge regarding Quality Assurance practices.

Sample Answer:

“I run unit tests for code, validation tests for data integrity, performance benchmarks, and bias checks. I also test model behaviour under edge cases. This ensures the model is robust, fair, and production-ready before release.”

What is the A/B split approach of model evaluation?

This question will check your knowledge of model evaluation strategies.

Sample Answer:

“A/B testing splits users into groups where each group sees a different model version. By comparing performance metrics like accuracy or engagement between groups, we can determine which model delivers better results before making a full-scale deployment.”
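
A detail worth mentioning is how users are split. One widely used approach is deterministic hashing, so the same user always sees the same model version. The sketch below assumes string user ids; the function name `assign_variant` and the experiment name are illustrative.

```python
import hashlib


def assign_variant(user_id: str, experiment: str, treatment_share: float = 0.5) -> str:
    """Deterministically assign a user to model version A or B."""
    # Including the experiment name gives each experiment its own split.
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode()).hexdigest()
    bucket = int(digest[:8], 16) / 0x100000000  # value in [0, 1)
    return "B" if bucket < treatment_share else "A"


# Roughly half of 10,000 synthetic users should land in each group.
share_b = sum(
    assign_variant(f"user-{i}", "ranker-v2") == "B" for i in range(10_000)
) / 10_000
```

Because assignment depends only on the id and experiment name, no lookup table is needed and results are reproducible across services.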

What is the difference between A/B testing model deployment and Multi-arm Bandit?

This will assess your knowledge of different model evaluation and traffic allocation strategies.

Sample Answer:

“A/B testing splits traffic in fixed proportions, often evenly, and gathers data before deciding. A Multi-Arm Bandit dynamically allocates more traffic to better-performing models in real time. The latter speeds up convergence to the best option and reduces exposure to underperforming models.”
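
To show what "dynamically allocates more traffic" means, here is a minimal epsilon-greedy bandit, one of the simplest bandit strategies (others, such as Thompson sampling, exist). The model names and success rates in `simulate` are invented for the demonstration.

```python
import random


class EpsilonGreedy:
    """Explore a random arm with probability epsilon, otherwise exploit the best."""

    def __init__(self, arms, epsilon=0.1, seed=0):
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.pulls = {a: 0 for a in arms}
        self.reward = {a: 0.0 for a in arms}

    def choose(self):
        # Explore at random, and also when nothing has been tried yet.
        if self.rng.random() < self.epsilon or not any(self.pulls.values()):
            return self.rng.choice(list(self.pulls))
        # Exploit: pick the arm with the highest observed mean reward.
        return max(self.pulls, key=lambda a: self.reward[a] / max(self.pulls[a], 1))

    def update(self, arm, r):
        self.pulls[arm] += 1
        self.reward[arm] += r


def simulate(true_rates, rounds=2000, seed=1):
    """Route simulated requests; true_rates are hypothetical success rates."""
    bandit = EpsilonGreedy(list(true_rates), epsilon=0.1, seed=seed)
    world = random.Random(seed + 1)
    for _ in range(rounds):
        arm = bandit.choose()
        bandit.update(arm, 1.0 if world.random() < true_rates[arm] else 0.0)
    return bandit.pulls


pulls = simulate({"model_a": 0.1, "model_b": 0.5})
```

In this simulation most traffic ends up on the stronger model, whereas a fixed A/B split would keep sending the same share to the weaker one until the test ends.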

What is the difference between Canary and Blue-green strategies of deployment?

This question will help the interviewer assess your knowledge regarding deployment strategy.

Sample Answer:

“In canary deployment, a new model is released to a small portion of users first. In blue-green, two environments run in parallel, and traffic is switched fully once the new version is ready. Canary is gradual, blue-green is a switch.”
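
The difference comes down to how traffic is routed. The sketch below models both strategies with one hypothetical `Router` class: a canary sends a configurable share of requests to the new version, while a blue-green switch moves everything at once. Version names and the 10% share are illustrative.

```python
import random


class Router:
    """Toy traffic router illustrating canary and blue-green rollouts."""

    def __init__(self, stable="v1", seed=0):
        self.stable = stable
        self.candidate = None
        self.candidate_share = 0.0
        self.rng = random.Random(seed)

    def start_canary(self, version, share):
        # Canary: only a fraction of requests reach the new version.
        self.candidate, self.candidate_share = version, share

    def blue_green_switch(self, version):
        # Blue-green: all traffic moves to the new environment at once.
        self.stable, self.candidate, self.candidate_share = version, None, 0.0

    def route(self):
        if self.candidate and self.rng.random() < self.candidate_share:
            return self.candidate
        return self.stable


router = Router()
router.start_canary("v2", share=0.1)
canary_hits = sum(router.route() == "v2" for _ in range(10_000))
router.blue_green_switch("v2")
```

In a real system the router would be a load balancer or service mesh rule, and the old blue environment would stay up for a while so the switch can be reversed quickly.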

Why would you monitor feature attribution rather than feature distribution?

This will help evaluate your understanding of monitoring model interpretability.

Sample Answer:

“Feature attribution shows which inputs influence predictions most, helping detect shifts in model behaviour even when distributions look stable. It can uncover subtle drifts in decision-making that raw feature statistics might miss.”

What are the ways of packaging ML Models?

Your answer to this question will help the interviewer assess your deployment readiness knowledge.

Sample Answer:

“Models can be packaged as Docker containers, Python packages, REST APIs, or saved in formats like ONNX or TensorFlow SavedModel. The choice depends on deployment environment, scalability needs, and compatibility with serving infrastructure.”

How do you create Infrastructure in MLOps?

This will help assess your ability to design, provision and manage infrastructure that supports the end-to-end MLOps workflow.

Sample Answer:

“I use Infrastructure-as-Code tools like Terraform or CloudFormation to define compute, storage, and networking resources. This ensures consistent, repeatable environments for data processing, training, and deployment, supporting scalability and collaborative development in MLOps workflows.”

How do you deploy ML models on the Cloud?

This question will help spotlight how familiar you are with Cloud deployment processes.

Sample Answer:

“I package the model, upload it to a Cloud service like AWS SageMaker, Azure ML, or Google Cloud’s Vertex AI (the successor to AI Platform), and configure an endpoint. I set scaling rules, secure access, and integrate monitoring to keep the deployment stable and responsive.”

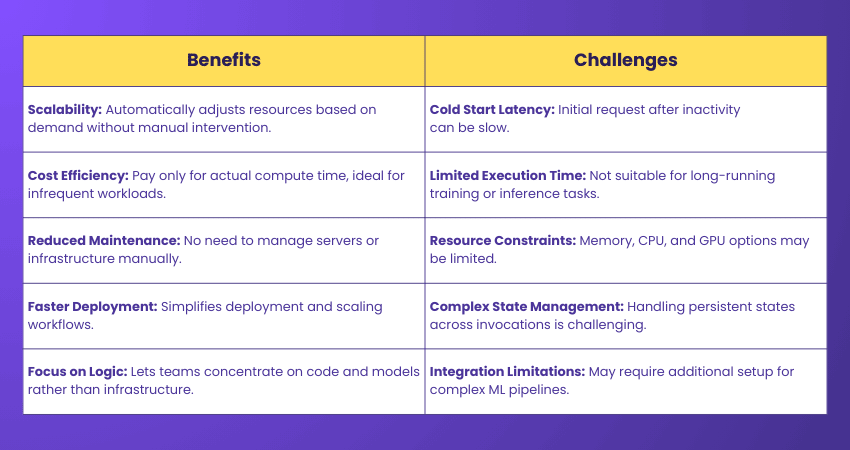

What are the benefits and challenges of using serverless architectures for ML model deployment?

This question is designed to evaluate your understanding of serverless trade-offs.

Sample Answer:

“Serverless offers scalability, lower maintenance, and cost efficiency for sporadic workloads. Challenges include cold start latency, limited execution time, and restricted resources. It’s great for lightweight inference tasks but not always ideal for heavy real-time model serving.”

How do you manage computational resources for ML model training in a Cloud environment?

This question will help the interviewer check your resource optimisation skills.

Sample Answer:

“I choose the right instance types, use autoscaling, and leverage spot instances for cost savings. I also optimise batch sizes, parallelise workloads, and monitor GPU/CPU utilisation to avoid over-provisioning while ensuring training completes efficiently.”

How do you ensure data security and compliance when deploying ML models in the Cloud?

This will evaluate your knowledge of safeguarding data and meeting regulatory requirements.

Sample Answer:

“I enforce encryption for data at rest and in transit, manage access via IAM policies, and comply with relevant regulations like GDPR. I also audit logs regularly and follow secure deployment practices to minimise risks.”

What strategies do you use for managing Cloud costs associated with ML model training and deployment?

This is one of the most important MLOps Interview Questions because it’s intended to test your strategies for cost-optimisation.

Sample Answer:

“I use reserved or spot instances, schedule shutdowns for idle resources, and optimise pipelines for efficiency. Tracking costs with monitoring tools helps identify waste, while selecting the right compute for each task avoids unnecessary expenses.”

What is the difference between edge and Cloud deployment in MLOps?

This question will assess your understanding of Edge and Cloud deployment approaches.

Sample Answer:

“Edge deployment runs models locally on devices for low-latency responses without constant connectivity. Cloud deployment offers scalability and centralised management but depends on internet access. The choice depends on use case, latency needs, and data sensitivity.”

How do you ensure high availability and fault tolerance for ML models in production?

This question will check your strategies for maintaining the continuous availability and resilience of ML models.

Sample Answer:

“I use load balancing, multi-region deployment, and redundant instances. Health checks and automatic failover ensure uptime. Combining these with robust monitoring helps maintain availability even during hardware failures or traffic spikes.”

Describe a time you had to troubleshoot a model in production. What steps did you take?

This question will help the interviewer assess your problem-solving in real-world scenarios.

Sample Answer:

“A model’s accuracy dropped suddenly. I checked logs, monitored data inputs, and discovered a schema change in upstream data. I updated preprocessing, retrained the model, and implemented schema validation to prevent similar issues.”
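
The schema validation mentioned in this answer can be as simple as checking column names and types before data reaches the model. The sketch below is a hand-rolled check, not the API of a validation library; `EXPECTED_SCHEMA` and its columns are hypothetical.

```python
EXPECTED_SCHEMA = {"user_id": int, "amount": float, "country": str}


def validate_record(record: dict, schema: dict = EXPECTED_SCHEMA) -> list:
    """Return a list of schema violations; an empty list means the record is valid."""
    errors = []
    for name, expected_type in schema.items():
        if name not in record:
            errors.append(f"missing column: {name}")
        elif not isinstance(record[name], expected_type):
            actual = type(record[name]).__name__
            errors.append(f"{name}: expected {expected_type.__name__}, got {actual}")
    # Columns the pipeline does not know about often signal an upstream change.
    for name in sorted(record.keys() - schema.keys()):
        errors.append(f"unexpected column: {name}")
    return errors
```

Running a check like this at ingestion turns a silent accuracy drop into an explicit, early failure.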

How do you handle model versioning in a collaborative environment?

This will assess your ability to manage and track model versions effectively while enabling seamless collaboration.

Sample Answer:

“I use a shared model registry like MLflow or SageMaker Model Registry. Every model version is tagged with metadata, performance metrics, and associated datasets. This ensures everyone can track changes and roll back when needed.”

Describe a challenging situation where you had to work with cross-functional teams. How did you handle effective collaboration?

This question is intended to evaluate how well you handle collaboration with cross-functional teams.

Sample Answer:

“In one project, engineers, data scientists, and product managers had conflicting priorities. I scheduled regular syncs, clarified goals, and documented decisions. Creating a shared understanding helped align efforts, leading to a smooth deployment despite initial friction.”

How do you approach scaling ML models to handle increased user load?

This question is designed to evaluate your strategies regarding scalability in ML models.

Sample Answer:

“I use auto-scaling clusters, optimise inference code, and batch predictions when possible. Deploying models on GPU-enabled instances or using dedicated serving frameworks like TensorFlow Serving helps keep performance high while handling spikes in user traffic efficiently.”

How do you handle stakeholder expectations when model performance does not meet initial predictions?

This question will help gauge your communication skills and ability to manage stakeholder expectations.

Sample Answer:

“I share clear performance metrics, explain influencing factors like data quality, and propose actionable improvements. Keeping stakeholders involved in trade-off decisions builds trust, even if performance falls short initially. Transparency turns setbacks into collaborative problem-solving opportunities.”

Can you describe a time when you had to balance technical debt with delivering a project on time?

This question will assess your decision-making approach under constraints.

Sample Answer:

“In one case, we used a simpler model to meet a launch deadline, noting improvements for later. I documented the debt clearly and scheduled refactoring post-release, ensuring delivery while planning for long-term stability and performance.”

How would you implement a CI/CD pipeline for Machine Learning models?

This will assess your ability to design and automate a CI/CD pipeline that streamlines deployment of Machine Learning models.

Sample Answer:

“I integrate version control, automated testing, model validation, and deployment scripts. Using tools like Jenkins or GitHub Actions, the pipeline triggers on code or data changes, ensuring models are tested and deployed consistently with minimal manual steps.”

What are the best practices for managing data in MLOps?

Your answer to this question will help the interviewer assess your understanding of data governance.

Sample Answer:

“I maintain versioned datasets, validate data quality before training, and automate preprocessing steps. Using tools like DVC or Delta Lake ensures consistency, while strong governance policies protect sensitive information and ensure compliance with regulations.”

How do you handle feature engineering and preprocessing in an MLOps pipeline?

This question is intended to evaluate your pipeline integration skills.

Sample Answer:

“I build reusable, version-controlled preprocessing scripts integrated with the training pipeline. This ensures features generated during training match those used in production, reducing inconsistencies and making retraining easier when data changes.”

How do you implement model monitoring in a production environment?

This question will help evaluate your approach to tracking model performance in production.

Sample Answer:

“I track key metrics like accuracy, latency, and error rates. For drift detection, I monitor input data distributions. Automated alerts trigger investigation when thresholds are breached, ensuring quick responses to performance degradation.”

How do you ensure the reproducibility of experiments in Machine Learning?

This will assess your ability to ensure ML experiments can be exactly replicated across runs and environments.

Sample Answer:

“I keep code and configurations in version control, version datasets, pin environment dependencies, and fix random seeds. Experiment tracking tools like MLflow log parameters and results, making it easy to rerun and validate experiments exactly as before.”
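
Two habits do most of the work here: seeding every source of randomness from the configuration, and fingerprinting that configuration so each result can be traced back to it. The sketch below uses a stand-in for training; the function `run_experiment` and the config keys are illustrative assumptions.

```python
import hashlib
import json
import random


def run_experiment(config: dict) -> dict:
    """Run a stand-in 'training' job that depends only on the config."""
    # A local, seeded generator keeps the run independent of global state.
    rng = random.Random(config["seed"])
    n = config["n_samples"]
    score = sum(rng.random() for _ in range(n)) / n  # placeholder for a real metric
    # Hash the sorted config so identical settings give the same fingerprint.
    fingerprint = hashlib.sha256(
        json.dumps(config, sort_keys=True).encode()
    ).hexdigest()[:12]
    return {"config_fingerprint": fingerprint, "score": score}
```

Logging the fingerprint alongside the metrics makes it straightforward to confirm that a rerun used exactly the same settings.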

What are the challenges of operationalising Machine Learning models?

This will help assess your awareness of practical hurdles pertaining to ML models.

Sample Answer:

“Key challenges include handling data drift, managing resource costs, ensuring scalability, and integrating with existing systems. Coordinating between Data Science and engineering teams is critical, as is maintaining reproducibility and regulatory compliance.”

How do you implement model retraining in an automated MLOps pipeline?

This question is intended to evaluate how well you understand automation for continuous improvement.

Sample Answer:

“I set up triggers based on data drift or performance metrics. The pipeline pulls new data, preprocesses it, retrains the model, runs validation tests, and deploys only if results meet predefined thresholds.”
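
The trigger-then-gate flow in this answer can be sketched in a few lines. Everything below is a simplified assumption: the thresholds are placeholders, and `train_fn` and `evaluate_fn` stand in for whatever your pipeline uses to train and validate a model.

```python
def should_retrain(drift_score, live_accuracy, drift_limit=0.2, accuracy_floor=0.85):
    """Trigger on either data drift or a drop in live performance."""
    return drift_score > drift_limit or live_accuracy < accuracy_floor


def retraining_cycle(drift_score, live_accuracy, train_fn, evaluate_fn,
                     current_model, min_accuracy=0.85):
    """Retrain when triggered, but deploy only if validation passes."""
    if not should_retrain(drift_score, live_accuracy):
        return current_model, "skipped"
    candidate = train_fn()
    if evaluate_fn(candidate) >= min_accuracy:
        return candidate, "deployed"
    # Keep serving the existing model if the candidate is not good enough.
    return current_model, "rejected"
```

The key point to stress in an interview is the final gate: automation should never push a retrained model to production without checking it against predefined thresholds.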

Tips for Preparing for an MLOps Interview

The following list of proven tips will help you stand out from your competition and ace your next MLOps interview:

Understand the MLOps Lifecycle

Be ready to walk through every stage of an ML model’s journey, from data collection and preprocessing to deployment and monitoring. Share real examples and the tools you’ve used at each step.

Emphasise Collaboration

MLOps thrives on teamwork between Data Science and operations. Discuss your cross-functional experiences and how you ensure smooth communication and workflow across teams.

Showcase Your Toolset

Get comfortable with tools like Docker, Kubernetes, MLflow, and TensorFlow Extended (TFX). Explain how you’ve used them to optimise MLOps processes. Mention how your choice of tools improved efficiency, scalability, or reliability in past projects.

Focus on Reproducibility

Stress the value of reproducibility and describe how you achieve it using version control, consistent environments and detailed documentation. Provide examples of how reproducibility saved time or helped troubleshoot issues in your previous work.

Highlight Monitoring and Maintenance

Share how you track model performance, detect data drift, and implement automated retraining to keep models reliable over time. Explain how proactive monitoring has helped maintain stability and business value in production environments.
