Software Testing plays a critical role in ensuring the quality, functionality, and reliability of applications across modern software development environments. As organisations continue to prioritise quality assurance, professionals with strong Software Testing knowledge and problem-solving skills remain in high demand.

In this blog, you will explore the top Software Testing interview questions and answers covering fundamental, intermediate, and advanced concepts. It helps candidates strengthen technical understanding, improve interview preparation, and build confidence for Software Testing and quality assurance roles.

Table of Contents

1) Basic Software Testing Interview Questions for Freshers

2) Intermediate Software Testing Interview Questions

3) Software Testing Interview Questions for Experienced

4) Conclusion

Basic Software Testing Interview Questions for Freshers

Basic Software Testing interview questions help freshers understand core testing concepts, methodologies, and quality assurance practices commonly asked during interviews. These questions focus on foundational knowledge required to begin a career in Software Testing and quality assurance. The following Software Testing interview questions for freshers cover essential concepts frequently discussed in entry-level interviews:

1) What is Software Testing?

Software Testing is the process of evaluating and verifying that a software application or system functions as expected. It involves executing the software to identify defects, ensuring that it meets specified requirements, and checking if it operates correctly without errors.

2) Why is Software Testing Necessary?

Software Testing is necessary to ensure the quality and reliability of the software product. It helps identify bugs, improve functionality, and prevent costly errors after release. Testing ensures that the product meets the user's needs and complies with regulatory and performance requirements.

3) What are the Different Types of Software Testing?

There are several types of Software Testing, including Functional Testing (like Unit Testing, Integration Testing, System Testing, Smoke Testing and Sanity Testing), Non-functional Testing (like Performance Testing, Security Testing), Manual Testing, and Automated Testing. Each type focuses on different aspects of the software’s functionality and performance.

4) What is a Test Case?

A test case is a set of specific conditions or variables under which a tester determines whether one or more aspects of the system work properly. A test case typically includes input data, execution steps, and expected results, which together confirm that the software is consistent with the requirement.

5) What is Exploratory Testing?

Exploratory Testing is a testing approach where testers actively explore the system without predefined test cases. The tester interacts with the application, discovering defects and issues while learning the application’s behaviour in real-time.

6) What is End-to-end Testing?

End-to-end Testing is the validation of the entire flow of the application from start to end. It proves that the integrated components of the system work as expected and meet the requirements of the users by simulating real-world scenarios.

7) What is a Test Report?

A test report is a document summarising the activities and results of testing. It usually details which tests were run, their outcomes, the defects identified, and the overall coverage achieved. This helps stakeholders make informed decisions about the quality of the software.

8) What is a Test Suite?

A test suite is a collection of test cases aimed at testing a functionality or feature of a software application. It organises tests in a meaningful order and ensures that different scenarios are covered to achieve full coverage.

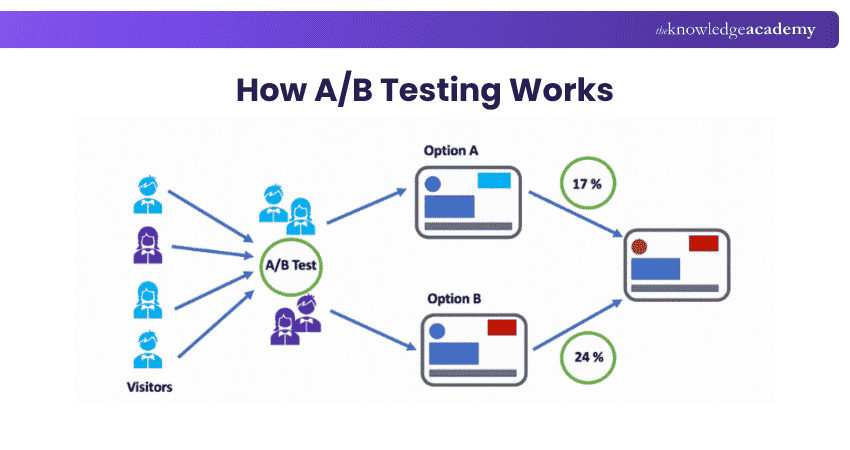

9) What is A/B Testing?

A/B Testing is a method of comparing two versions, A and B, of a feature or product to identify which performs better in terms of user engagement or other metrics. It usually falls under UX Testing and is used to fine-tune interfaces so that they deliver better results for users.

10) What is Dynamic Testing?

Dynamic Testing is the execution of the software under test and the analysis of its behaviour in a run-time environment. It helps detect defects by validating the responses of the software to inputs and ensures that it functions according to design.

11) What is a Test Plan and Contents Available in a Test Plan?

A test plan is a well-planned document that summarises the goals, scope, methodology, and means of testing required. Its contents typically consist of the test strategy, environment, test objectives, test deliverables, resource planning, test schedule, and risk analysis.

12) What is a Test Scenario?

A test scenario is a high-level description of what needs to be tested. It describes a functionality or feature of the system that needs verification and helps ensure that all important functionality is covered during testing.

13) What is a Test Bed?

A test bed is an environment configured for running tests. It encompasses the hardware, software, network setup, and any other items needed to conduct the tests. The test bed mimics the real-world environment so that test results are accurate.

14) What is Test Data?

Test data is input supplied to the software in the testing process. It is designed to simulate real situations, and so it is used for validating whether the software deals with its data correctly, with an output that matches what was expected.

15) What is a Test Harness?

A test harness is a set of tools and test data for the automation of the testing process. A test harness controls the execution of tests, compares actual with expected outcomes, and reports on the performance of the software.
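
The idea of a harness that runs tests, compares actual with expected outcomes, and reports the results can be sketched in a few lines of Python. This is a minimal illustration only: `run_harness` and the three sample cases are hypothetical, and a real harness would normally be built on an established test framework.

```python
def run_harness(cases):
    """Run each (name, function, args, expected) case and record the outcome."""
    report = []
    for name, func, args, expected in cases:
        try:
            actual = func(*args)
            status = "pass" if actual == expected else "fail"
        except Exception as exc:  # an unexpected error is reported, not raised
            actual, status = repr(exc), "error"
        report.append({"name": name, "status": status, "actual": actual})
    return report


# Hypothetical test cases: name, callable under test, arguments, expected value.
cases = [
    ("adds two numbers", lambda a, b: a + b, (2, 3), 5),
    ("upper-cases text", str.upper, ("qa",), "QA"),
    ("divides by zero", lambda a, b: a / b, (1, 0), 0),
]

report = run_harness(cases)
for entry in report:
    print(f"{entry['status']:5} {entry['name']}")
```

The third case is included deliberately to show that the harness keeps running and reports an error when the code under test raises an exception.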

16) What is Test Closure?

Test closure refers to the conclusion of test activities. It includes confirming that all planned tests have been executed, producing the final report, analysing the results, logging lessons learned, and archiving test artifacts for later use.

17) What are the Tasks of Test Closure Activities in Software Testing?

Test closure activities include completing all test execution, producing the test report, holding post-mortem meetings to discuss lessons learned, ensuring defects are closed, and archiving all test-related documents.

18) What are Test Deliverables in Software Testing?

Test deliverables include documents and reports that are shared with stakeholders at different stages of testing. They typically include test plans, test cases, test scripts, test data, defect reports, test summary reports, and test closure reports.

19) What is Manual Testing?

Manual Testing is the process of executing test cases by hand, without the use of automation tools. Testers interact with the software directly to identify defects and verify that it functions as expected.

20) What are the levels of testing?

The levels of testing include Unit Testing (testing individual components), Integration Testing (testing interactions between components), System Testing (testing the entire system), and Acceptance Testing (validating the system against user requirements).

21) What are the Advantages of Manual Testing?

The advantages of Manual Testing include flexibility in exploring edge cases, immediate feedback, and the ability to test usability and User Experience effectively. It is particularly useful for small projects and for tests that require human observation.

22) What are the Disadvantages of Manual Testing?

The disadvantages of Manual Testing include being time-consuming, prone to human error, and less efficient for large-scale or repetitive tasks. It is also difficult to perform in cases where extensive Regression Testing is needed.

23) What is the Process of Manual Testing?

The process of Manual Testing includes analysing requirements, creating test plans, designing test cases, executing the tests, logging defects, and reporting results. Each step is manually performed by testers to verify the system’s functionality.

24) What is Boundary Testing in Manual Testing?

Boundary Testing focuses on testing the limits of input values to verify that the software handles edge cases correctly. For example, if an input range is between 1 and 100, Boundary Testing checks values like 1, 100, 0, and 101.
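
The 1 to 100 example above can be sketched in Python as follows. The `accepts` validator and the `boundary_values` helper are hypothetical names used only for illustration; they stand in for whatever input check the real application performs.

```python
def accepts(value: int, low: int = 1, high: int = 100) -> bool:
    """Hypothetical validator under test: accepts integers in [low, high]."""
    return low <= value <= high


def boundary_values(low: int, high: int) -> list:
    """Return the values just outside and exactly on each boundary."""
    return [low - 1, low, high, high + 1]


# Check every boundary value and record whether the validator accepted it.
results = {value: accepts(value) for value in boundary_values(1, 100)}
print(results)
```

The values on the boundary (1 and 100) should be accepted, while the values just outside it (0 and 101) should be rejected.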

25) What is Automation Testing?

Automation Testing involves using tools and scripts to automate the execution of test cases. It is especially useful for repetitive tasks and Regression Testing, helping to save time and reduce human error.

26) What are the Advantages of Automation Testing?

The advantages of Automation Testing include faster execution, increased accuracy, reusability of test scripts, and the ability to perform tests across multiple platforms and browsers simultaneously. It is ideal for large projects and Continuous Integration.

27) What are the Disadvantages of Automation Testing?

The disadvantages include a high initial investment in tools and setup, a high maintenance cost for test scripts, limited suitability for User Experience or Exploratory Testing, and poor returns when the project is small with minimal regression needs. Accurate Software Testing documentation can mitigate some of these challenges by providing clear guidelines for maintenance.

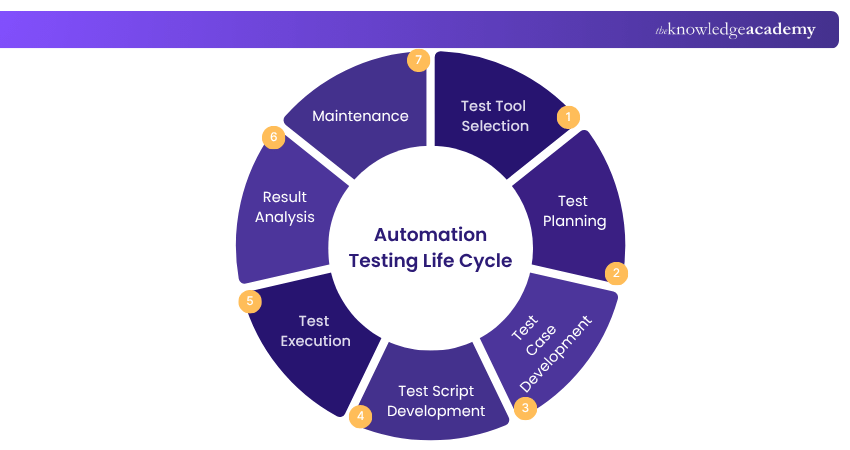

28) What is the Automation Testing life cycle?

The Automation Testing life cycle includes test tool selection, test planning, test case development, test script development, test execution, result analysis, and maintenance. Each phase ensures structured and efficient Automation Testing.

29) What is Test Management?

Test Management involves planning, executing, and monitoring the testing process to ensure quality and consistency. It includes organising test cases, managing resources, tracking defects, and reporting progress to ensure that testing aligns with project goals.

30) What is Big Bang approach?

The Big Bang approach is an Integration Testing method that combines every component or module of a system and tests them together in one go. It is straightforward, but it is risky because defects in individual modules are hard to isolate.

31) What is Top-Down approach?

The Top-Down approach is a testing strategy where testing starts from the topmost modules and works its way down. Higher-level modules are tested first. If needed, lower-level modules are simulated using stubs in the initial stages of testing.
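
As a rough sketch of how a stub supports Top-Down testing, the Python example below tests a higher-level module while a lower-level one is replaced by a stub. `CheckoutService` and `PaymentGatewayStub` are invented for this illustration and do not refer to any real library.

```python
class PaymentGatewayStub:
    """Stub standing in for a lower-level module that is not yet integrated."""

    def charge(self, amount: float) -> bool:
        # Always report success so the higher-level logic can be exercised.
        return True


class CheckoutService:
    """Higher-level module under test; it depends on a payment gateway."""

    def __init__(self, gateway):
        self.gateway = gateway

    def place_order(self, amount: float) -> str:
        if amount <= 0:
            return "rejected"
        return "confirmed" if self.gateway.charge(amount) else "payment_failed"


# The top-level module is tested first, with the stub in place of the real gateway.
service = CheckoutService(PaymentGatewayStub())
print(service.place_order(25.0))
print(service.place_order(0))
```

Once the real payment module is ready, it can replace the stub without changing the tests for `CheckoutService`.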

Intermediate Software Testing Interview Questions

32) What is the Difference Between Verification and Validation in Software Testing?

Verification asks “Are we building the product right?”: it checks that the product is being constructed correctly according to its specifications. Validation asks “Are we building the right product?”: it checks that the delivered output does indeed meet the needs of the user. Verification is more inwardly focused, while validation is more concerned with the user.

33) What is the Difference Between Smoke Testing and Sanity Testing?

Smoke Testing is a broad, preliminary test of the software to check whether the most critical functions are working. It acts as a health check of the application. Sanity Testing is a narrow, focused check conducted after minor changes or bug fixes are made to the code. It ensures that the specific issues that prompted the changes have been properly addressed.

34) What is the Difference Between Black Box Testing and White Box Testing?

Black Box Testing examines the functionality of software without any knowledge of its internal workings. White Box Testing, on the other hand, examines the internal code structure. White Box testers need programming knowledge, whereas Black Box testers do not.

35) What is Regression Testing?

Regression Testing involves re-running previously executed test cases to ensure that recent changes or bug fixes have not introduced new defects. It is aimed at maintaining the stability of the system as changes are introduced.

36) What is the Difference Between Retesting and Regression Testing?

Retesting involves testing specific test cases that failed during the previous execution to verify that the bugs have been fixed. Regression Testing, however, is done to ensure that the recent changes have not affected existing functionality.

37) What is the Difference Between Manual Testing and Automation Testing?

In Manual Testing, a person executes the test cases by hand without automation tools, whereas Automation Testing relies on scripts and tools to execute them. Manual Testing is slower and less repeatable, while Automation Testing speeds up execution and offers better repeatability.

38) What is grey box testing?

Grey Box Testing is a combination of both Black Box and White Box Testing. The testers are partially aware of the internal flow of the application and then design better test cases with that knowledge. It ensures that testing balances functional as well as structural evaluation.

39) What is the Difference Between Quality Assurance vs Quality Control in testing?

Quality Assurance (QA) focuses on improving the processes to deliver a quality product. It’s proactive and process oriented. Quality Control (QC) involves identifying defects in the final product and ensuring it meets the required quality standards. It’s reactive and product oriented.

40) What is the Difference Between Test Scripting and Test Automation?

Test scripting involves writing detailed, step-by-step instructions that testers manually follow. Test automation uses scripts or tools to automatically execute those instructions without human intervention, reducing manual effort and increasing efficiency.

41) What is the Difference Between Record and Playback, and Scripting in Automation Testing?

Record and playback captures user actions and replays them to verify the application. Scripting means writing custom test scripts for automatic execution. Record and playback is easier but less flexible, whereas scripting offers more control and customisation.

42) What are the Different Types of Frameworks used in Automation Testing?

Different types of Automation Testing frameworks include Modular Testing, Data-driven Testing, Keyword-driven Testing, Hybrid Testing, and Behaviour-driven Development (BDD) frameworks. Each framework offers a different approach to structuring and organising automated test scripts.

43) What is Continuous Integration in Automation Testing?

Continuous Integration (CI) is the practice of frequently integrating code changes into a shared repository, followed by Automated Testing to identify defects early in the development process. It helps ensure that the software is always in a testable state.

44) What is Agile Testing?

Agile Testing is a practice that follows the principles of Agile Development, where testing is integrated into every stage of the development process. It emphasises continuous testing, collaboration, and adapting to changing requirements, with a focus on delivering high-quality software quickly.

45) What are the key Differences Between Agile Testing and Traditional Testing?

In Traditional Testing, testing is usually carried out towards the end of development, the process is sequential, and roles are isolated. Agile Testing occurs throughout the development cycle and encourages collaboration among developers, testers, and stakeholders.

46) What is the Role of a Tester in Agile Development?

In Agile Development, the tester collaborates with both developers and stakeholders throughout the process. The role includes writing and executing test cases, participating in daily stand-up meetings, reviewing user stories, automating tests, and maintaining quality throughout the project.

47) What is a User Story in Agile Testing?

A user story is a simple description of a feature or function written from the perspective of the end-user. It outlines what the user wants to achieve with the software and serves as the basis for developing and testing specific features in Agile projects.

48) How do you ensure Test Coverage in Agile Testing?

Test coverage in Agile Testing is ensured by constantly reviewing user stories, writing test cases that comprehensively cover all acceptance criteria, automating regression tests, and regularly checking with the development team that all functionality is covered by tests.

49) What is the Importance of Test Automation in Agile Testing?

Test automation is at the heart of Agile Testing because it enables Continuous Integration and short release cycles. Automated tests give immediate feedback on code changes, help avoid breaking existing functionality, and make Regression Testing more efficient.

50) What is the Difference Between Agile Testing and Agile Development?

Agile Development focuses on the overall process of delivering software iteratively, while Agile Testing specifically deals with testing the software at each stage of the Agile lifecycle. Agile testers ensure that every iteration produces a quality product that meets user needs.

51) What are the Steps Involved in Conducting Performance Testing?

The steps involved in Performance Testing include identifying Performance Testing goals, creating test scenarios, configuring the test environment, executing tests, analysing results, and fine-tuning the system for better performance based on the test outcomes.

52) What are the Different Types of Performance Testing?

The different types of Performance Testing include Load Testing (testing the system under expected load), Stress Testing (testing beyond normal limits), Endurance Testing (testing over an extended period), and Spike Testing (testing with sudden increases in load).

53) What are the key Performance Metrics in Performance Testing?

Key performance metrics are system response time, throughput (the amount of data processed in a given time), CPU and memory utilisation, and error rates. These metrics help detect bottlenecks and confirm that the system can handle the expected workloads.

54) What are the Different Types of Security Testing?

Different types of Security Testing include vulnerability scanning, Penetration Testing, security auditing, Ethical Hacking, and risk assessment. Each type focuses on identifying weaknesses and potential threats in the system to safeguard it from attacks.

55) How do you Approach Security Testing?

This question evaluates your knowledge of Security Testing strategies.

Here's how you can answer this question:

“I would approach Security Testing by first identifying the critical assets and sensitive data in the system. Then, I would perform vulnerability assessments, penetration tests, and code reviews to identify potential threats. I would also validate authentication, encryption, and access control mechanisms.”

56) What are some common Security Testing tools?

Common Security Testing tools include OWASP ZAP, Burp Suite, Nessus, Nmap, and Metasploit. These tools help identify vulnerabilities, perform Penetration Testing, and evaluate the overall security posture of the system.

57) What are the key Differences Between API Testing and UI Testing?

API Testing focuses on testing the backend logic of an application by verifying the interactions between different software components. UI Testing, on the other hand, tests the graphical interface to ensure the user interface behaves as expected.

58) What tools can be used for API and Web Services Testing?

Common tools for API and Web Services Testing include Postman, SoapUI, Rest Assured, and JMeter. These tools help in sending requests to APIs, validating responses, and ensuring the API works correctly.

59) What are some common types of tests performed in API and Web Services Testing?

Common types of tests performed in API Testing include Functionality Testing, Reliability Testing, Performance Testing, Security Testing, and validation of data formats (e.g., JSON, XML). These tests ensure the API is robust and performs as expected.

60) How do you handle Authentication and Authorisation in API Testing?

This question examines your knowledge of security in API Testing.

Here's how you can answer this question:

“I would handle authentication by testing whether the API enforces proper authentication mechanisms like OAuth or API keys. For authorisation, I would test that users only have access to the data or functionality they are allowed, ensuring that unauthorised users cannot perform restricted actions.”

61) How do you handle Data-driven Testing in API Testing?

This question tests your understanding of Data-driven Testing.

Here's how you can answer this question:

“In data-driven API Testing, I would set up test cases with different input data to ensure that the API behaves correctly with various inputs. This approach helps test multiple scenarios by using parameterised data and ensures that the API works for different data sets.”

62) What is the Concept of Data-Driven Testing?

Data-driven Testing is a testing methodology where test data is separated from the test script. One script is run multiple times with different inputs. It improves test coverage and ensures that a system can accept a wide range of inputs.
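
A minimal Python sketch of this separation is shown below. The `login` function and the rows in `TEST_DATA` are hypothetical; in practice the data might be loaded from a CSV file or spreadsheet rather than defined inline.

```python
# Test data is kept apart from the test logic. These rows are illustrative only.
TEST_DATA = [
    # (username, password, expected_result)
    ("alice", "correct-horse", True),
    ("alice", "", False),
    ("", "correct-horse", False),
]


def login(username: str, password: str) -> bool:
    """Hypothetical function under test: both fields must be non-empty."""
    return bool(username) and bool(password)


def run_data_driven(rows):
    """Run the same check for every data row and collect any mismatches."""
    failures = []
    for username, password, expected in rows:
        actual = login(username, password)
        if actual != expected:
            failures.append((username, password, expected, actual))
    return failures


failures = run_data_driven(TEST_DATA)
print(f"{len(TEST_DATA)} rows run, {len(failures)} failures")
```

Adding a new scenario then means adding a row of data, not writing another test script.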

63) How does Agile Testing Address Changing Requirements?

Agile Testing embraces changing requirements by involving testers early in the development process and maintaining continuous communication with stakeholders. Testing is iterative, allowing for flexibility and quick adaptation to new or modified requirements.

64) How does Agile Testing Promote Collaboration and Communication?

Agile Testing promotes collaboration by involving the entire team in the testing process. Testers work closely with developers, product owners, and stakeholders to ensure that requirements are understood and tested thoroughly. Daily stand-ups and regular feedback loops ensure continuous communication.

Software Testing Interview Questions for Experienced

65) What is the difference between Positive Testing and Negative Testing?

Positive Testing involves testing the system by providing valid data to verify that the system behaves as expected. Negative Testing, on the other hand, involves inputting invalid or unexpected data to ensure the system handles errors gracefully without crashing.
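
The contrast can be illustrated with a short Python sketch. `parse_age` is a hypothetical function under test, and the accepted range of 0 to 150 is an assumption made for this example.

```python
def parse_age(text: str) -> int:
    """Hypothetical function under test: parse an age between 0 and 150."""
    age = int(text)  # raises ValueError for non-numeric input
    if not 0 <= age <= 150:
        raise ValueError(f"age out of range: {age}")
    return age


def rejects(text: str) -> bool:
    """Return True if parse_age rejects the input with a ValueError."""
    try:
        parse_age(text)
    except ValueError:
        return True
    return False


# Positive test: valid input produces the expected result.
assert parse_age("42") == 42

# Negative tests: invalid input is rejected cleanly instead of crashing.
assert rejects("abc")
assert rejects("-5")
assert rejects("999")
```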

66) What is System Testing?

System Testing is the process of testing the complete and integrated software application. It validates the system against its requirements to ensure that all components function correctly together. It includes functional and non-functional tests to ensure the system meets the specified criteria.

67) How do you prioritise Defects in Manual Testing?

This question evaluates your approach to handling defects in a testing process.

Here's how you can answer this question:

“I prioritise defects based on their severity and impact on the system. Critical defects that cause system crashes or prevent core functionalities from working are prioritised higher. I also consider business impact and the likelihood of the defect occurring to ensure that high-risk issues are addressed first.”
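
One possible way to express this ordering in code is sketched below in Python. The severity scale, the `likelihood` field, and the defect records are all assumptions made for illustration; real projects usually define their own scales in the defect tracking tool.

```python
# Lower rank means higher priority. The scale is an assumption for this sketch.
SEVERITY_RANK = {"critical": 0, "major": 1, "minor": 2}

# Hypothetical defect records.
defects = [
    {"id": "D-3", "severity": "minor", "likelihood": 0.9},
    {"id": "D-1", "severity": "critical", "likelihood": 0.2},
    {"id": "D-2", "severity": "major", "likelihood": 0.7},
    {"id": "D-4", "severity": "major", "likelihood": 0.1},
]


def prioritise(defects):
    """Order by severity first, then by likelihood of occurrence (highest first)."""
    return sorted(
        defects,
        key=lambda d: (SEVERITY_RANK[d["severity"]], -d["likelihood"]),
    )


ordered_ids = [d["id"] for d in prioritise(defects)]
print(ordered_ids)
```

Here the critical defect comes first even though it is the least likely to occur, and the two major defects are ordered by likelihood.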

68) How do you ensure Test Coverage in Manual Testing?

This question tests your ability to ensure comprehensive testing.

Here's how you can answer this question:

“To ensure test coverage, I start by thoroughly understanding the requirements and mapping test cases to each requirement. I also use traceability matrices to track whether all functionalities are covered.”
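
A traceability matrix can be as simple as a mapping from requirements to the test cases that cover them. The Python sketch below uses made-up requirement and test case IDs to show how uncovered requirements can be spotted.

```python
# Hypothetical requirement IDs and the test cases that claim to cover them.
requirements = ["REQ-1", "REQ-2", "REQ-3"]
test_cases = {
    "TC-01": ["REQ-1"],
    "TC-02": ["REQ-1", "REQ-2"],
}


def build_traceability_matrix(requirements, test_cases):
    """Map each requirement to the list of test cases that cover it."""
    matrix = {req: [] for req in requirements}
    for tc_id, covered in test_cases.items():
        for req in covered:
            if req in matrix:
                matrix[req].append(tc_id)
    return matrix


matrix = build_traceability_matrix(requirements, test_cases)
# A requirement with no linked test case is a coverage gap.
uncovered = [req for req, linked in matrix.items() if not linked]
print(matrix)
print("Uncovered requirements:", uncovered)
```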

69) How do you Track and Report Test Progress in Test Management?

This question assesses your understanding of reporting mechanisms in Test Management.

Here's how you can answer this question:

“I track test progress using tools like JIRA, TestRail, or Excel sheets to monitor executed, passed, failed, or blocked test cases. Additionally, I generate test progress reports that include key metrics such as pass/fail rates, defect status, test execution progress, and test coverage percentage. These reports are shared with stakeholders regularly.”
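
The metrics mentioned in this answer can be derived from a simple list of execution statuses. The Python sketch below assumes a hypothetical export with the status names `passed`, `failed`, `blocked`, and `not_run`; actual tools use their own status names and export formats.

```python
from collections import Counter

# Hypothetical execution statuses exported from a test management tool.
statuses = ["passed", "passed", "failed", "blocked", "passed", "not_run"]


def progress_summary(statuses):
    """Summarise execution progress and pass rate as percentages."""
    counts = Counter(statuses)
    total = len(statuses)
    executed = counts["passed"] + counts["failed"]
    return {
        "total": total,
        "executed_pct": round(100 * executed / total, 1) if total else 0.0,
        "pass_rate_pct": round(100 * counts["passed"] / executed, 1) if executed else 0.0,
        "blocked": counts["blocked"],
    }


summary = progress_summary(statuses)
print(summary)
```

In this sketch the pass rate is calculated against executed tests only, so blocked and not-run cases do not distort it. That is a design choice, not a universal convention.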

70) How do you Manage and Prioritise Defects in Test Management?

This question evaluates your approach to Defect Management.

Here's how you can answer this question:

“In Test Management, I manage defects by logging them in a defect tracking tool, assigning them to the relevant developers, and tracking their status. I prioritise defects based on severity, business impact, and risk. Critical defects are resolved first, followed by less severe ones.”

71) How do you Ensure Continuous Improvement in Test Management?

This question assesses your approach to improving the testing process.

Here's how you can answer this question:

“I ensure continuous improvement by regularly conducting retrospectives after test cycles to evaluate what worked and what didn’t. I analyse metrics like defect detection efficiency and test coverage to identify areas of improvement.”

72) What is the significance of performance tuning in Performance Testing?

This question tests your understanding of optimising system performance.

Here's how you can answer this question:

“Performance tuning is critical because it helps identify and resolve bottlenecks in the system that affect speed, scalability, and stability. By analysing performance metrics, such as response time and CPU/memory usage, I can adjust the system’s configuration to improve overall performance.”

73) How do you measure response time in Performance Testing?

Response time is measured by recording the time taken for the system to respond to a user’s request. This can be done using Performance Testing Tools like JMeter or LoadRunner, which track how long it takes for each request to be processed and a response to be returned.
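
Dedicated tools handle this at scale, but the underlying measurement can be sketched with the Python standard library. `handle_request` is a hypothetical stand-in for the system under test, and the 10 ms delay is simulated.

```python
import statistics
import time


def handle_request() -> str:
    """Hypothetical stand-in for the system under test."""
    time.sleep(0.01)  # simulate roughly 10 ms of work
    return "ok"


def measure_response_times(call, samples: int = 5) -> list:
    """Time each call with a high-resolution clock; durations are in seconds."""
    durations = []
    for _ in range(samples):
        start = time.perf_counter()
        call()
        durations.append(time.perf_counter() - start)
    return durations


durations = measure_response_times(handle_request)
print(f"mean: {statistics.mean(durations) * 1000:.1f} ms")
print(f"max:  {max(durations) * 1000:.1f} ms")
```

Taking several samples and reporting both the mean and the maximum helps reveal occasional slow responses that a single measurement would miss.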

74) What is the importance of secure data transmission in Security Testing?

Secure data transmission is crucial to protect sensitive information from being intercepted during transmission between client and server. Security Testing ensures that encryption protocols like SSL/TLS are properly implemented, and data is securely transmitted over the network, preventing unauthorised access.

75) How do you address security vulnerabilities identified in Security Testing?

This question assesses your approach to handling security issues.

Here's how you can answer this question:

“After identifying security vulnerabilities, I would classify them based on severity and urgency. Critical vulnerabilities would be addressed immediately, working with the development team to implement fixes. Regular Follow-up Testing, including penetration tests, would be conducted to ensure that the vulnerabilities are properly patched.”

76) What are some challenges in Performance Testing?

Challenges in Performance Testing include simulating real-world traffic accurately, creating a representative test environment, and identifying the root cause of performance issues in complex systems. Another challenge is analysing large amounts of performance data and separating performance bottlenecks from other system issues. Root cause analysis helps pinpoint specific problems, enabling more targeted and effective solutions.

77) How do you determine the appropriate load for Load Testing?

This question evaluates your understanding of Load Testing preparation.

Here's how you can answer this question:

“I determine the appropriate load by analysing the system’s expected user base and usage patterns. I consult with stakeholders to define acceptable performance thresholds and simulate load based on peak usage scenarios.”

78) What is the difference between Load Testing and Stress Testing?

Load Testing checks how the system performs under expected user loads, ensuring it functions properly during normal usage. Stress Testing pushes the system beyond its capacity to find the breaking point and evaluate how it handles extreme conditions, such as excessive traffic or resource constraints.

79) How do you handle error handling and Exception Testing in API Testing?

This question assesses your knowledge of testing for robustness in APIs.

Here's how you can answer this question:

“I handle error handling and Exception Testing in APIs by sending invalid or unexpected inputs to test how the API responds. This helps ensure that the API can gracefully handle errors, such as missing fields, incorrect data types, or authentication failures, and return appropriate error messages.”
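
The approach described in this answer can be sketched without a live API by testing a handler function directly. In the Python example below, `create_user` is a hypothetical handler that returns a status code and a body; the field name and status codes are assumptions for illustration.

```python
def create_user(payload: dict) -> tuple:
    """Hypothetical API handler returning (status_code, body)."""
    if "email" not in payload:
        return 400, {"error": "email is required"}
    if not isinstance(payload["email"], str):
        return 400, {"error": "email must be a string"}
    return 201, {"email": payload["email"]}


# Each case pairs a request payload with the status code it should produce.
cases = [
    ({}, 400),                          # missing field
    ({"email": 123}, 400),              # incorrect data type
    ({"email": "a@example.com"}, 201),  # valid request
]

for payload, expected_status in cases:
    status, body = create_user(payload)
    assert status == expected_status, (payload, status)
    if status >= 400:
        # Error responses should carry a message the client can act on.
        assert "error" in body
```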

80) What are some challenges in Security Testing?

Challenges in Security Testing include identifying all potential vulnerabilities, keeping up with evolving security threats, testing across different environments, and ensuring compliance with security regulations. Additionally, Security Testing often requires specialised tools and expertise, making it more resource-intensive.

81) How do you ensure secure authentication and authorisation in Security Testing?

This question tests your approach to ensuring security in user access control.

Here's how you can answer this question:

“I ensure secure authentication by verifying that users are required to provide valid credentials, such as usernames, passwords, or Multi-factor Authentication (MFA), before accessing the system. For authorisation, I test to ensure that users have access only to the resources and actions that they are permitted to use.”
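
The authorisation part of this answer can be illustrated with a small Python sketch. The roles, actions, and `is_authorised` function are hypothetical and stand in for the access control logic of the real system.

```python
# Hypothetical role-to-permission mapping for the system under test.
PERMISSIONS = {
    "admin": {"read_report", "delete_user"},
    "viewer": {"read_report"},
}


def is_authorised(role, action: str) -> bool:
    """Return True only if the role exists and grants the action."""
    return action in PERMISSIONS.get(role, set())


# Permitted actions pass, restricted ones are denied.
assert is_authorised("admin", "delete_user")
assert is_authorised("viewer", "read_report")
assert not is_authorised("viewer", "delete_user")
# Unauthenticated or unknown callers get no access.
assert not is_authorised(None, "read_report")
```

Testing the denied cases is as important as testing the permitted ones, since access control defects usually show up as actions that should have been refused.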

82) What are the common security vulnerabilities that Security Testing helps to uncover?

Security Testing uncovers common vulnerabilities such as SQL injection, Cross-site Scripting (XSS), Cross-site Request Forgery (CSRF), insecure data storage, weak authentication mechanisms, and improper access controls. Identifying and mitigating these vulnerabilities helps secure the system from attacks.

83) What are the key objectives of Security Testing?

The key objectives of Security Testing are to identify vulnerabilities that could be exploited by attackers, ensure the confidentiality, integrity, and availability of data, and verify that security controls, such as encryption and access control mechanisms, are functioning as intended to protect sensitive information.

84) How do you ensure test coverage in Test Management?

This question assesses your ability to manage comprehensive testing.

Here's how you can answer this question:

“I ensure test coverage in Test Management by mapping test cases to the software requirements and using a traceability matrix to ensure that all functionalities are covered. I also monitor test execution progress and conduct Exploratory Testing to cover any edge cases that might be missed.”
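The traceability matrix mentioned in this answer can be sketched in a few lines of Python. The requirement IDs and test-case IDs below are invented for illustration; in practice they would come from a requirements or test management tool.

```python
# Hypothetical requirement IDs and test-case mappings, for illustration only.
requirements = ["REQ-1", "REQ-2", "REQ-3", "REQ-4"]
test_cases = {
    "TC-01": ["REQ-1"],
    "TC-02": ["REQ-1", "REQ-2"],
    "TC-03": ["REQ-4"],
}


def build_traceability(requirements, test_cases):
    """Map each requirement to the test cases that cover it."""
    matrix = {req: [] for req in requirements}
    for tc_id, covered in test_cases.items():
        for req in covered:
            if req in matrix:
                matrix[req].append(tc_id)
    return matrix


matrix = build_traceability(requirements, test_cases)

# Requirements with no linked test case are the coverage gaps.
uncovered = [req for req, tcs in matrix.items() if not tcs]
coverage = 100 * (len(requirements) - len(uncovered)) / len(requirements)
```

Here `uncovered` lists the requirements that still need test cases, and `coverage` gives the percentage of requirements linked to at least one test.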

85) How do you prioritise test cases in Test Management?

This question evaluates your approach to organising test execution.

Here's how you can answer this question:

“I prioritise test cases based on their criticality, risk, and the impact on business functionality. High-priority test cases that verify core functionalities are executed first, followed by medium and lower-priority cases that test less critical or edge features.”
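One simple way to turn this into an ordering is a risk score. The sketch below assumes a likelihood-times-impact score on 1 to 5 scales, which is a common convention rather than the only option; the class name `ScoredCase` and the sample cases are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ScoredCase:
    name: str
    likelihood: int  # 1 (rarely fails) to 5 (often fails)
    impact: int      # 1 (minor) to 5 (business-critical)

    @property
    def risk(self):
        # Assumed scoring model: risk = likelihood x impact.
        return self.likelihood * self.impact


def prioritise(cases):
    """Return cases ordered from highest to lowest risk score."""
    return sorted(cases, key=lambda c: c.risk, reverse=True)


cases = [
    ScoredCase("footer links", 1, 1),
    ScoredCase("login", 3, 5),
    ScoredCase("avatar upload", 2, 2),
    ScoredCase("checkout payment", 4, 5),
]
execution_order = [c.name for c in prioritise(cases)]
```

The resulting order runs the core business flows first and leaves low-impact cosmetic checks until last.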

86) How do you ensure effective communication within the Test Management process?

This question examines your approach to collaboration and communication.

Here's how you can answer this question:

“I ensure effective communication by maintaining regular updates through status meetings, sharing test progress reports with stakeholders, and using collaborative tools such as Jira for defect tracking and Slack for discussion. Clear documentation and regular feedback loops also help keep everyone on the same page.”

87) What are the essential components of a test plan?

A test plan typically includes the test strategy, test objectives, scope, resources, schedule, test deliverables, entry and exit criteria, risk assessment, and the tools and environment required for testing. It outlines how testing will be carried out and helps guide the testing team through the process.

88) How do you measure response time in Performance Testing?

This question assesses your understanding of measuring system performance.

Here's how you can answer this question:

“I measure response time using Performance Testing tools like JMeter or LoadRunner, which track the time taken for each request sent to the system to receive a response. This metric helps assess how quickly the system responds under different load conditions and scenarios.”
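Tools like JMeter and LoadRunner do this at scale, but the underlying measurement can be shown with a short Python sketch. Here `fake_request` is a stand-in that simply sleeps for a couple of milliseconds; in a real test it would be replaced by a call to the system under test. The 95th percentile uses the nearest-rank method, which is one of several accepted definitions.

```python
import math
import statistics
import time


def measure(request_fn, runs=20):
    """Call request_fn repeatedly and return elapsed times in milliseconds."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        request_fn()
        samples.append((time.perf_counter() - start) * 1000)
    return samples


def summarise(samples):
    """Summarise response times: average, median, p95 (nearest rank), and max."""
    ordered = sorted(samples)
    p95_index = max(0, math.ceil(0.95 * len(ordered)) - 1)
    return {
        "avg": statistics.mean(ordered),
        "median": statistics.median(ordered),
        "p95": ordered[p95_index],
        "max": ordered[-1],
    }


def fake_request():
    """Stand-in for a real request to the system under test."""
    time.sleep(0.002)


stats = summarise(measure(fake_request))
```

Reporting a percentile alongside the average matters because a few slow responses can hide behind a healthy-looking mean.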

89) How do you ensure Continuous Improvement in Test Management?

This question evaluates your approach to refining testing processes.

Here's how you can answer this question:

“I ensure continuous improvement by analysing test metrics, gathering feedback from the testing team and stakeholders, and conducting retrospectives after each test cycle. I also implement lessons learned from past projects, optimise test automation, and regularly update test plans and strategies to align with evolving project needs.”

90) How do you test error and exception handling in API Testing?

This question assesses your understanding of handling errors in APIs.

Here's how you can answer this question:

“I test error handling in APIs by sending invalid or unexpected inputs and checking how the API reacts in each scenario. This includes missing parameters, incorrect data formats, and unauthorised or malformed requests, to confirm that the API handles errors gracefully and returns meaningful error messages.”
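A table of negative test cases is a compact way to express this approach. The sketch below uses a hypothetical `create_user` handler standing in for a `POST /users` endpoint; the status codes it returns (400 and 422) are assumptions about how such an API might behave, not a rule every API follows.

```python
def create_user(payload):
    """Hypothetical handler standing in for a POST /users endpoint."""
    if not isinstance(payload, dict):
        return 400, {"error": "body must be a JSON object"}
    if "email" not in payload:
        return 400, {"error": "missing field: email"}
    if not isinstance(payload["email"], str) or "@" not in payload["email"]:
        return 422, {"error": "invalid email"}
    return 201, {"email": payload["email"]}


# Each negative case pairs an invalid input with the status code we expect.
negative_cases = [
    (None, 400),                       # no body at all
    ({}, 400),                         # missing required field
    ({"email": 42}, 422),              # wrong data type
    ({"email": "not-an-email"}, 422),  # wrong format
]

results = []
for payload, expected_status in negative_cases:
    status, body = create_user(payload)
    # A graceful failure has the expected status and a readable error message.
    results.append(status == expected_status and "error" in body)
```

Keeping the cases in a list makes it easy to add new invalid inputs without changing the checking logic.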

91) What is Performance Testing?

Performance Testing evaluates how a system performs under different load conditions. It assesses speed, scalability, stability, and resource usage to ensure the software can handle the expected number of users, transactions, or data volume without compromising functionality.

92) What are some common Performance Testing tools?

Common Performance Testing tools include JMeter, LoadRunner, ApacheBench (ab), Gatling, and NeoLoad. These tools help simulate user loads, measure system performance, and identify bottlenecks in the application’s performance.

93) What is API Testing?

API Testing involves testing the backend logic and functionality of an application by sending requests to its API endpoints and verifying the responses. It ensures that the API behaves as expected, handles requests correctly, and interacts with other components seamlessly.

94) How do you handle request and response validation in API Testing?

This question assesses your approach to API validation.

Here's how you can answer this question:

“I handle request and response validation by verifying that the API responds with the correct status codes, headers, and body content. I also check for the proper handling of request parameters and ensure the response data is correct, properly formatted (JSON, XML), and meets the expected requirements.”
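The checks described in this answer can be sketched as a small Python validator. The expected fields (`id` as an integer and `name` as a string) are a hypothetical response schema chosen for the example; a real test would take them from the API specification.

```python
import json


def validate_response(status, headers, body, expected_status=200):
    """Return a list of validation problems (an empty list means a pass)."""
    problems = []
    if status != expected_status:
        problems.append(f"status {status}, expected {expected_status}")
    if not headers.get("Content-Type", "").startswith("application/json"):
        problems.append("Content-Type is not application/json")
    try:
        data = json.loads(body)
    except json.JSONDecodeError:
        return problems + ["body is not valid JSON"]
    if not isinstance(data, dict):
        return problems + ["body is not a JSON object"]
    # Hypothetical schema: field name -> expected Python type.
    for field, expected_type in {"id": int, "name": str}.items():
        if not isinstance(data.get(field), expected_type):
            problems.append(f"field '{field}' missing or not {expected_type.__name__}")
    return problems


good = validate_response(
    200, {"Content-Type": "application/json; charset=utf-8"}, '{"id": 1, "name": "Ada"}'
)
bad = validate_response(500, {}, "oops")
```

Returning every problem rather than stopping at the first one gives a fuller defect report from a single test run.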

95) How do you handle multiple requests and unnecessary data in REST API Testing?

This question assesses your understanding of optimising REST API performance and efficiency.

Here's how you can answer this question:

“To handle multiple requests efficiently in REST API Testing, I verify that the API supports pagination, filtering, and sorting so that it returns only the data a client needs. I also check whether the API supports batch requests where possible, which minimises the number of network calls and improves performance.”
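As an illustration of the pagination check, the sketch below simulates a paginated endpoint and a test client that walks every page. The function `list_items` and its response fields (`data`, `page`, `total`, `has_next`) are hypothetical; real APIs use a variety of paging conventions.

```python
def list_items(items, page=1, page_size=2):
    """Hypothetical paginated endpoint: returns one page plus paging metadata."""
    start = (page - 1) * page_size
    return {
        "data": items[start:start + page_size],
        "page": page,
        "total": len(items),
        "has_next": start + page_size < len(items),
    }


def fetch_all(items, page_size=2):
    """Walk every page, as a test client would, and collect the results."""
    collected, page = [], 1
    while True:
        response = list_items(items, page, page_size)
        # No page should ever return more records than the requested size.
        assert len(response["data"]) <= page_size
        collected.extend(response["data"])
        if not response["has_next"]:
            return collected
        page += 1


catalogue = ["a", "b", "c", "d", "e"]
first_page = list_items(catalogue)
everything = fetch_all(catalogue)
```

The test confirms two things at once: each response stays within the page size, and paging through the whole set loses or duplicates nothing.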

Conclusion

In conclusion, mastering the Software Testing interview questions outlined in this blog will give you the confidence and preparation needed to succeed in your next interview. By understanding the key concepts, you’ll be ready to showcase your expertise and passion, impress employers, and advance your career.
