Imagine reading a book where all the words are scattered randomly, with no sense of order. You’d know the vocabulary, but the story would make no sense. That’s exactly the problem transformers face without Positional Encoding: they can understand words, but not the sequence that gives them meaning. Positional Encoding is the secret sauce that brings order to chaos, helping transformers read text like a story rather than a jumble of words.

This blog explores what positional encoding is, why it matters, its types, how it works, key traits, and a comparison of absolute vs relative methods.

Table of Contents

1) What is Positional Encoding?

2) Why are Positional Encodings Important?

3) Types of Positional Encodings

4) How Does Positional Encoding Work?

5) Key Characteristics of Effective Positional Encodings

6) Comparing Absolute and Relative Positional Encodings

7) The Future of Positional Encoding

8) Conclusion

What is Positional Encoding?

Positional Encoding is a technique used in transformers to inject information about the position of each token in a sequence. Unlike traditional models such as RNNs and LSTMs, which process inputs one step at a time, transformers process all tokens in parallel and therefore have no built-in notion of order. To preserve the sequence order, a position-dependent signal is combined with the input embeddings, most commonly by adding it to them. This allows the model to tell where each token sits and how far apart any two tokens are.

Why are Positional Encodings Important?

Positional Encodings are important in transformer models because they provide information about the order of tokens in a sequence. The points below explain their significance in more detail.

1) Enhancing Generalisation

By encoding positional information, models can generalise better to unseen sequences. The model can recognise patterns in sequences of varying lengths and adapt to longer or shorter inputs, which improves its ability to handle a wide variety of tasks.

2) Preserving Sequence Order

Since transformers do not have any inherent understanding of token order, Positional Encodings preserve this crucial information. This ensures that the model respects the order of tokens, which is critical in language, where sentence structure and grammar depend on word order.

3) Maintaining Contextual Information

Positional Encodings help models understand the relative context of words within a sequence. For example, the word "bank" can mean a financial institution or the side of a river, depending on the words around it. Positional Encodings tell the model which words are close to "bank" and in what order they appear, which helps it distinguish between such meanings.

4) Supporting Sequential Prediction

By giving the model a sense of position, Positional Encodings let it learn the order in which things occur. This improves its ability to predict future tokens or outcomes in new sequences.

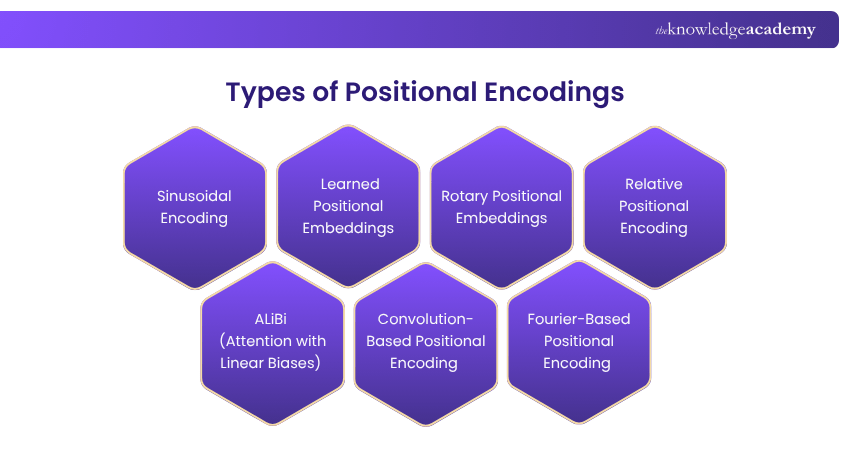

Types of Positional Encodings

There are various methods to encode position in a sequence. Each offers different trade-offs in terms of complexity, expressiveness and performance. Let’s explore the most common types in detail:

1) Sinusoidal Encoding

Sinusoidal Positional Encoding uses sine and cosine functions of different frequencies to generate a continuous, periodic encoding for each position in the sequence. Because the encoding is a fixed formula, it is deterministic, adds no trainable parameters, and can be computed for positions beyond any seen during training.
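A minimal sketch of sinusoidal encoding is shown below. It assumes the formulation popularised by the original Transformer paper, with a base of 10000 and interleaved sine and cosine values; the function name and the small `d_model` are chosen purely for illustration.

```python
import math

def sinusoidal_encoding(position, d_model, base=10000.0):
    """Return the d_model-dimensional sinusoidal encoding of one position."""
    if d_model % 2 != 0:
        raise ValueError("d_model must be even")
    vec = []
    for i in range(d_model // 2):
        # Each sine/cosine pair has its own frequency; pairs with a
        # larger i oscillate more slowly as the position increases.
        angle = position / (base ** (2 * i / d_model))
        vec.append(math.sin(angle))
        vec.append(math.cos(angle))
    return vec

pe0 = sinusoidal_encoding(0, 8)        # [0.0, 1.0, 0.0, 1.0, 0.0, 1.0, 0.0, 1.0]
pe5 = sinusoidal_encoding(5, 8)
pe5000 = sinusoidal_encoding(5000, 8)  # still well defined for a very large position
```

No training is involved: calling the function twice with the same position always returns the same vector, and every value stays between -1 and 1.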

2) Learned Positional Embeddings

In this approach, Positional Encodings are treated as learnable parameters: the model learns a vector for each position during training. This can lead to more flexible and potentially more expressive encodings, but the model has no vector for positions beyond the maximum length it was trained with.
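The idea can be sketched as a simple lookup table. The class below is a hypothetical helper, not any library's API: the values are only randomly initialised, and the gradient updates a real model would apply during training are left out.

```python
import random

class LearnedPositionalEmbedding:
    """A lookup table holding one trainable vector per position (illustrative only)."""

    def __init__(self, max_len, d_model, seed=0):
        rng = random.Random(seed)
        # Random starting values; training would adjust these by gradient descent.
        self.table = [[rng.gauss(0.0, 0.02) for _ in range(d_model)]
                      for _ in range(max_len)]

    def __call__(self, position):
        if not 0 <= position < len(self.table):
            raise IndexError(f"position {position} has no learned vector")
        return self.table[position]

pos_emb = LearnedPositionalEmbedding(max_len=16, d_model=4)
vec3 = pos_emb(3)   # the vector the model would add to the token at position 3
```

Asking for `pos_emb(16)` raises an `IndexError`, which illustrates the usual limitation of this approach: the table has a fixed size chosen before training.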

3) Rotary Positional Embeddings (RoPE)

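RoPE differs from additive Positional Encodings: instead of adding a vector to the embeddings, it rotates the query and key vectors by an angle proportional to each token’s position. As a result, the attention score between two tokens depends on their relative offset. This helps maintain consistency when handling long-range dependencies, making it useful for tasks that require long-term context.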
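The rotation can be illustrated with a small sketch. It assumes that consecutive (even, odd) dimensions are paired and that the same base of 10000 is used as in sinusoidal encoding; real implementations differ in how they pair dimensions, and the query and key values here are made up.

```python
import math

def rope_rotate(vec, position, base=10000.0):
    """Rotate each consecutive (even, odd) pair of vec by a position-dependent angle."""
    d = len(vec)
    if d % 2 != 0:
        raise ValueError("vector length must be even")
    out = []
    for i in range(d // 2):
        theta = position / (base ** (2 * i / d))
        x, y = vec[2 * i], vec[2 * i + 1]
        # Standard 2-D rotation of the pair (x, y) by angle theta.
        out.append(x * math.cos(theta) - y * math.sin(theta))
        out.append(x * math.sin(theta) + y * math.cos(theta))
    return out

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

q = [0.3, -1.2, 0.8, 0.5]   # toy query vector
k = [1.0, 0.4, -0.7, 0.9]   # toy key vector

# Both pairs of positions are 3 apart, so the two scores agree
# (up to floating-point rounding) even though the absolute positions differ.
score_near_start = dot(rope_rotate(q, 2), rope_rotate(k, 5))
score_far_along = dot(rope_rotate(q, 102), rope_rotate(k, 105))
```

Changing the offset, for example to positions 2 and 6, gives a different score, which is how relative position enters the attention computation in this sketch.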

4) Relative Positional Encoding

Relative Positional Encoding encodes the distance between pairs of tokens rather than their absolute positions in the sequence. This can be more efficient and effective for certain models, particularly when sequences are very long.
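As a rough illustration, the sketch below builds the matrix of pairwise offsets that a relative scheme works from. Clipping the offsets to a maximum distance is one common variant, assumed here; the function name and the shift to non-negative indices are illustrative choices.

```python
def relative_position_ids(seq_len, max_distance):
    """Entry [i][j] is the offset j - i, clipped to +/- max_distance and shifted to be >= 0."""
    ids = []
    for i in range(seq_len):
        row = []
        for j in range(seq_len):
            offset = max(-max_distance, min(max_distance, j - i))
            # Shifting makes the value usable as an index into a table of learned biases.
            row.append(offset + max_distance)
        ids.append(row)
    return ids

ids = relative_position_ids(seq_len=5, max_distance=2)
# ids[0] == [2, 3, 4, 4, 4]
# ids[4] == [0, 0, 0, 1, 2]
```

However long the sequence is, only `2 * max_distance + 1` distinct ids ever appear, which is one reason relative schemes can cope with long inputs.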

5) ALiBi (Attention with Linear Biases)

ALiBi adds a bias to the attention scores that grows linearly with the distance between tokens, so that distant tokens are penalised more than nearby ones. This encodes relative position without adding any positional embeddings to the input, which keeps the extra computation small.
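A minimal sketch of the bias is given below. It assumes causal attention (each token attends only to itself and earlier tokens) and a number of heads that is a power of two, in which case the per-head slopes form a geometric sequence; the function names are illustrative.

```python
def alibi_slopes(num_heads):
    """Per-head slopes as a geometric sequence; assumes num_heads is a power of two."""
    return [2 ** (-8 * (h + 1) / num_heads) for h in range(num_heads)]

def alibi_bias(seq_len, slope):
    """Bias added to attention scores: -slope * distance, with future positions masked."""
    return [[-slope * (i - j) if j <= i else float("-inf")
             for j in range(seq_len)]
            for i in range(seq_len)]

slopes = alibi_slopes(8)          # 1/2, 1/4, ..., 1/256
bias = alibi_bias(4, slopes[0])
# The last row penalises the most distant token most strongly:
# bias[3] == [-1.5, -1.0, -0.5, 0.0]
```

Each head uses a different slope, so some heads focus on nearby tokens while others remain sensitive to tokens further away.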

6) Convolution-based Positional Encoding

In this method, convolutional layers are used to encode the position of tokens. Convolution-based encoding can capture local spatial relationships in data, which may be beneficial for tasks like image processing or structured data analysis.

7) Fourier-based Positional Encoding

Fourier-based Positional Encoding represents positions using Fourier features, that is, sine and cosine components at a range of frequencies. It offers benefits for modelling periodic data and can be efficient when handling long-range dependencies, making it suitable for tasks that require global context.

How Does Positional Encoding Work?

In its most common form, Positional Encoding works by adding a vector to each token embedding. This vector represents the position of the token in the sequence and can be generated using sinusoidal functions, learned embeddings, or other methods depending on the encoding strategy.

Once added to the embeddings, these position-aware vectors allow the model to process the whole sequence in parallel while retaining information about the order of tokens. This mechanism is crucial for tasks where order matters, such as natural language understanding or time-series analysis.
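The addition step can be sketched as follows. The token embeddings are toy values invented for illustration, and a sinusoidal position vector is assumed, although any of the schemes above could be swapped in.

```python
import math

def position_vector(position, d_model):
    """Sinusoidal position vector; one possible choice of encoding."""
    vec = []
    for i in range(0, d_model, 2):
        angle = position / (10000 ** (i / d_model))
        vec += [math.sin(angle), math.cos(angle)]
    return vec

# Toy 4-dimensional token embeddings, made up for this example.
EMBEDDINGS = {
    "dog":   [0.9, 0.1, 0.0, 0.3],
    "bites": [0.2, 0.8, 0.5, 0.1],
    "man":   [0.7, 0.2, 0.1, 0.6],
}

def encode(tokens, d_model=4):
    """Add each token's position vector to its embedding, element by element."""
    return [[e + p for e, p in zip(EMBEDDINGS[tok], position_vector(pos, d_model))]
            for pos, tok in enumerate(tokens)]

a = encode(["dog", "bites", "man"])
b = encode(["man", "bites", "dog"])
```

The two sentences contain the same words, yet "dog" at position 0 in `a` and "dog" at position 2 in `b` end up as different vectors, while "bites" at position 1 is identical in both. That difference is what lets the model tell the two word orders apart.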

Key Characteristics of Effective Positional Encodings

An effective Positional Encoding should have several important characteristics to ensure the model can learn useful patterns from the input data. The key characteristics are given below:

1) Unique Representation for Each Position

Each position in the sequence should have a distinct encoding so that the model can differentiate between positions accurately. This uniqueness allows the model to identify relationships between tokens in different parts of the sequence.

2) Maintains Linear Positional Relationships

An effective Positional Encoding should make the encoding of one position a simple, ideally linear, function of the encoding of another position a fixed offset away. This ensures that relative positions are meaningful and easy for the model to learn, which is crucial for understanding the structure of sequences, particularly in language tasks.
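Sinusoidal encoding is a useful worked example of this property. Assuming a single sine and cosine pair with frequency $\omega$ (a symbol introduced here for illustration), the angle-addition identities give the encoding at position $pos + k$ as a linear transformation of the encoding at position $pos$:

```latex
\begin{pmatrix} \sin\big(\omega (pos + k)\big) \\ \cos\big(\omega (pos + k)\big) \end{pmatrix}
=
\begin{pmatrix} \cos(\omega k) & \sin(\omega k) \\ -\sin(\omega k) & \cos(\omega k) \end{pmatrix}
\begin{pmatrix} \sin(\omega\, pos) \\ \cos(\omega\, pos) \end{pmatrix}
```

The matrix depends only on the offset $k$, not on $pos$, so moving $k$ steps along the sequence corresponds to the same linear map wherever in the sequence it starts.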

3) Supports Generalisation to Longer Sequences

Good Positional Encodings should generalise beyond the sequence lengths seen in the training data. This allows the model to handle sequences of varying lengths without losing performance.

4) Deterministic or Learnable by the Model

Positional Encodings can be deterministic, as in the case of sinusoidal encoding, which produces the same fixed values every time. They can also be learnable, as in the case of learned embeddings, which allows the model to adapt to the data and optimise the encoding for better performance.

5) Adaptable to Multi-Dimensional Structures

Positional Encoding should be flexible enough to work with multi-dimensional data such as images or videos, where the concept of position extends beyond one-dimensional sequences.

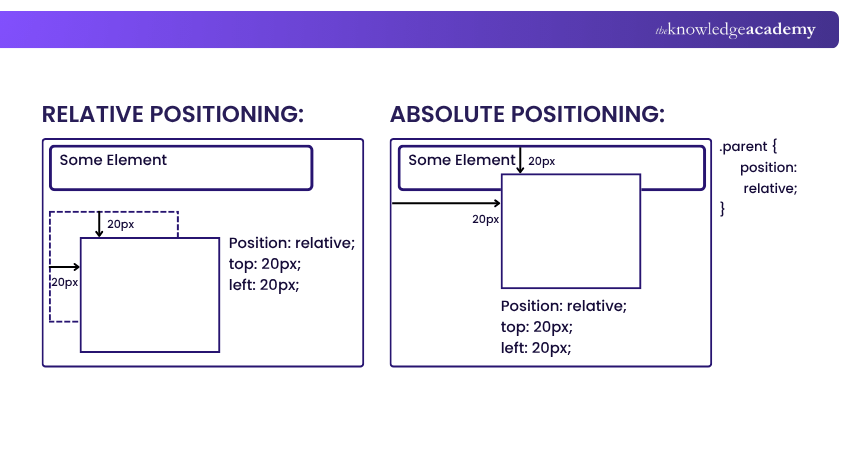

Comparing Absolute and Relative Positional Encodings

Absolute Positional Encodings assign a unique vector to each position in the sequence. Relative Positional Encodings, on the other hand, focus on the relationship between tokens, such as the distance between them.

Absolute encodings are often easier to implement. However, they can struggle to generalise to sequences of different lengths. Relative Positional Encodings can be more efficient in capturing relationships between tokens, particularly in longer sequences.

The Future of Positional Encoding

The future of Positional Encoding lies in improving flexibility and scalability for longer sequences and multi-modal tasks. As models like transformers continue to evolve, there will be a greater emphasis on adaptive, context-aware Positional Encoding methods that can handle diverse data types more efficiently.

Newer designs are exploring more mathematically principled formulations. For example, Hyperbolic Rotary Positional Encoding (HoPE) uses hyperbolic geometry to address limitations in existing methods like the oscillatory behaviour in standard rotary PEs. On top of that, research into hybrid approaches will continue.

Conclusion

Positional Encoding is vital in the architecture of modern transformer models because it lets them understand the order of tokens in a sequence. With various methods to choose from, understanding the strengths and weaknesses of each type of encoding helps in selecting the best approach for different tasks.

Frequently Asked Questions

Why are Sine and Cosine Functions Used in Positional Encoding?

Sine and cosine functions are used in Positional Encoding because they provide a continuous and periodic representation of position. This periodicity allows the model to learn relationships between tokens at different distances, while the distinct frequencies help represent a range of positional offsets.

What is the Difference Between Embedding and Positional Encoding?

Embedding represents the meaning of tokens by mapping them into dense numerical vectors that capture semantic relationships. Words with similar meanings have vectors placed closer together in this space. Positional Encoding, by contrast, provides information about the order of tokens in a sequence.

Lily Turner is a data science professional with over 10 years of experience in artificial intelligence, machine learning, and big data analytics. Her work bridges academic research and industry innovation, with a focus on solving real-world problems using data-driven approaches. Lily’s content empowers aspiring data scientists to build practical, scalable models using the latest tools and techniques.
