In today's data-driven world, organisations collect information from various places, such as apps, devices, and social media. However, raw data is of little use until it is collected, organised, and made easily accessible for analysis. That’s where Data Ingestion comes in: it is a key part of managing data.

This blog will discuss the basics of Data Ingestion, covering the different methods, tools, and challenges involved so you can grasp this critical step in the data workflow. So, let’s dive into it without any further ado.

Table of Contents

1) What is Data Ingestion?

2) Why is Data Ingestion Important?

3) Different Types of Data Ingestion

4) Advantages of Data Ingestion

5) The Data Ingestion Process

6) Tools for Data Ingestion

7) Difference Between Data Ingestion and ETL

8) Difference Between Data Ingestion and Data Integration

9) Data Ingestion Framework

10) Data Ingestion Best Practices

11) Conclusion

What is Data Ingestion?

Data Ingestion refers to the process of gathering, importing, and transferring data from various sources into a storage or computing system. It serves as the entry point into the data ecosystem, enabling subsequent analysis, reporting, and decision-making.

As a foundational step in data workflows, Data Ingestion ensures data accessibility and usability. Its methods vary based on processing needs, including real-time streaming, batch processing, and hybrid approaches like Lambda architectures. Each type caters to specific requirements, setting the stage for effective data utilisation.

Why is Data Ingestion Important?

Data Ingestion is the foundational step in processing and deriving value from the vast data businesses collect. A well-executed ingestion process ensures data accuracy and reliability, empowering data teams to deliver impactful analytics. Here's why Data Ingestion is indispensable:

1) Adapting to a Dynamic Data Landscape

Modern businesses rely on diverse data sources with unique formats and structures. A robust ingestion process integrates these sources, providing a complete view of operations, customers, and market trends. It also adapts to new data sources and increasing data volumes, ensuring scalability and resilience.

2) Enabling Advanced Analytics

Effective Data Ingestion supports collecting and preparing large datasets for detailed analysis. This capability transforms data into actionable insights, driving problem-solving and informed decision-making.

3) Enhancing Data Quality

The ingestion process incorporates validation, cleansing, and transformation to ensure consistent and accurate data. Standardisation, normalisation, and enrichment improve usability, adding valuable context and depth for analytics.

Different Types of Data Ingestion

Data Ingestion comes in various forms, each tailored to different data processing needs. Understanding these types is crucial for choosing the appropriate approach for specific use cases. Here, we delve into three primary types of Data Ingestion:

1) Real-time Data Ingestion

Real-time Data Ingestion processes data as it arrives, enabling immediate analysis and decision-making. In this approach, data is collected and imported in real time, making it best suited to applications that require up-to-the-moment insights. For instance, stock market data, social media feeds, and Internet of Things (IoT) sensor readings benefit significantly from real-time Data Ingestion. It centres on capturing data at the moment it is created, allowing organisations to respond swiftly to changing conditions.

2) Batch-based Data Ingestion

In contrast to Real-time Data Ingestion, Batch-based Data Ingestion collects and transfers data in predefined sets or batches. This approach is beneficial when real-time analysis isn't essential or when handling historical data. It's generally used for periodic data updates such as daily sales reports or monthly financial summaries. Batch-based Data Ingestion is a cost-effective and efficient way to handle large volumes of data without needing real-time processing.

3) Lambda Architecture-based Data Ingestion

The Lambda architecture combines real-time and batch processing, allowing businesses to leverage both approaches based on specific requirements. It offers the advantages of immediate data processing and the ability to store and analyse historical data. This is especially valuable for applications with varying data processing needs, ensuring flexibility and efficiency.

Each type of Data Ingestion has its unique characteristics and benefits, making them suitable for different use cases. Understanding these distinctions empowers organisations to design data workflows that align with their specific data processing goals.
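To make the relationship between the three types more concrete, here is a minimal sketch of a Lambda-style setup in plain Python. The event shape, the names `batch_view`, `SpeedLayer`, and `serving_view`, and the in-memory lists are all assumptions made for illustration; a production system would typically use a batch engine and a streaming platform instead.

```python
from collections import Counter

# Hypothetical events: (timestamp, product, quantity). Illustrative data only.
historical_events = [
    (1, "widget", 2),
    (2, "gadget", 1),
    (3, "widget", 5),
]
recent_events = [
    (4, "widget", 1),
    (5, "gizmo", 3),
]


def batch_view(events):
    """Batch layer: recompute totals from the full historical set in one pass."""
    totals = Counter()
    for _, product, qty in events:
        totals[product] += qty
    return totals


class SpeedLayer:
    """Speed layer: keep running totals that are updated one event at a time."""

    def __init__(self):
        self.totals = Counter()

    def ingest(self, event):
        _, product, qty = event
        self.totals[product] += qty


def serving_view(batch_totals, speed_totals):
    """Serving layer: answer queries by merging the batch and speed views."""
    return batch_totals + speed_totals


speed = SpeedLayer()
for event in recent_events:  # stands in for events arriving on a stream
    speed.ingest(event)

merged = serving_view(batch_view(historical_events), speed.totals)
print(dict(merged))
```

The batch layer alone corresponds to Batch-based Data Ingestion, the speed layer alone to Real-time Data Ingestion, and the merged view is what the Lambda approach adds.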

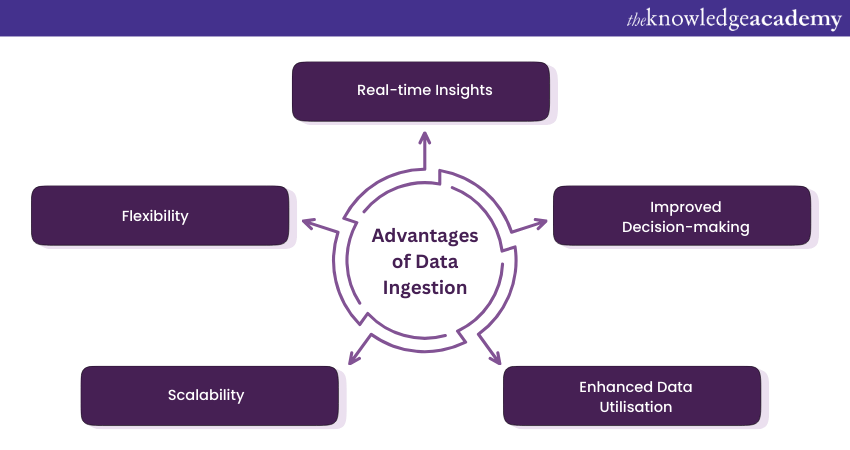

Advantages of Data Ingestion

Data Ingestion plays a pivotal role in modern Data Management, offering a range of advantages that are essential for organisations seeking to harness the full potential of their data. Here are some of the key benefits of effective Data Ingestion:

1) Real-time Insights: One of the most significant advantages of Data Ingestion is the ability to acquire and process data in real time. This enables organisations to gain immediate insights into their operations, customer behaviour, and other critical metrics. Real-time Data Ingestion is invaluable for decision-making, especially in dynamic industries like finance, e-commerce, and cybersecurity.

2) Improved Decision-making: By providing up-to-date and relevant data, Data Ingestion enhances the decision-making process. Decision-makers can rely on accurate, current information to make informed choices, driving efficiency and effectiveness in operations and strategies.

3) Enhanced Data Utilisation: Effective Data Ingestion ensures that data is available for analysis, reporting, and other data-driven activities. This maximises the utility of data, allowing organisations to derive value from it in a variety of ways, from improving customer experiences to optimising business processes.

4) Scalability: Data Ingestion processes can be designed to scale seamlessly with growing data volumes. Whether it's a sudden influx of data or gradual growth, the right Data Ingestion system can adapt to handle the load, ensuring that Data Management remains efficient.

5) Flexibility: Different types of Data Ingestion (real-time, batch-based, and Lambda architecture-based) offer flexibility in how data is collected and processed. Organisations can choose the approach that best fits their data processing needs, ensuring that they can adapt to evolving requirements.

Effective Data Ingestion is a linchpin in data-driven organisations, facilitating better decision-making, improving operations, and maximising the potential of data assets. It's a critical component of modern data ecosystems that empowers businesses to thrive in a data-rich world.

The Data Ingestion Process

The Data Ingestion process, designed and managed by a Data Integration Architect, includes collecting raw data from various sources and preparing it for analysis. This multistep pipeline ensures data is accessible, accurate, consistent, and usable for business intelligence, supporting SQL-based analytics and other processing workloads. The key stages include:

1) Data Discovery

Identify and understand the available data across the organisation, including its structure, quality, and potential uses. This exploratory phase establishes the foundation for successful ingestion.

2) Data Acquisition

Collect data from diverse sources such as structured databases, APIs, and semi-structured formats like spreadsheets. The process ensures data integrity while handling varying formats, large volumes, and complexity.

3) Data Validation

Check for errors, inconsistencies, and missing values to ensure data accuracy and reliability. Techniques like type, range, and uniqueness validation prepare data for further processing.

4) Data Transformation

Convert validated data into an analysis-ready format. Processes such as normalisation, aggregation, and standardisation ensure consistency and make the data easier to understand.

5) Data Loading

Load the transformed data into a designated storage system, such as a data warehouse or data lake. Depending on requirements, loading can be performed in batches or real-time, marking the pipeline's completion and enabling analysis and reporting.
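The validation, transformation, and loading stages above can be sketched in a few lines of Python. This is a simplified illustration, not a reference implementation: the record fields, the age range of 0 to 120, the `users` table, and the in-memory SQLite database are all assumptions chosen for the example.

```python
import sqlite3

# Hypothetical raw records, as they might arrive from the acquisition stage.
raw_records = [
    {"id": "1", "email": " Ana@Example.com ", "age": "34"},
    {"id": "2", "email": "bob@example.com", "age": "-5"},   # fails range check
    {"id": "1", "email": "dup@example.com", "age": "40"},   # fails uniqueness check
    {"id": "x", "email": "eve@example.com", "age": "29"},   # fails type check
    {"id": "3", "email": "Cy@Example.com", "age": "51"},
]


def validate(records):
    """Split records into valid and rejected using type, range and uniqueness checks."""
    valid, rejected, seen_ids = [], [], set()
    for rec in records:
        try:
            rec_id, age = int(rec["id"]), int(rec["age"])  # type validation
        except (KeyError, ValueError):
            rejected.append((rec, "type"))
            continue
        if not 0 <= age <= 120:                             # range validation
            rejected.append((rec, "range"))
        elif rec_id in seen_ids:                            # uniqueness validation
            rejected.append((rec, "uniqueness"))
        else:
            seen_ids.add(rec_id)
            valid.append({"id": rec_id, "email": rec["email"], "age": age})
    return valid, rejected


def transform(records):
    """Standardise fields so downstream queries see one consistent format."""
    return [(r["id"], r["email"].strip().lower(), r["age"]) for r in records]


def load(rows, conn):
    """Load the transformed rows into the target table as a single batch."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS users (id INTEGER PRIMARY KEY, email TEXT, age INTEGER)"
    )
    conn.executemany("INSERT INTO users VALUES (?, ?, ?)", rows)
    conn.commit()


conn = sqlite3.connect(":memory:")  # stands in for a warehouse or data lake
valid, rejected = validate(raw_records)
load(transform(valid), conn)

loaded = conn.execute("SELECT id, email, age FROM users ORDER BY id").fetchall()
reasons = [reason for _, reason in rejected]
print(loaded)
print(reasons)
```

Keeping rejected records together with the reason they failed, rather than silently dropping them, makes it easier to trace quality problems back to the source.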

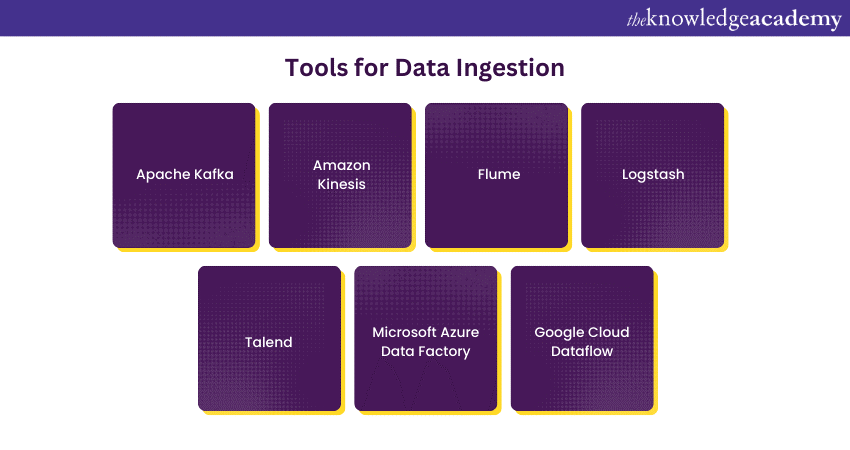

Tools for Data Ingestion

Effective Data Ingestion often requires the use of specialised tools and platforms to streamline the process and ensure data is collected, transformed, and made available for analysis. These tools come in various forms, each designed to meet specific Data Ingestion requirements. Here are some popular tools for Data Ingestion:

1) Apache Kafka: A distributed streaming platform that excels in ingesting, processing, and distributing high-throughput, real-time data streams. It's widely used for Data Ingestion in event-driven architectures.

2) Amazon Kinesis: A cloud-based platform that simplifies real-time data streaming and ingestion on Amazon Web Services (AWS). It's ideal for applications that require real-time analytics.

3) Flume: An open-source tool by Apache designed for efficiently collecting, aggregating, and moving large volumes of log data or event data from various sources to a centralised repository.

4) Logstash: Part of the Elastic Stack, Logstash is a data processing pipeline that ingests data from many sources, transforms it, and sends it to destinations such as Elasticsearch or, through an output plugin, MongoDB. It's commonly used in combination with Elasticsearch and Kibana for log analysis.

5) Talend: An open-source Data Integration tool that offers a wide range of Data Ingestion and transformation features. It's known for its user-friendly interface and extensive connectivity options.

6) Microsoft Azure Data Factory: A cloud-based Data Integration service on Microsoft Azure that allows users to create data-driven workflows for Data Ingestion, transformation, and analytics.

7) Google Cloud Dataflow: Part of the Google Cloud Platform, Dataflow is a fully managed stream and batch data processing service that facilitates Data Ingestion and processing at scale.

The choice of Data Ingestion tool depends on the specific needs and the existing data infrastructure of an organisation. Many tools offer features like data transformation, error handling, and support for various data formats to make the Data Ingestion process as efficient and flexible as possible.

When selecting a tool, it's essential to consider factors like scalability, ease of use, and compatibility with the organisation's existing technology stack.

Difference Between Data Ingestion and ETL

Data Ingestion and ETL (Extract, Transform, Load) are both essential processes in the world of Data Management and analytics. While they share some common objectives, they serve distinct purposes and exhibit notable differences.

Data Ingestion primarily focuses on collecting and transporting raw data from various sources to a centralised storage or processing system. It ensures that data is available for analysis.

ETL is a more comprehensive process that involves extracting data from source systems, transforming it to meet specific business requirements, and loading it into a target data warehouse or repository.
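The contrast can be shown with a small, hedged Python sketch. The function names `ingest` and `etl`, the JSON payloads, and the `price_cents` field are hypothetical and exist only to illustrate the difference: ingestion moves raw payloads unchanged, while ETL reshapes them before loading.

```python
import json

# Hypothetical raw payloads from a source system.
source_rows = [
    '{"sku": "A1", "price": "19.99"}',
    '{"sku": "B2", "price": "5.00"}',
]


def ingest(rows, landing_zone):
    """Data Ingestion: move raw payloads into central storage unchanged."""
    landing_zone.extend(rows)


def etl(rows, warehouse):
    """ETL: extract each record, transform it to the business format, then load it."""
    for raw in rows:
        record = json.loads(raw)                                          # extract
        record["price_cents"] = round(float(record.pop("price")) * 100)   # transform
        warehouse[record["sku"]] = record                                 # load


landing_zone, warehouse = [], {}
ingest(source_rows, landing_zone)
etl(source_rows, warehouse)

print(landing_zone)
print(warehouse)
```

After running this, `landing_zone` still holds the original strings, whereas `warehouse` holds parsed records with prices converted to integer cents.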

Difference Between Data Ingestion and Data Integration

Data Ingestion and Data Integration are critical processes in managing and utilising data effectively. While they are related, they serve different purposes and involve distinct actions.

Data Ingestion focuses on the initial step of collecting and importing raw data from various sources into a central repository or processing system. Its primary goal is to make data available for analysis.

Data Integration is a more comprehensive process that involves combining, enriching, and transforming data from various sources to create a unified, holistic view of data. Its primary goal is to provide a consolidated and meaningful dataset.
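As a rough illustration of what "combining and enriching" means in practice, the sketch below merges two already-ingested datasets into one unified view. The `crm` and `orders` lists, their fields, and the `integrate` function are assumptions invented for this example.

```python
# Hypothetical datasets that have already been ingested from two sources.
crm = [
    {"customer_id": 1, "name": "Ana"},
    {"customer_id": 2, "name": "Bob"},
]
orders = [
    {"customer_id": 1, "total": 40.0},
    {"customer_id": 1, "total": 15.5},
    {"customer_id": 3, "total": 9.0},  # no matching CRM record
]


def integrate(customers, order_rows):
    """Combine both sources into one view: each customer enriched with total spend."""
    spend = {}
    for order in order_rows:
        cid = order["customer_id"]
        spend[cid] = spend.get(cid, 0.0) + order["total"]
    return [{**c, "total_spend": spend.get(c["customer_id"], 0.0)} for c in customers]


unified = integrate(crm, orders)
print(unified)
```

Ingestion would stop once `crm` and `orders` are available in storage; integration is the later step that relates them to each other.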

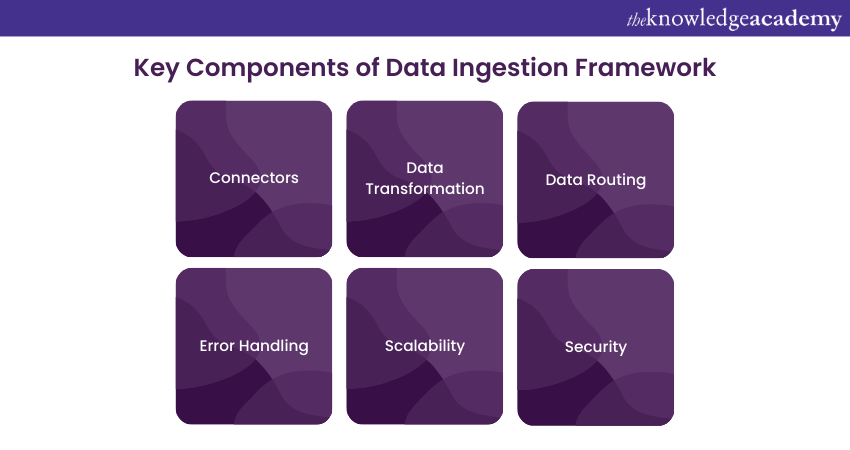

Data Ingestion Framework

A Data Ingestion framework is a structured approach or set of tools that simplifies and enhances the process of collecting, transporting, and making data available for analysis. It serves as the backbone for efficient Data Ingestion, ensuring that data is seamlessly and securely moved from source systems to data storage or processing platforms. Let’s explore the key components of a Data Ingestion Framework:

1) Connectors: These enable the framework to interact with various data sources, including databases, cloud services, applications, and more.

2) Data Transformation: The framework may include features for basic data transformation, such as format conversion, to ensure data compatibility.

3) Data Routing: It provides rules and mechanisms for directing data to the appropriate destinations or repositories based on predefined criteria.

4) Error Handling: Robust frameworks include error detection and recovery mechanisms to manage issues that may arise during Data Ingestion.

5) Scalability: The framework should be capable of handling data at scale to accommodate growing data volumes.

6) Security: Security measures like encryption and access controls are integral to protect data during ingestion.

Popular Data Ingestion frameworks include Apache NiFi, Apache Kafka, and Amazon Kinesis. The choice of framework depends on an organisation's specific Data Ingestion needs, scalability requirements, and existing technology stack. These frameworks streamline Data Ingestion processes, ensuring that data is efficiently collected and ready for analysis.
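To show how connectors, routing, and error handling fit together, here is a toy framework in plain Python. It does not reflect how NiFi, Kafka, or Kinesis work internally; the connector functions, the `type`-based routing rule, and the dead-letter list are assumptions made purely for illustration.

```python
import csv
import io
import json


def csv_connector(text):
    """Connector: yield dict records from CSV text."""
    yield from csv.DictReader(io.StringIO(text))


def json_lines_connector(text):
    """Connector: yield dict records from newline-delimited JSON."""
    for line in text.splitlines():
        if line.strip():
            yield json.loads(line)


def route(record):
    """Routing rule: choose a destination name from the record's 'type' field."""
    return "orders" if record.get("type") == "order" else "events"


def run(sources, destinations, dead_letter):
    """Pull from every connector, route each record, and note any source that fails."""
    for name, connector, payload in sources:
        try:
            for record in connector(payload):
                destinations[route(record)].append(record)
        except (json.JSONDecodeError, csv.Error) as exc:
            # Error handling: record the failure and continue with the next source.
            # Records routed before the failure stay in their destinations.
            dead_letter.append((name, str(exc)))


sources = [
    ("shop_csv", csv_connector, "type,amount\norder,10\nclick,0\n"),
    ("app_json", json_lines_connector, '{"type": "order", "amount": 7}\n{"type": "view"}\n'),
    ("broken", json_lines_connector, "{not json}\n"),
]
destinations = {"orders": [], "events": []}
dead_letter = []

run(sources, destinations, dead_letter)
print(len(destinations["orders"]), len(destinations["events"]), len(dead_letter))
```

Adding a new source only requires a new connector function, which is the main design benefit of separating connectors from routing.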

Data Ingestion Best Practices

Efficient Data Ingestion is crucial for accurate and timely Data Analysis. To ensure a smooth and reliable Data Ingestion process, consider the following best practices:

1) Data Source Profiling: Before initiating Data Ingestion, thoroughly profile and understand your data sources. This includes examining the data structure, formats, quality, and potential data anomalies. A deep understanding of your data sources ensures a more efficient and successful Data Ingestion process.

2) Data Validation and Cleaning: Implement data validation and cleaning routines to maintain Data Quality during ingestion. This includes checks for missing values, data type inconsistencies, and duplicate entries. By ensuring data integrity from the start, you minimise the risk of introducing errors into your Data Pipeline.

3) Automated Error Handling: Develop automated error detection and handling mechanisms to promptly address any issues that may arise during Data Ingestion. Implement monitoring systems, logging, and alerts to identify and respond to errors, ensuring the continuity of data flow.

4) Data Protection: Prioritise Data Security throughout the ingestion process. Use encryption to protect sensitive data during transfer and implement secure access controls. A strong focus on Data Security prevents data breaches and unauthorised access.

5) Metadata Management: Maintain detailed metadata records related to ingested data. Metadata includes information about data sources, data lineage, and transformations applied during the ingestion process. Comprehensive Metadata Management facilitates data governance, lineage tracking, and auditing.

Following these best practices ensures a smooth and reliable Data Ingestion process, laying the foundation for accurate and meaningful Data Analysis.
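A few of these practices, namely source profiling, logging for error detection, and metadata capture, can be sketched as follows. The `profile` and `build_metadata` helpers, the `crm_export` source name, and the fields recorded are hypothetical; real projects would usually rely on a data catalogue or the features of their ingestion tool.

```python
import hashlib
import json
import logging
from datetime import datetime, timezone

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("ingestion")


def profile(records):
    """Source profiling: count rows, missing values per field, and duplicate rows."""
    missing, seen, duplicates = {}, set(), 0
    for rec in records:
        for field, value in rec.items():
            if value is None or value == "":
                missing[field] = missing.get(field, 0) + 1
        key = json.dumps(rec, sort_keys=True)
        if key in seen:
            duplicates += 1
        seen.add(key)
    return {"rows": len(records), "missing": missing, "duplicates": duplicates}


def build_metadata(source_name, records, steps):
    """Metadata record: source, ingestion time, applied steps, and a content checksum."""
    payload = json.dumps(records, sort_keys=True).encode("utf-8")
    return {
        "source": source_name,
        "ingested_at": datetime.now(timezone.utc).isoformat(),
        "row_count": len(records),
        "transformations": steps,
        "sha256": hashlib.sha256(payload).hexdigest(),
    }


# Hypothetical sample from a source system.
records = [
    {"id": 1, "city": "Leeds"},
    {"id": 2, "city": ""},
    {"id": 1, "city": "Leeds"},
]

report = profile(records)
if report["missing"] or report["duplicates"]:
    # In a real pipeline this warning could feed a monitoring or alerting system.
    log.warning("Source needs cleaning before ingestion: %s", report)

metadata = build_metadata("crm_export", records, ["profiled"])
print(report)
```

The checksum gives auditors a simple way to confirm that the data loaded later is the same data that was profiled.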

Conclusion

Data Ingestion is the cornerstone of modern data-driven strategies, ensuring raw data from diverse sources is prepared for analysis. By implementing a robust ingestion process, businesses can adapt to dynamic data landscapes, enable advanced analytics, and enhance data quality. Whether the goal is real-time insights or comprehensive batch processing, mastering Data Ingestion empowers organisations to unlock actionable intelligence and drive informed decisions.

Frequently Asked Questions

What is Data Ingestion vs ETL?

Data Ingestion concerns gathering raw data from various origins and preparing it for use. In contrast, Extract, Transform, Load (ETL) consists of extracting data, converting it into an appropriate format, and loading it into storage systems.

What are the Two Main Types of Data Ingestion?

The two major types of Data Ingestion are:

1) Batch Ingestion, where data is gathered and processed at scheduled intervals, and

2) Real-time Ingestion, where data is processed immediately as it is generated, enabling timely insights and actions.

Lily Turner is a data science professional with over 10 years of experience in artificial intelligence, machine learning, and big data analytics. Her work bridges academic research and industry innovation, with a focus on solving real-world problems using data-driven approaches. Lily’s content empowers aspiring data scientists to build practical, scalable models using the latest tools and techniques.
