Who should attend this Apache Kafka Training Course?

The Apache Kafka Course is designed for professionals seeking to enhance their knowledge and skills in working with Apache Kafka. This Apache Kafka Certification Training can benefit a range of roles, including:

- Data Analysts

- Data Engineers

- Software Developers

- Database Administrators

- IT Managers

- Technical Managers

- Application Architects

Prerequisites of the Apache Kafka Training Course

There are no formal prerequisites for this Apache Kafka Course. However, prior knowledge of Java programming would be beneficial for a smoother learning experience.

Apache Kafka Training Course Overview

Apache Kafka is a distributed platform for real-time event streaming and a vital component of modern data architectures. It enables organisations to process, analyse, and transport data in a scalable, fault-tolerant manner. Kafka's significance cannot be overstated: it serves as the backbone of real-time data processing in many organisations, making it essential for businesses striving to stay competitive in an ever-evolving landscape.

Proficiency in Apache Kafka is paramount in the age of big data and real-time analytics. Data engineers, developers, and data architects who master Kafka are equipped to design robust, scalable, and fault-tolerant systems. This Apache Kafka Course helps professionals navigate the complexities of modern data integration, making them invaluable assets to their organisations.

This intensive 2-day Apache Kafka Training is designed to provide delegates with hands-on experience in Apache Kafka. Delegates will gain practical skills in setting up Kafka clusters, understanding Kafka's architecture, and implementing end-to-end data pipelines. They will learn how to optimise Kafka for their specific use cases and troubleshoot common issues, ensuring their organisations can use Kafka to its full potential.

Apache Kafka Course Objectives

- To understand Kafka fundamentals, including topics, partitions, and replication

- To master Kafka architecture, exploring producers, consumers, and brokers

- To implement fault tolerance and high availability in Kafka clusters

- To delve into advanced topics like Kafka Connect and Kafka Streams

- To learn best practices for configuration and performance tuning

- To explore security mechanisms, ensuring data integrity and privacy

- To design real-time data processing pipelines using Kafka

- To troubleshoot common issues and optimise Kafka clusters for efficiency

After completing this course, delegates receive a certification that validates their expertise in Kafka's architecture, administration, and application, helping them stand out as sought-after professionals in the competitive tech industry.

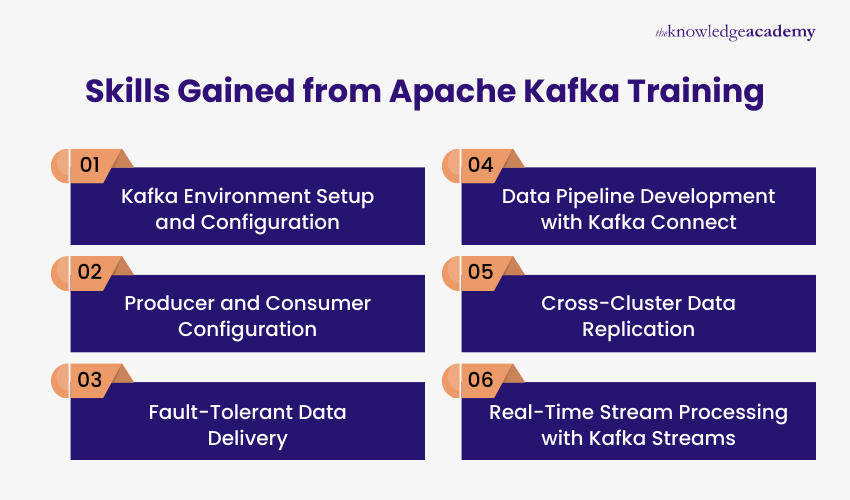

Skills Gained from Apache Kafka Training

Apache Kafka Training in Belgrade by The Knowledge Academy, the global training provider, explores how to manage real-time data streams using Kafka technologies. The course focuses on building event-driven data pipelines, configuring components, and maintaining reliable data transfer across systems.

Professionals can develop the following key skills:

- Kafka Environment Setup and Configuration: Set up Kafka environments, configure clusters, and manage essential system settings to support stable and reliable operations.

- Producer and Consumer Configuration: Configure Kafka producers and consumers to publish, subscribe, and process streaming data efficiently.

- Fault-Tolerant Data Delivery: Implement delivery guarantees and replication strategies to ensure reliable data transmission and maintain data consistency.

- Data Pipeline Development with Kafka Connect: Build data pipelines using Kafka Connect to integrate external systems and enable seamless data movement across applications.

- Cross-Cluster Data Replication: Apply mirroring techniques such as MirrorMaker to replicate data between Kafka clusters and support scalable architectures.

- Real-Time Stream Processing with Kafka Streams: Process event-driven data streams to transform, filter, and analyse information continuously within streaming applications.



