
In the age of exponential data growth, harnessing and processing vast datasets efficiently is crucial. Enter Resilient Distributed Datasets (RDDs), the core abstraction on which Apache Spark is built. RDDs bring fault tolerance, parallelism, and scalability to big data workflows.

This blog will explore RDDs' defining features, real-world applications, and their role in revolutionising data analytics. Ready to unravel the power of RDDs? Let’s dive in and discover how they’re reshaping the future of data processing.

Table of Contents

1) What is a Resilient Distributed Dataset?

2) Characteristics of RDD

3) How Does RDD Store Data?

4) Use Cases of RDD

5) Advantages of RDD

6) Challenges of RDD

7) Conclusion

What is a Resilient Distributed Dataset?

A Resilient Distributed Dataset (RDD) is an essential data structure in Apache Spark that is designed for distributed and parallel data processing. It represents a fault-tolerant collection of elements that can be processed across a cluster. RDDs are immutable, meaning once created, they cannot be modified; instead, transformations create new RDDs. Operations on RDDs are lazily evaluated, meaning computation occurs only when an action like collecting data or saving results is performed.

They are distributed across nodes in a cluster, enabling parallelism and scalability. RDDs support various transformations and actions for complex data workflows and are highly resilient to failures through lineage information, which lets Spark recompute lost partitions. While versatile, they may be less efficient than newer Spark abstractions such as DataFrames and Datasets, which benefit from automatic query optimisation.
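To make immutability and lazy evaluation concrete, here is a minimal sketch in plain Python. It is a toy model, not Spark's implementation: the `ToyRDD` class and the `square` helper are hypothetical names invented for illustration, although `map`, `filter`, and `collect` mirror the names of real RDD operations.

```python
# Toy model in plain Python; this is NOT Spark's implementation or API.
class ToyRDD:
    """An immutable, lazily evaluated collection (hypothetical teaching class)."""

    def __init__(self, source, steps=()):
        self._source = tuple(source)  # read-only input data
        self._steps = tuple(steps)    # recorded transformations (the lineage)

    def map(self, fn):
        # Transformation: returns a NEW ToyRDD; nothing is computed yet.
        return ToyRDD(self._source, self._steps + (("map", fn),))

    def filter(self, pred):
        # Transformation: also lazy, also returns a new object.
        return ToyRDD(self._source, self._steps + (("filter", pred),))

    def collect(self):
        # Action: only now are the recorded steps applied, in order.
        data = list(self._source)
        for kind, fn in self._steps:
            if kind == "map":
                data = [fn(x) for x in data]
            else:
                data = [x for x in data if fn(x)]
        return data


calls = []  # records each time the mapped function actually runs


def square(x):
    calls.append(x)
    return x * x


numbers = ToyRDD([1, 2, 3, 4])
squares = numbers.map(square)                        # lazy: square has not run
even_squares = squares.filter(lambda x: x % 2 == 0)  # still lazy
calls_before_action = len(calls)                     # 0, no work done so far
result = even_squares.collect()                      # the action triggers the work
print(calls_before_action, result)                   # prints: 0 [4, 16]
```

Note that `numbers` is untouched by the later steps: each transformation builds a new object that remembers how it was derived, which is the same idea Spark relies on for lineage.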

Characteristics of RDD

Resilient Distributed Datasets have some key characteristics that make them unique and effective for big data processing. Let's explore these characteristics in this section:

a) Resilience: RDDs are resilient because they can recover data lost during processing due to hardware failures. They do this by keeping a record of the operations applied to the data, known as the lineage, and replaying those operations to rebuild whatever was lost.

b) Distribution: RDDs are distributed across multiple computers in a cluster, which means that they can handle large amounts of data by breaking it into smaller units that can be processed in parallel.

c) Immutability: RDDs are immutable, meaning once created, their data cannot be changed. Instead, any operation on an RDD creates a new one, ensuring data consistency.

d) In-memory Computation: RDDs can store data in memory, allowing for faster data processing compared to traditional disk-based systems.

e) Transformations and Actions: RDDs support two types of operations. Transformations create new RDDs by applying functions to existing data, while actions return results or save data.

f) Lazy Evaluation: RDDs use lazy evaluation, which means transformations are not executed until an action is called.

g) Parallel Processing: RDDs can be divided into smaller partitions and processed in parallel on different computers, making them highly efficient for parallel data processing.
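The distribution and parallel processing characteristics above can be sketched in plain Python. This is only an illustration under stated assumptions: threads stand in for the machines of a cluster, and `split_into_partitions` and `partial_sum_of_squares` are hypothetical helper names, not Spark functions.

```python
from concurrent.futures import ThreadPoolExecutor


def split_into_partitions(data, num_partitions):
    """Split a list into contiguous chunks of roughly equal size."""
    size, extra = divmod(len(data), num_partitions)
    partitions, start = [], 0
    for i in range(num_partitions):
        # The first `extra` partitions take one additional element each.
        end = start + size + (1 if i < extra else 0)
        partitions.append(data[start:end])
        start = end
    return partitions


def partial_sum_of_squares(partition):
    """Work done independently on one partition."""
    return sum(x * x for x in partition)


data = list(range(1, 11))                    # 1..10
partitions = split_into_partitions(data, 3)  # [[1, 2, 3, 4], [5, 6, 7], [8, 9, 10]]

# Each partition is processed by its own worker; threads play the role of nodes.
with ThreadPoolExecutor(max_workers=3) as pool:
    partial_results = list(pool.map(partial_sum_of_squares, partitions))

total = sum(partial_results)                 # combine the per-partition results
print(partial_results, total)                # prints: [30, 110, 245] 385
```

The pattern is the important part: split the data, compute on each piece independently, then combine the small partial results.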


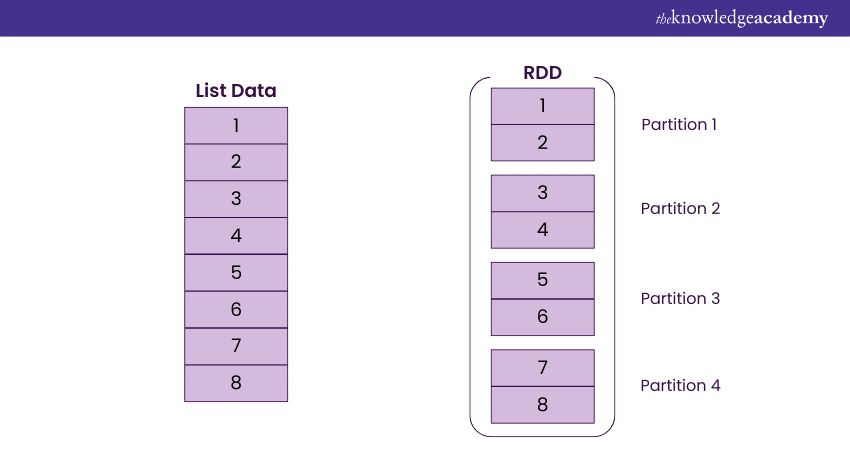

How Does RDD Store Data?

A Resilient Distributed Dataset (RDD) is an immutable data structure in Apache Spark that stores data in a read-only format. Operations on an RDD create new RDDs without altering the original data.

RDDs can be kept in memory through caching, allowing quick access to data and minimising disk I/O. If the data exceeds available memory, the partitions that do not fit are either recomputed when needed or spilled to disk, depending on the chosen storage level. This caching mechanism significantly reduces disk overhead and enhances performance when the same RDD is reused.

Data in RDDs is distributed across multiple partitions, enabling parallel processing and keeping the data recoverable even if a node fails. RDD persistence is controlled with the cache() or persist() methods; persist() accepts different storage levels, while cache() uses the default one. The fault-tolerant design enables lost partitions to be recreated using lineage information.
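The interplay between caching and lineage can be sketched with a toy model in plain Python. This is an assumption-laden illustration, not how Spark manages storage: `CachedPartitions`, `get`, and `lose` are hypothetical names, and a dictionary stands in for executor memory.

```python
class CachedPartitions:
    """Toy model: cached partitions that can be rebuilt from their lineage."""

    def __init__(self, source_partitions, transform):
        self._source = [tuple(p) for p in source_partitions]  # stable input data
        self._transform = transform  # the lineage: how to rebuild a partition
        self._cache = {}             # partition index -> computed result
        self.compute_count = 0       # how many times a partition was computed

    def get(self, index):
        # Serve from the cache if possible; otherwise recompute from lineage.
        if index not in self._cache:
            self.compute_count += 1
            self._cache[index] = [self._transform(x) for x in self._source[index]]
        return self._cache[index]

    def lose(self, index):
        # Simulate a node failure or a memory eviction for one partition.
        self._cache.pop(index, None)


rdd = CachedPartitions([[1, 2], [3, 4]], lambda x: x * 10)

first = rdd.get(1)      # computed for the first time
again = rdd.get(1)      # served from the cache, no recomputation
count_after_cache_hit = rdd.compute_count

rdd.lose(1)             # the cached copy disappears
recovered = rdd.get(1)  # rebuilt from the source data and the transformation
print(first, count_after_cache_hit, recovered, rdd.compute_count)
# prints: [30, 40] 1 [30, 40] 2
```

Only the lost partition is recomputed; partition 0 is never touched, which is why lineage-based recovery avoids replicating the data itself.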

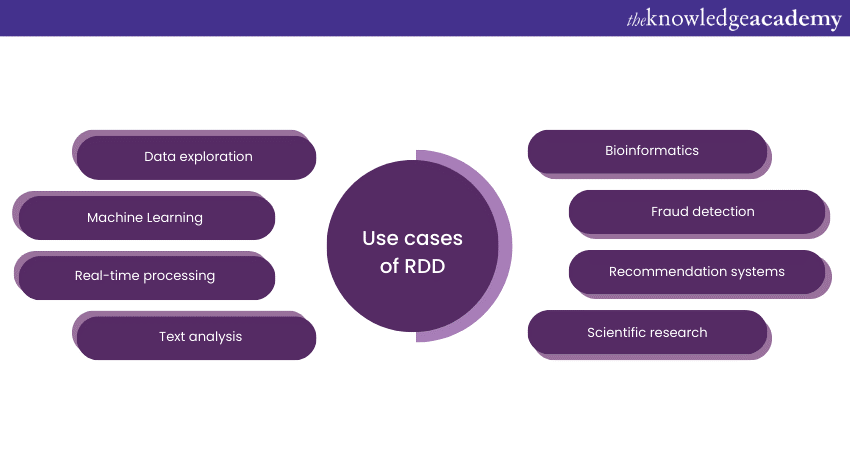

Use Cases of RDD

Resilient Distributed Datasets are versatile data structures used in various real-world scenarios. Here are some simplified use cases of RDDs:

a) Data Exploration: RDDs are handy for exploring large datasets, allowing data scientists to analyse and extract insights from vast volumes of information.

b) Machine Learning: RDDs play a crucial role in big data Machine Learning. They enable the parallel processing of data required for training and deploying Machine Learning models at scale.

c) Real-time Processing: When dealing with continuous streams of data in real-time applications, such as social media updates or sensor data, RDDs facilitate the efficient handling of incoming data. Spark Streaming, for example, treats a stream as a sequence of small RDD batches.

d) Text Analysis: For natural language processing tasks, like sentiment analysis or language translation, RDDs can process and transform text data in parallel.

e) Bioinformatics: In genomics research, where enormous datasets are generated from DNA sequencing, RDDs assist in the analysis and interpretation of genetic data.

f) Fraud Detection: In the finance industry, RDDs can be employed to process large volumes of transactions and identify suspicious patterns indicative of fraud.

g) Recommendation Systems: E-commerce websites and streaming platforms use RDDs to analyse user behaviour and suggest products or content based on their preferences.

h) Scientific Research: In various scientific fields, RDDs help process experimental data, simulations, or observations on a large scale.
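As a small illustration of the text analysis use case, the classic word count can be written in the same three steps an RDD pipeline would use. The sketch below is plain Python on a made-up two-line input, not Spark code; the comments name the RDD operations (`flatMap`, `map`, `reduceByKey`) that each step corresponds to.

```python
from collections import defaultdict

# Hypothetical sample input, standing in for a large text file.
lines = ["spark makes big data simple", "big data needs big tools"]

# flatMap-style step: each line produces many words.
words = [word for line in lines for word in line.split()]

# map-style step: each word becomes a (word, 1) pair.
pairs = [(word, 1) for word in words]

# reduceByKey-style step: add up the counts for each distinct word.
counts = defaultdict(int)
for word, n in pairs:
    counts[word] += n

print(counts["big"], counts["data"], counts["spark"])  # prints: 3 2 1
```

In Spark the same steps would run per partition across the cluster, with the final aggregation combining the partial counts for each word.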


Advantages of RDD

The main advantages of Resilient Distributed Datasets (RDDs), in plain language, are:

a) Data Safety: RDDs are good at keeping your data safe. If a computer in your cluster fails, the lost partitions can be recomputed from the recorded lineage, so you still get your results without any loss or damage.

b) Fast Data Processing: RDDs can help you process data quickly because they keep data in the computer's memory, which is much faster than reading it from a disk.

c) Custom Data Work: With RDDs, you can do exactly the kind of work you want on your data. You're not limited to fixed methods or operations.

d) Data Flexibility: RDDs can work with different types of data. It doesn't matter if your data is neatly organised or messy; RDDs can handle it.

e) Big Scalability: You can make RDDs work on big data just as easily as small data. You can add more computers to help them work faster when you need to.

f) High Compatibility: RDDs work smoothly with other parts of Apache Spark, a big data framework. This makes it easy to build big data applications.

Challenges of RDD

RDDs are a powerful data processing tool, but they do come with certain challenges and limitations. These challenges are discussed below:

a) Complexity of Operations: RDDs require a deeper understanding of data processing concepts, which can make them challenging for newcomers to big data processing.

b) Performance Overheads: Because RDDs can't be changed once they're created, every transformation produces a new RDD rather than updating data in place. This can add overhead in workloads that make many small, incremental updates.

c) Memory Usage: While RDDs promote in-memory computation for speed, they can consume a large amount of memory when handling large datasets, potentially leading to memory-related issues.

d) Data Serialisation: Serialisation of data when passing it between nodes in a cluster can be a bottleneck, affecting performance.

e) Narrow and Wide Transformations: Understanding and using the right types of transformations (narrow vs. wide) can be challenging, as wide transformations require shuffling data across partitions, which can be resource intensive.

f) Lack of Optimisation: RDDs do not benefit from the query optimisation techniques used in relational databases, or from the automatic optimisation Spark applies to DataFrames. This means that query performance may not be as efficient for structured data.
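The difference between narrow and wide transformations mentioned in point e) is easier to see with a small example. The sketch below is plain Python under stated assumptions: two lists stand in for partitions on two nodes, and `hash_partition` and `shuffle` are hypothetical helpers, not Spark's partitioner or shuffle machinery.

```python
def hash_partition(key, num_partitions):
    # Deterministic toy partitioner (an assumption made for illustration).
    return sum(key.encode()) % num_partitions


# Two partitions of (key, value) records, as if held on two different nodes.
partitions = [
    [("a", 1), ("b", 2)],
    [("a", 3), ("c", 4)],
]

# Narrow transformation: each output partition depends on exactly one input
# partition, so no records need to move between nodes.
narrow = [[(k, v * 2) for k, v in part] for part in partitions]


def shuffle(parts, num_partitions):
    """Wide step: route every record to the partition that owns its key."""
    out = [[] for _ in range(num_partitions)]
    moved = 0
    for src, part in enumerate(parts):
        for key, value in part:
            dst = hash_partition(key, num_partitions)
            if dst != src:
                moved += 1  # this record would cross the network
            out[dst].append((key, value))
    return out, moved


shuffled, moved = shuffle(partitions, 2)

# After the shuffle, all records for a key sit together and can be summed.
totals = []
for part in shuffled:
    sums = {}
    for key, value in part:
        sums[key] = sums.get(key, 0) + value
    totals.append(sums)

print(moved, totals)  # prints: 1 [{'b': 2}, {'a': 4, 'c': 4}]
```

Even in this tiny case one record has to change partition before the per-key sums can be computed; on a real cluster that movement means network and disk traffic, which is why wide transformations are the expensive ones.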

Conclusion

Resilient Distributed Datasets (RDDs) are more than just a feature of Apache Spark: they are the foundation its higher-level APIs are built on. With their fault-tolerant design, in-memory speed, and seamless scalability, RDDs help turn the chaos of big data into actionable insights. Spark your journey into the future of big data with RDDs leading the way!


Frequently Asked Questions

What is the Difference Between a Resilient Distributed Dataset and DataFrame?

RDDs offer low-level, unoptimised data processing, while DataFrames provide high-level, optimised operations with schema support for structured data, making them faster and easier for SQL-like workflows.

What is the Key Difference Between RDD and DSM?

RDDs are immutable and fault-tolerant, supporting parallel data processing, whereas Distributed Shared Memory (DSM) allows mutable, shared states across nodes, requiring explicit synchronisation and lacking inherent fault tolerance.


Lily Turner is a data science professional with over 10 years of experience in artificial intelligence, machine learning, and big data analytics. Her work bridges academic research and industry innovation, with a focus on solving real-world problems using data-driven approaches. Lily’s content empowers aspiring data scientists to build practical, scalable models using the latest tools and techniques.
