
One of the common phrases that echoes through the digital world is 'Data is the new gold'. While that may be true, raw data alone is nothing more than noise. This is where Data Processing comes in: the art of transforming scattered bits into meaningful insights. Data Processing plays a vital role in every industry today, from calculating sales figures to powering AI systems. But how exactly does it work?

This blog explores what Data Processing is. It breaks down the steps, types, and real-world uses of Data Processing, and more. So read on and learn how Data Processing helps businesses grow, how it makes decisions smarter, and how information becomes more than just numbers!

Table of Contents

1) What is Data Processing?

2) Importance of Data Processing

3) 6 Steps of Data Processing

4) Different Types of Data Processing

5) Data Processing Technologies and Tools

6) Examples of Data Processing

7) Challenges of Data Processing

8) The Future of Data Processing

9) What are the Three Main Stages of Information Processing?

10) What is Data Processing in GDPR?

11) Conclusion

What is Data Processing?

Data Processing means collecting and working with data to turn it into useful information. It includes steps like collecting, entering, checking, sorting, and processing data and then sharing the results. These steps can be done by hand or, more often, by computers and software. The aim is to change raw, messy data into a clear format that’s easy to understand and use for making decisions.

Today, Data Processing is important in many areas like business, science, healthcare, and education. It helps organisations study trends, create reports, and make smart choices using accurate and up-to-date information. Methods like batch, real-time, and distributed processing are chosen based on how quickly the results are needed.

Importance of Data Processing

Data Processing is crucial for companies that rely on accurate and timely information to make good decisions. When done correctly, it offers several key benefits:

1) Better Decisions: Raw data isn’t beneficial on its own. By processing it into clear information, businesses can make smarter choices. A strong Data Management strategy also helps maintain data quality.

2) Saves Time and Money: Setting up data systems costs money upfront, but they save time and improve efficiency. This allows companies to spend more time on growth than on manually handling data.

3) Improved Customer Experience: By understanding customer habits and preferences through data, companies can tailor their products and services to meet customer needs, leading to higher satisfaction and loyalty.

4) Stronger Market Position: Businesses that are good at Data Processing using smart strategies and tools often gain an edge over their competitors.

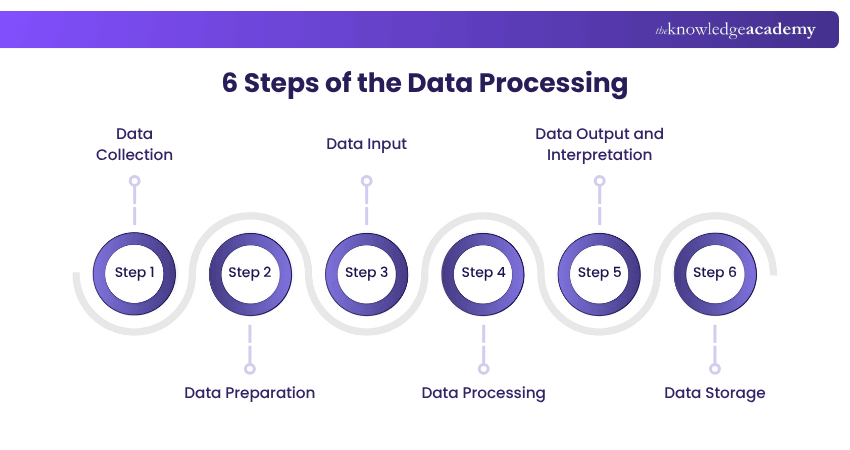

6 Steps of Data Processing

Data processing involves several key stages in converting raw data into useful information. Here's a breakdown of these stages:

Step 1: Data Collection

Data Collection is the first step. Data is gathered from sources like data lakes and data warehouses. It's crucial to ensure these sources are reliable to collect high-quality data for later use.

Step 2: Data Preparation

Once collected, data enters the preparation stage, often called "pre-processing." Here, raw data is cleaned and organised. Errors are checked, and bad data (redundant, incomplete, or incorrect) is eliminated to create high-quality data for business intelligence.
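As a minimal sketch of this stage, assume records arrive as Python dictionaries with hypothetical `id`, `email`, and `amount` fields. The three kinds of bad data named above (redundant, incomplete, incorrect) could then be filtered roughly like this. The field names and validation rules are illustrative assumptions, not a standard.

```python
def prepare(records):
    """Return cleaned records: no duplicates, no missing fields, no invalid amounts."""
    seen_ids = set()
    cleaned = []
    for row in records:
        # Incomplete: a required field is missing or empty.
        if any(row.get(field) in (None, "") for field in ("id", "email", "amount")):
            continue
        # Incorrect: the amount cannot be read as a non-negative number.
        try:
            amount = float(row["amount"])
        except (TypeError, ValueError):
            continue
        if amount < 0:
            continue
        # Redundant: a record with this id has already been kept.
        if row["id"] in seen_ids:
            continue
        seen_ids.add(row["id"])
        cleaned.append(
            {"id": row["id"], "email": row["email"].strip().lower(), "amount": amount}
        )
    return cleaned


# Hypothetical raw input with one example of each problem.
raw = [
    {"id": 1, "email": " Ana@Example.com ", "amount": "19.99"},
    {"id": 1, "email": "ana@example.com", "amount": "19.99"},  # duplicate id
    {"id": 2, "email": "", "amount": "5.00"},                   # missing email
    {"id": 3, "email": "bo@example.com", "amount": "n/a"},      # invalid amount
    {"id": 4, "email": "cy@example.com", "amount": 42},
]
clean_rows = prepare(raw)
```

Only the first and last records survive, with the email normalised and the amount converted to a number.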

Step 3: Data Input

Clean data is then entered into its destination, such as a CRM like Salesforce or a data warehouse like Redshift. This stage translates the cleaned data into a format the destination system can use.

Step 4: Data Processing

During this stage, the data entered in the previous step is processed for interpretation. Machine learning algorithms are often used, though the process may vary depending on the data source and its intended use (e.g., advertising patterns, medical diagnosis, customer needs).

Step 5: Data Output and Interpretation

This stage makes data usable for people who are not data scientists. Data is translated into readable formats like graphs, videos, images, or text. It can now be used by company members for Data Analytics Projects.

Step 6: Data Storage

The final stage is storing the processed data for future use. Properly stored data can be easily accessed when needed and is crucial for compliance with data protection laws like GDPR. GDPR Training can also help organisations better manage how data is handled. While some data is used immediately, much of it serves future purposes.
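The six steps can be sketched end to end in a few lines of Python. This is an illustration under stated assumptions rather than a reference design: the CSV text, the function names, and the JSON output are all hypothetical, and step 4 uses a simple per-region total instead of a machine learning model to keep the example short.

```python
import csv
import io
import json

# Hypothetical raw source; one row has a missing amount.
RAW_CSV = """region,amount
north,120
south,80
north,
south,20
"""


def collect(source_text):
    """Step 1: gather raw rows from a source (here, CSV text)."""
    return list(csv.DictReader(io.StringIO(source_text)))


def prepare(rows):
    """Step 2: drop incomplete rows."""
    return [row for row in rows if row["region"] and row["amount"]]


def load(rows):
    """Step 3: convert rows into the typed format the next step expects."""
    return [(row["region"], float(row["amount"])) for row in rows]


def process(records):
    """Step 4: aggregate amounts per region."""
    totals = {}
    for region, amount in records:
        totals[region] = totals.get(region, 0.0) + amount
    return totals


def present(totals):
    """Step 5: render a readable text report."""
    return "\n".join(
        f"{region}: {total:.2f}" for region, total in sorted(totals.items())
    )


def store(totals):
    """Step 6: serialise the result for later use (a JSON string here)."""
    return json.dumps(totals, sort_keys=True)


totals = process(load(prepare(collect(RAW_CSV))))
report = present(totals)
stored = store(totals)
```

Keeping each step as its own function mirrors the stages above and makes it easy to swap one out, for example replacing `store` with a database write.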

Different Types of Data Processing

Data Processing involves various methods to convert raw data into useful information. Each type suits different scenarios and needs.

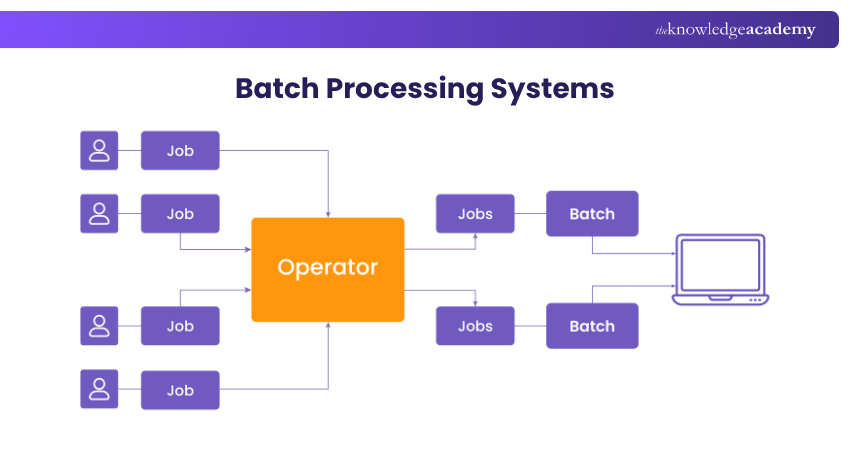

Batch Processing

Handles large volumes of data at set times, ideal for non-urgent tasks. This method aggregates data and processes it during off-peak hours to minimise impact on daily operations.

Example: Financial institutions process cheques and transactions overnight, updating account balances in one go.

Real-time Processing

Processes data immediately upon receipt, providing instant feedback. Crucial for tasks where delays are unacceptable, ensuring timely decisions and responses.

Example: GPS navigation systems offer real-time turn-by-turn directions based on live traffic and road conditions.
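The difference between batch and real-time processing can be sketched in a few lines. This is an illustrative example only: the transaction list and the function names `process_batch` and `process_stream` are hypothetical, and a production system would typically read from a queue or message broker rather than an in-memory list.

```python
def process_batch(transactions, opening_balance=0.0):
    """Batch: collect everything first, then update the balance in one go."""
    return opening_balance + sum(transactions)


def process_stream(transactions, opening_balance=0.0):
    """Real-time: update the balance as each transaction arrives and
    make the new balance available immediately."""
    balance = opening_balance
    for amount in transactions:
        balance += amount
        yield balance


# Hypothetical transactions for one account over one day.
day_of_transactions = [100.0, -20.0, 50.0]

# One result, produced after all the data has been gathered.
end_of_day_balance = process_batch(day_of_transactions)

# One result per event, produced as each event is handled.
running_balances = list(process_stream(day_of_transactions))
```

Both approaches arrive at the same final balance. What differs is when the intermediate results become available.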

Multiprocessing

Uses multiple CPUs to handle tasks simultaneously, improving efficiency, especially for complex computations that can be split into smaller tasks.

Example: Movie production uses multiprocessing for rendering 3D animations, speeding up project completion and improving visual quality.

Online Processing

Enables interactive Data Processing over a network with continuous input and output for immediate responses. Essential for e-commerce and online services.

Example: Online banking systems process financial transactions in real-time, allowing instant fund transfers and account updates.

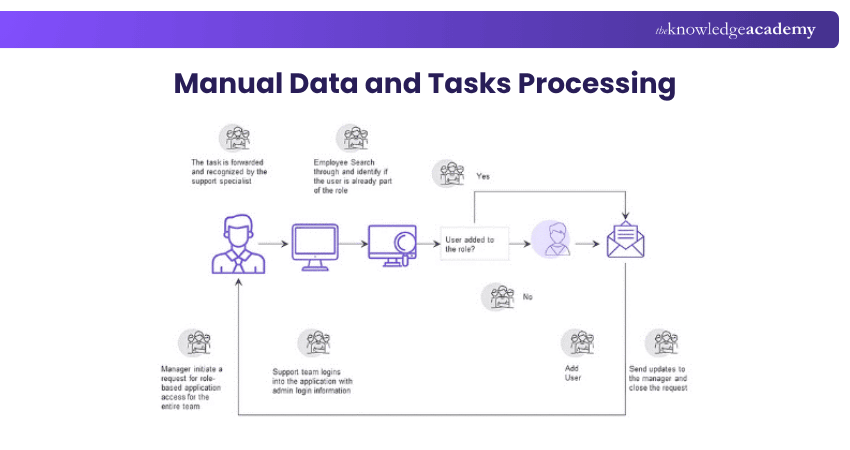

Manual Data Processing

Requires human intervention for data input, processing, and output, typically without electronic devices. Prone to errors but was common before computers.

Example: Libraries used to catalogue books manually, recording details by hand for inventory purposes.

Cloud Computing

Provides computing resources like servers and storage over the internet, offering flexibility and scalability without physical infrastructure.

Example: Small businesses use cloud computing for data storage and software services, allowing easy scaling as the business grows.

Mechanical Data Processing

Uses machines or equipment for data tasks, a prevalent method before the digital era, involving tangible devices to input, process, and output data.

Example: Early 20th-century voting machines tallied votes as voters pulled levers, simplifying counting and reducing errors.

Distributed Processing

Spreads computational tasks across multiple devices to improve speed and reliability, handling large-scale tasks more efficiently than a single computer.

Example: Video streaming services store videos on multiple servers for smooth playback and quick access worldwide.

Automatic Data Processing

Uses software to automate routine tasks, reduce manual input, and increase efficiency by streamlining processes and minimising errors.

Example: Automated billing systems in telecommunications calculate and send monthly charges to customers, streamlining operations and reducing errors.

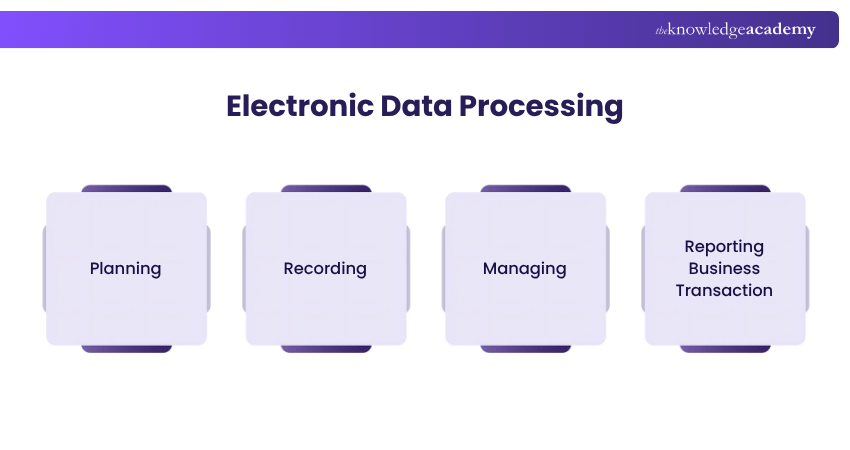

Electronic Data Processing

Uses computers and digital technology for efficient data handling, allowing rapid processing, vast storage, and easy retrieval.

Example: Retail checkouts use barcode scans to update inventory and process sales quickly.

Data Processing Technologies and Tools

Data Warehouses, Artificial Intelligence & Machine Learning, and Cloud Technology are among the best technologies and tools that play vital roles in extracting valuable insights from raw data. Let's explore them in detail:

Databases and Data Warehouses

a) Databases store structured data and are essential for Data Processing tasks.

b) They allow quick querying, updating, and retrieving of information.

c) Common examples include MySQL, PostgreSQL, and Microsoft SQL Server.

d) Data warehouses collect data from multiple sources in one place.

e) They are built to analyse large datasets and support business decisions.

f) Data warehouses are often used alongside technologies like Hadoop, Apache Spark, and data lakes.
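To make the querying, updating, and retrieving described above concrete, here is a small sketch using SQLite through Python's built-in `sqlite3` module. SQLite is chosen only because it needs no server. The `sales` table and its columns are hypothetical.

```python
import sqlite3

# An in-memory database keeps the example self-contained.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE sales (id INTEGER PRIMARY KEY, region TEXT, amount REAL)"
)
conn.executemany(
    "INSERT INTO sales (region, amount) VALUES (?, ?)",
    [("north", 120.0), ("south", 80.0), ("north", 30.0)],
)

# Update: correct one record.
conn.execute("UPDATE sales SET amount = 100.0 WHERE region = 'south'")

# Query and retrieve: total sales per region, the kind of aggregate a
# data warehouse is built to answer at much larger scale.
totals = dict(
    conn.execute(
        "SELECT region, SUM(amount) FROM sales GROUP BY region ORDER BY region"
    )
)
conn.close()
```

The same SQL statements would work with little or no change on MySQL or PostgreSQL, although the connection code differs for each.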

Artificial Intelligence & Machine Learning

a) Artificial intelligence (AI) and machine learning (ML) are key in modern Data Processing.

b) They help organisations find patterns and make predictions using data.

c) Python, R, and SAS are popular languages used in ML for Data Processing.

d) Supervised learning uses labelled data to train models for making predictions.

e) Unsupervised learning finds patterns in unlabelled data using techniques like clustering and dimensionality reduction.

f) Reinforcement learning improves decisions through continuous feedback from the environment.

g) These ML methods have led to significant advances in speech recognition and medical diagnosis.
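The contrast between supervised and unsupervised learning can be shown with two toy functions. These are deliberately simple stand-ins written for this example (a one-nearest-neighbour classifier and a two-cluster k-means on single numbers), and the data is made up. Real projects would normally rely on an established library rather than hand-written code like this.

```python
def nearest_neighbour_predict(labelled, x):
    """Supervised: predict the label of x from labelled (value, label) pairs
    by copying the label of the closest known value."""
    _, closest_label = min(labelled, key=lambda pair: abs(pair[0] - x))
    return closest_label


def two_means(values, iterations=10):
    """Unsupervised: split unlabelled numbers into two clusters (k-means, k=2).
    Assumes the values contain at least two distinct numbers."""
    low, high = min(values), max(values)  # deterministic starting centres
    for _ in range(iterations):
        # Assign each value to its nearer centre, then move the centres.
        cluster_low = [v for v in values if abs(v - low) <= abs(v - high)]
        cluster_high = [v for v in values if abs(v - low) > abs(v - high)]
        low = sum(cluster_low) / len(cluster_low)
        high = sum(cluster_high) / len(cluster_high)
    return sorted(cluster_low), sorted(cluster_high)


# Supervised: the training data carries labels.
labelled = [(1.0, "small"), (2.0, "small"), (9.0, "large"), (10.0, "large")]
prediction = nearest_neighbour_predict(labelled, 8.2)

# Unsupervised: no labels, the groups are discovered from the values alone.
unlabelled = [1.0, 1.5, 2.0, 9.0, 9.5, 10.0]
group_a, group_b = two_means(unlabelled)
```

In the first case the model is told what "small" and "large" mean. In the second it only learns that the numbers fall into two groups.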

Cloud Technology & Data Analytics Platforms

a) Cloud technology has changed how businesses manage Data Processing by offering scalable and cost-effective solutions.

b) It allows access to Data Processing tools from anywhere, removing the need for on-premise hardware.

c) Amazon Web Services (AWS), Microsoft Azure and Google Cloud Platform are top Cloud providers.

d) These platforms help organisations set up Data Analytics systems quickly and efficiently.

e) Cloud platforms support data storage in systems like data lakes.

f) They enable large-scale Data Processing tasks such as ETL workflows and analytics.

g) Data orchestration tools manage and coordinate processing tasks across various systems.

h) Visualisation tools present processed data clearly for better decision-making.

Examples of Data Processing

Data Processing is crucial in many industries and applications, showcasing its versatility and essential role in digital operations. Here are some examples highlighting its importance:

Digital Marketing

Digital marketing companies use demographic data to create targeted marketing campaigns. By processing this data effectively, they identify target audiences, understand their preferences, and optimise marketing efforts for better engagement and conversion rates.

Financial Transactions

Data Processing is essential for financial transactions like bank transfers, online payments, and stock trades.

Navigation Systems

Real-time Data Processing is vital for GPS navigation systems, which process satellite data instantly to provide turn-by-turn directions.

Supply Chain Optimisation

Data from various supply chain points is processed to identify bottlenecks, predict demand, and optimise production logistics.

Weather Forecasting

Weather forecasting relies on multiprocessing (parallel processing), where data from satellites and weather stations is processed simultaneously. This enables rapid analysis of complex meteorological data to accurately predict weather conditions.

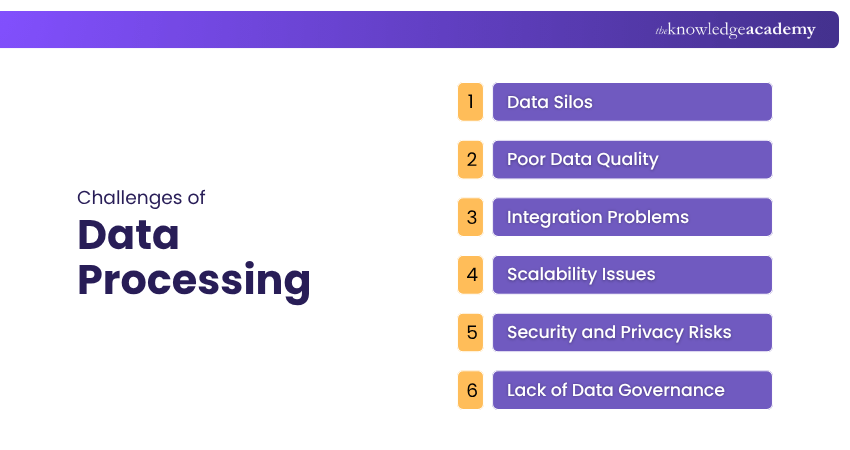

Challenges of Data Processing

Data Processing is vital for businesses aiming to harness their data effectively. However, several challenges can hinder this process:

1) Data Silos: When teams work separately and don’t share data, it creates isolated data sets. This makes it hard to get a complete picture of the business and can slow down analysis and decision-making.

2) Poor Data Quality: Mistakes like duplicate, outdated, or incorrect data can happen when there’s no standard way to collect or clean data. This leads to wrong insights and poor decisions.

3) Integration Problems: Data often comes from different places and in different formats. If these can’t be connected easily, getting useful insights from all of them becomes difficult.

4) Scalability Issues: As the amount of data grows, older systems may be unable to keep up. Businesses need flexible tools, like cloud-based systems, that can grow with their data needs.

5) Security and Privacy Risks: Keeping data safe and following rules like GDPR is very important. Weak security can lead to data leaks and heavy fines.

6) Lack of Data Governance: Without clear rules on managing data, companies can struggle with keeping data accurate, secure, and within legal boundaries.

The Future of Data Processing

Here are some important trends shaping the future of Data Processing:

1) AI-powered Automation: AI and machine learning are helping to speed up and simplify Data Processing. These tools can quickly find patterns and insights in large amounts of data with less need for manual work.

2) Growth of Edge Computing: Edge computing handles data closer to where it’s created, like on devices or sensors. This reduces delays and saves bandwidth, which is great for real-time apps and smart devices.

3) Data Mesh Approach: Data mesh allows different teams in a company to handle and use data independently, instead of relying on a central team. This makes it easier to scale and manage data as companies grow.

4) Real-time Data Use: Businesses want to make decisions faster, so real-time analytics is becoming more common. It lets companies process and act on data instantly, helping them stay ahead in a fast-changing market.

5) ML and AI Integration: Machine Learning and Artificial Intelligence are increasingly integrated with Data Processing technologies. This integration automates Data analysis, predictive modelling, and decision-making processes.

6) Privacy-preserving Data Processing: With increasing worries over Data Privacy and stricter regulations, technologies that support privacy-preserving Data Processing are becoming more important.

What are the Three Main Stages of Information Processing?

The classical information processing model includes three key stages. First is stimulus identification, where the brain recognises and interprets information. Next is response selection, where the appropriate action is chosen. Finally, response programming sends signals to perform the selected action through muscles or movement systems.

What is Data Processing in GDPR?

Under the General Data Protection Regulation (GDPR), Data Processing refers to any operation performed on personal data. This includes collecting, recording, organising, storing, using, or deleting personal information. Organisations must process data lawfully and transparently to uphold individuals' rights and data security.

Conclusion

By learning what Data Processing is, along with its steps, types, and uses, you can see how it supports smart decisions in everyday work. From businesses to apps, it plays a big role in making things easier, quicker, and more accurate. It’s a skill worth knowing in today’s data-driven world. For those preparing for a career in data entry, reviewing Data Entry Interview Questions can help you gain an in-depth understanding of this role and how Data Processing fits into the bigger picture.

Frequently Asked Questions

What are Data Processing Tools?

Data processing tools are software or systems used to collect, process, and analyse data efficiently. Examples include Excel, SQL, Hadoop, and Python-based tools. These tools streamline workflows, handle large datasets, and ensure accuracy, enabling faster decision-making and improved Data Management.

What's the Role of Data Storage in Data Processing?

Data storage is crucial in Data Processing as it securely holds raw, intermediate, and processed data for analysis and retrieval. It ensures data availability, reliability, and organisation, enabling smooth processing and supporting decision-making by maintaining structured access to information.

Lily Turner is a data science professional with over 10 years of experience in artificial intelligence, machine learning, and big data analytics. Her work bridges academic research and industry innovation, with a focus on solving real-world problems using data-driven approaches. Lily’s content empowers aspiring data scientists to build practical, scalable models using the latest tools and techniques.
