Raw data rarely arrives in a perfect, usable form. Errors, missing values, and inconsistencies can quietly distort insights. Without proper Data Cleaning, even well-designed analyses can lead to poor decisions.

This blog explains what Data Cleaning is and why it is essential for accurate analysis. It covers key concepts, common data quality issues, and practical techniques. You will also learn how clean data improves reliability, insights, and outcomes.

Table of Contents

1) What is Data Cleaning?

2) Why is Clean Data Important?

3) Characteristics of Clean Data

4) Data Cleaning Techniques

5) How to Clean Data?

6) Advantages of Data Cleaning

7) Data Cleaning Tools & Software

8) What is the Difference Between Data Cleaning and Data Transformation?

9) Real-world Examples of Data Cleaning

10) Conclusion

What is Data Cleaning?

Data Cleaning is the process of detecting and fixing incorrect, corrupted, badly formatted, duplicate, or incomplete data in a data set. It ensures that the information being analysed is meaningful and relevant.

When data is collected from more than one source, there is a high probability of errors and duplication that can distort results. Although Data Cleaning processes differ depending on the type of data, a systematic, repeatable method is necessary to ensure the reliability and accuracy of any analysis.

Why is Clean Data Important?

In today's business operations, decision-making is increasingly data-driven, with organisations using data analytics to gain an edge over their competitors. Consequently, maintaining clean data is essential for:

1) Data Science & Business Intelligence (BI) teams

2) Marketing managers

3) Business executives

4) Sales reps

5) Operational workers

That's particularly true in financial services, retail, and other data-intensive industries, but it applies to organisations across the board, both large and small.

If data isn't properly cleaned, business data such as customer records may not be accurate, and analytics applications may deliver faulty information. This can lead to:

1) Flawed business decisions

2) Missed opportunities

3) Misguided strategies

4) Operational problems

5) Increased costs

6) Reduced revenue and profits

Characteristics of Clean Data

Various data characteristics are used to measure the cleanliness of data sets, including the following:

1) Accuracy

2) Completeness

3) Consistency

4) Integrity

5) Timeliness

6) Uniformity

7) Validity

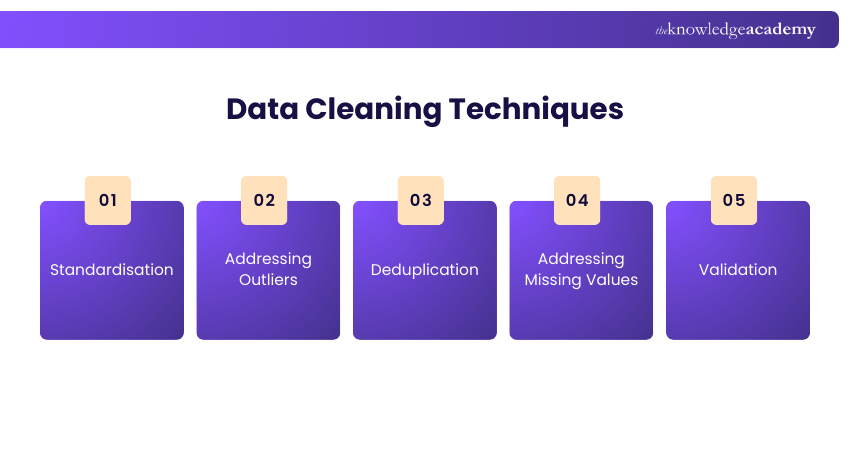

Data Cleaning Techniques

Successful Data Cleaning begins with an assessment of your data to identify quality problems. Once the extent of the problems is understood, organisations apply various methods to make the data accurate, consistent, and ready for analysis. The main techniques are:

1) Standardisation

Standardisation resolves inconsistencies in data format or representation. For example, dates recorded as MM-DD-YYYY in one system and DD-MM-YYYY in another, or addresses written in different schemes, are converted to a single format. This consistency makes data compatible across systems and easier to analyse and integrate.
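
As a minimal sketch of date standardisation, the Python snippet below converts dates from two sources into a single ISO format (YYYY-MM-DD). The sample values and the assumption that each source system uses exactly one known format are illustrative; an ambiguous string such as 03-04-2024 cannot be resolved without knowing its source.

```python
from datetime import datetime


def to_iso_date(value: str, source_format: str) -> str:
    """Parse a date string in a known source format and return YYYY-MM-DD."""
    return datetime.strptime(value.strip(), source_format).strftime("%Y-%m-%d")


# Hypothetical data: each source system is assumed to use one known format.
us_system = ["03-04-2024", "12-31-2023"]  # MM-DD-YYYY
eu_system = ["04-03-2024", "31-12-2023"]  # DD-MM-YYYY

standardised = (
    [to_iso_date(d, "%m-%d-%Y") for d in us_system]
    + [to_iso_date(d, "%d-%m-%Y") for d in eu_system]
)
print(standardised)
```

Both systems now describe the same two days in the same notation, so the records can be compared or merged directly.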

2) Addressing Outliers

Outliers are data points that differ markedly from the rest, and they may distort the analysis. They can be caused by errors, unusual events, or genuine anomalies. Depending on an outlier's relevance to the data set and the planned analysis, analysts decide whether to keep, modify, or remove it.
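
One common way to flag candidate outliers is the interquartile range (IQR) rule, sketched below in Python. The rule, the 1.5 multiplier, and the sample order totals are illustrative choices rather than something this article prescribes, and the function only flags values: deciding what to do with them still needs human judgment.

```python
import statistics


def flag_outliers(values, k=1.5):
    """Return values lying more than k * IQR outside the first/third quartile."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if v < low or v > high]


# Hypothetical order totals; 500 may be an entry error or a genuine bulk order.
order_totals = [52, 48, 50, 47, 55, 51, 49, 500]
outliers = flag_outliers(order_totals)
print(outliers)
```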

3) Deduplication

Deduplication eliminates redundant records created by entry errors, system glitches, or integration problems. Removing duplicates avoids inflated datasets and inaccurate insights, and ensures that analyses reflect genuine trends and patterns.
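
The sketch below shows one simple approach to deduplication in Python: records are compared on a normalised key (trimmed and lower-cased), and only the first occurrence is kept. The field names and sample customers are hypothetical, and real-world matching often needs fuzzier rules than this.

```python
def deduplicate(records, key_fields):
    """Keep the first record for each normalised combination of key fields."""
    seen = set()
    unique = []
    for record in records:
        key = tuple(str(record[f]).strip().lower() for f in key_fields)
        if key not in seen:
            seen.add(key)
            unique.append(record)
    return unique


# Hypothetical customer records; the second is a re-keyed copy of the first.
customers = [
    {"name": "Ana Silva", "email": "ana@example.com"},
    {"name": "ana silva ", "email": "ANA@example.com"},
    {"name": "Ben Okoro", "email": "ben@example.com"},
]
unique_customers = deduplicate(customers, ["name", "email"])
print(len(unique_customers))
```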

4) Addressing Missing Values

Missing values result from incomplete data collection, input mistakes, or system failures. Data professionals can manage these gaps by estimating the missing data, removing incomplete records, or flagging them for later investigation, without compromising the accuracy and usefulness of the dataset.
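
To illustrate estimating and flagging together, the Python sketch below fills gaps with the mean of the known values and keeps a marker showing which entries were estimated. Mean imputation and the sample ages are assumptions made for illustration; another estimate (median, a model, a domain default) may suit a given dataset better.

```python
from statistics import mean


def impute_with_mean(values):
    """Return (value, was_imputed) pairs, filling None with the mean of known values."""
    known = [v for v in values if v is not None]
    fill = mean(known)
    return [(v if v is not None else fill, v is None) for v in values]


# Hypothetical ages with two gaps.
ages = [30, None, 40, 50, None]
imputed = impute_with_mean(ages)
print(imputed)
```

Keeping the flag alongside the value means the estimated entries can still be investigated or excluded later.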

5) Validation

Validation is the final check to confirm that data is clean, accurate, and ready for analysis or visualisation. This step involves manual inspection or automated tools to detect remaining errors, inconsistencies, or anomalies.
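
An automated validation pass can be as simple as a set of rules applied to every record. The Python sketch below is one possible shape for such a check; the two rules (a loose email pattern and an age range of 0 to 120) and the sample records are hypothetical and would be replaced by your own business rules.

```python
import re

# Deliberately loose pattern: something@something.something, no spaces.
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def validate(record):
    """Return a list of rule violations for one record (empty list = valid)."""
    errors = []
    if not EMAIL_PATTERN.match(record.get("email", "")):
        errors.append("invalid email")
    if not (0 <= record.get("age", -1) <= 120):
        errors.append("age out of range")
    return errors


# Hypothetical records: one clean, one with two problems.
records = [
    {"email": "ana@example.com", "age": 34},
    {"email": "not-an-email", "age": 210},
]
report = {r["email"]: validate(r) for r in records}
print(report)
```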

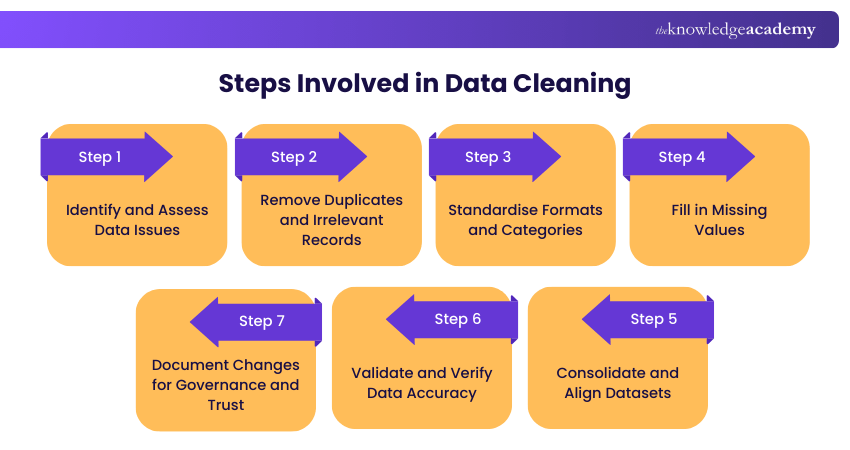

How to Clean Data?

Data Cleaning is not a one-time exercise. New errors and inconsistencies arise as new information is constantly gathered. This is why efficient Data Cleaning is a systematic, repeatable procedure that can be automated, scaled, and integrated into the broader data pipeline. The following are the steps involved in this process:

Step 1: Identify and Assess Data Issues

Begin by examining the data to determine where the problems lie. This involves identifying missing values, anomalies, redundant records, and inconsistent records. Although tools can highlight possible problems, contextual judgment is needed to differentiate between serious errors and legitimate but unusual values.
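
A quick profile of the data is often enough to show where to look first. The Python sketch below counts missing values per field and lists identifiers that appear more than once; the field names and rows are hypothetical, and the function only reports issues without changing anything.

```python
from collections import Counter


def profile(rows, key_field):
    """Summarise row count, missing values per field, and repeated keys."""
    missing = Counter()
    for row in rows:
        for field, value in row.items():
            if value is None or str(value).strip() == "":
                missing[field] += 1
    key_counts = Counter(row[key_field] for row in rows)
    duplicate_keys = sorted(k for k, n in key_counts.items() if n > 1)
    return {
        "rows": len(rows),
        "missing": dict(missing),
        "duplicate_keys": duplicate_keys,
    }


# Hypothetical rows with one blank city, one missing amount, and a repeated id.
rows = [
    {"id": 1, "city": "Helsinki", "amount": 120.0},
    {"id": 2, "city": "", "amount": None},
    {"id": 2, "city": "Turku", "amount": 80.0},
]
summary = profile(rows, "id")
print(summary)
```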

Step 2: Remove Duplicates and Irrelevant Records

Duplicate records can skew metrics and undermine analysis. Use a shared identifier or other matching attributes to remove or merge duplicate records. This step is also a good point to remove outdated or obsolete records that are no longer helpful for the analysis required.
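
The Python sketch below combines both parts of this step under simple assumptions: records sharing an identifier are collapsed to the most recently updated one, and anything last updated before a cutoff date is dropped. The `id` and `updated` fields and the cutoff are hypothetical.

```python
from datetime import date


def latest_per_id(records, cutoff):
    """Drop records older than cutoff, then keep the newest record per id."""
    latest = {}
    for record in records:
        if record["updated"] < cutoff:
            continue  # treated as outdated for this analysis
        current = latest.get(record["id"])
        if current is None or record["updated"] > current["updated"]:
            latest[record["id"]] = record
    return list(latest.values())


# Hypothetical records: C1 appears twice, C2 has not been updated since 2019.
records = [
    {"id": "C1", "email": "old@example.com", "updated": date(2023, 1, 10)},
    {"id": "C1", "email": "new@example.com", "updated": date(2024, 6, 2)},
    {"id": "C2", "email": "gone@example.com", "updated": date(2019, 3, 5)},
]
kept = latest_per_id(records, cutoff=date(2022, 1, 1))
print(kept)
```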

Step 3: Standardise Formats and Categories

Consistency is essential when dealing with data from various sources. Standardise date formats, units of measurement, and category labels. Without such alignment, data integration becomes unreliable and reports can be misleading.
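
For categories and units, a lookup table is often all that is needed. The Python sketch below maps spelling variants of a country to one label and converts weights to kilograms; the mapping table, the field names, and the sample rows are assumptions for illustration.

```python
# Hypothetical lookup tables; extend them as new variants are discovered.
CATEGORY_MAP = {
    "uk": "United Kingdom",
    "u.k.": "United Kingdom",
    "united kingdom": "United Kingdom",
    "fi": "Finland",
    "finland": "Finland",
}
TO_KG = {"kg": 1.0, "g": 0.001, "lb": 0.45359237}


def standardise(row):
    """Return the row with a canonical country label and weight in kilograms."""
    raw_country = row["country"].strip()
    country = CATEGORY_MAP.get(raw_country.lower(), raw_country)
    weight_kg = round(row["weight"] * TO_KG[row["unit"].lower()], 3)
    return {"country": country, "weight_kg": weight_kg}


rows = [
    {"country": " UK", "weight": 500, "unit": "g"},
    {"country": "u.k.", "weight": 2, "unit": "lb"},
    {"country": "Finland", "weight": 1.5, "unit": "kg"},
]
clean = [standardise(r) for r in rows]
print(clean)
```

Unknown country labels are passed through unchanged here so that they can be reviewed rather than silently discarded.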

Step 4: Fill in Missing Values

Analysing incomplete data can bias the results. Missing values can be replaced using averages, interpolation, or forward-fill, depending on the circumstances. In other scenarios, the best option is to leave the gaps as they are and not alter the affected records.
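
Forward-fill and interpolation behave differently on the same gap, which the Python sketch below tries to make visible. It assumes an ordered, evenly spaced series with `None` marking the gaps; the sensor-style readings are made up.

```python
def forward_fill(values):
    """Replace each None with the most recent known value before it."""
    filled, last = [], None
    for v in values:
        if v is not None:
            last = v
        filled.append(last)
    return filled


def interpolate(values):
    """Fill None values that lie between two known values on a straight line."""
    result = list(values)
    known = [i for i, v in enumerate(values) if v is not None]
    for left, right in zip(known, known[1:]):
        step = (values[right] - values[left]) / (right - left)
        for i in range(left + 1, right):
            result[i] = values[left] + step * (i - left)
    return result


# Hypothetical evenly spaced readings with gaps.
readings = [10.0, None, None, 16.0, None]
print(forward_fill(readings))
print(interpolate(readings))
```

Note that interpolation leaves the trailing gap untouched because there is no later value to interpolate towards, while forward-fill repeats the last known reading.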

Step 5: Consolidate and Align Datasets

After individual datasets have been cleaned, they are often combined. This could involve mapping fields between sources, eliminating silos, or loading data into a central repository such as a data warehouse or a data lake. Proper alignment improves accessibility and analytical effectiveness.
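
Field mapping between sources can be sketched with one dictionary per source, as in the Python example below. The two source systems ("crm" and "billing"), their column names, and the target schema are all hypothetical.

```python
# Hypothetical mapping from each source's column names to a shared schema.
FIELD_MAPS = {
    "crm": {"cust_id": "customer_id", "mail": "email"},
    "billing": {"CustomerID": "customer_id", "EmailAddress": "email"},
}


def align(row, source):
    """Rename a row's fields to the shared schema and record where it came from."""
    mapping = FIELD_MAPS[source]
    aligned = {target: row[field] for field, target in mapping.items()}
    aligned["source"] = source
    return aligned


crm_rows = [{"cust_id": 1, "mail": "ana@example.com"}]
billing_rows = [{"CustomerID": 2, "EmailAddress": "ben@example.com"}]

combined = (
    [align(r, "crm") for r in crm_rows]
    + [align(r, "billing") for r in billing_rows]
)
print(combined)
```

Keeping a `source` column makes it easier to trace a record back to its origin once everything sits in one repository.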

Step 6: Validate and Verify Data Accuracy

After cleaning, perform validation checks to ensure results make sense. Verify totals, confirm business rules are met, and compare outputs against trusted reference sources. This step helps prevent downstream errors in reporting and visualisation.
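
A reconciliation check along these lines might look like the Python sketch below, which compares the cleaned total with a trusted reference figure and counts violations of one business rule. The rule (amounts must not be negative), the tolerance, and the figures are illustrative assumptions.

```python
import math


def reconcile(cleaned_rows, reference_total, tolerance=0.01):
    """Compare the cleaned total with a reference and count negative amounts."""
    total = sum(r["amount"] for r in cleaned_rows)
    negatives = [r for r in cleaned_rows if r["amount"] < 0]
    return {
        "total_matches": math.isclose(total, reference_total, abs_tol=tolerance),
        "rule_violations": len(negatives),
    }


# Hypothetical cleaned invoice amounts and the total reported by finance.
cleaned = [{"amount": 120.50}, {"amount": 79.50}, {"amount": 300.00}]
result = reconcile(cleaned, reference_total=500.00)
print(result)
```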

Step 7: Document Changes for Governance and Trust

Effective Data Cleaning must be traceable. Record all transformations, assumptions, and decisions in documentation, scripts, or metadata. Well-documented cleaning supports data governance, improves collaboration between teams, and instils confidence in the credibility of the data.
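
One lightweight way to keep cleaning traceable is to append an entry to a change log every time a step runs. The Python sketch below shows a possible structure; the log fields, step names, and row counts are hypothetical, and a real pipeline might write the log to a file or a metadata store instead of keeping it in memory.

```python
import json
from datetime import datetime, timezone

change_log = []


def log_change(step, description, rows_before, rows_after):
    """Append one cleaning action, with a UTC timestamp, to the change log."""
    change_log.append({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "step": step,
        "description": description,
        "rows_before": rows_before,
        "rows_after": rows_after,
    })


# Hypothetical entries for two cleaning steps.
log_change("deduplicate", "Dropped exact duplicates on customer_id", 1050, 1000)
log_change("impute", "Filled missing age with column mean (assumption)", 1000, 1000)

log_text = json.dumps(change_log, indent=2)
print(log_text)
```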

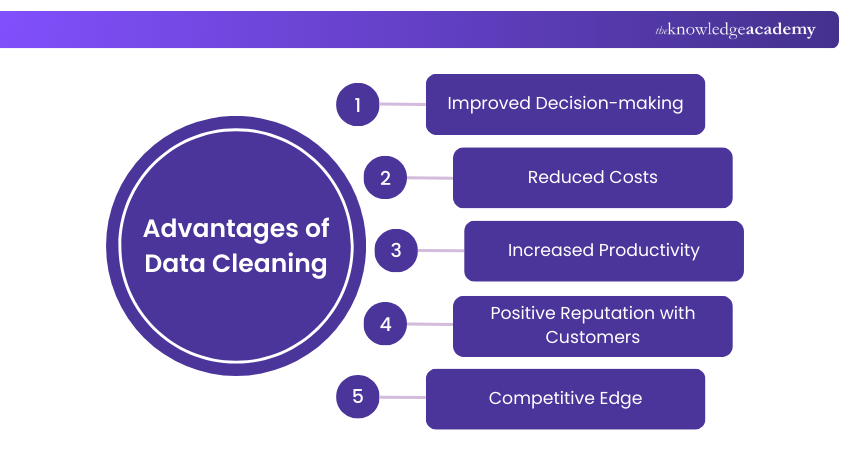

Advantages of Data Cleaning

Data Cleaning ensures that datasets are accurate, consistent, and reliable for analysis. Understanding its advantages highlights how clean data supports better decisions, efficiency, and business performance. The key advantages are:

1) Improved Decision-making

When information is clean, teams do not have to rely on assumptions and can work from accurate, relevant information. It eliminates the confusion that may arise from incomplete or inconsistent datasets. Credible information builds internal trust and confidence in reports. Consequently, teams can concentrate on strategy and innovation rather than on correcting mistakes.

2) Reduced Costs

Proper Data Cleaning reduces the costs associated with inaccurate or untidy databases. Billing, reporting, or operational mistakes can result in losses. Clean data also helps organisations reuse past data efficiently. Data Cleaning is therefore a long-term investment rather than a one-time cost.

3) Increased Productivity

Data that has been properly maintained can be easily accessed, analysed, and shared among teams. Employees do not have to waste time locating or correcting data errors. In the long run, systematic Data Cleaning greatly improves productivity.

4) Positive Reputation with Customers

When customer information is accurate, communication reaches the right people with the right content. It reduces the chances of sending wrong or unwanted messages. Clean data facilitates personalised interaction and regular contact. This helps build trust and strengthen long-term customer relationships.

5) Competitive Edge

Structured and clean data gives deeper insight into customer behaviour and market trends. It improves targeting, personalisation, and marketing ROI. Organisations can make decisions more quickly and intelligently than their competitors. Quality data ultimately supports business growth and differentiation.

Data Cleaning Tools & Software

Data Cleaning tools are essential for ensuring data quality and accuracy in various fields, from business analytics to scientific research. These tools help streamline the process of identifying and rectifying errors, inconsistencies, and other data quality issues.

The four categories of Data Cleaning tools are Microsoft Excel, programming languages, data visualisation tools, and proprietary software, explained as follows:

1) Microsoft Excel

Microsoft Excel is one of the most widely used tools for Data Cleaning and manipulation. Its user-friendly interface allows individuals without extensive programming skills to perform basic Data Cleaning tasks. While Excel is suitable for small to moderately-sized datasets, it may not be the best choice for large datasets or complex Data Cleaning tasks.

Excel offers various features that facilitate Data Cleaning, including:

a) Data Sorting and Filtering: Excel allows you to sort and filter data to identify duplicates and outliers.

b) Formulas and Functions: Functions like IF, VLOOKUP, and CONCATENATE enable data transformation and validation.

c) Conditional Formatting: You can highlight data that meets specific criteria to spot inconsistencies quickly.

2) Programming Languages

Programming languages like Python, R, and SQL are powerful tools for Data Cleaning, particularly when dealing with large and complex datasets. They are highly flexible and can handle diverse Data Cleaning tasks.

They are particularly useful when you need to automate repetitive Data Cleaning processes or work with large datasets. These languages provide extensive libraries and packages designed for data manipulation and cleaning:

a) Python: Libraries such as Pandas and NumPy offer robust Data Cleaning capabilities. Python is widely used for cleaning, transforming, and analysing data.

b) R: R's data manipulation packages, like dplyr and tidyr, are excellent for cleaning and reshaping data.

c) SQL: SQL can be used to query, filter, and aggregate data, making it valuable for Data Cleaning within databases.
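
As a small illustration of SQL-based cleaning, the sketch below uses Python's built-in `sqlite3` module to find email addresses that occur more than once after trimming and lower-casing. The in-memory database, the `customers` table, and its contents are hypothetical; the same `GROUP BY ... HAVING` pattern should carry over to other SQL databases, though function names can vary.

```python
import sqlite3

# Hypothetical in-memory table with one email entered twice in different forms.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (id INTEGER, email TEXT)")
conn.executemany(
    "INSERT INTO customers VALUES (?, ?)",
    [(1, "ana@example.com"), (2, "ANA@example.com "), (3, "ben@example.com")],
)

# Group on a normalised key and keep only the keys that occur more than once.
duplicates = conn.execute(
    """
    SELECT LOWER(TRIM(email)) AS email_key, COUNT(*) AS copies
    FROM customers
    GROUP BY email_key
    HAVING COUNT(*) > 1
    """
).fetchall()
conn.close()
print(duplicates)
```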

3) Data Visualisations

Data visualisation tools, while primarily known for creating charts and graphs, can also aid in Data Cleaning by providing a visual representation of data. While these tools don't perform the actual Data Cleaning, they assist in the data quality assessment process by offering a visual perspective on your data.

Tools like Tableau, Power BI, and QlikView allow you to:

a) Spot Data Anomalies: Visualisations can help identify outliers and inconsistencies in data.

b) Explore Data Patterns: Patterns in data can be more apparent when visualised.

c) Data Validation: Dashboards can be designed to highlight data quality issues.

4) Proprietary Software

Several proprietary Data Cleaning software tools are specifically designed to automate and streamline Data Cleaning processes. Proprietary software is ideal for organisations that require dedicated Data Cleaning solutions and are willing to invest in specialised tools. They often offer user-friendly interfaces, making them accessible to a broader range of users.

Tools such as Trifacta, along with the open-source OpenRefine, offer a range of features:

a) Automated Data Profiling: These tools automatically profile data to identify common data quality issues.

b) Data Transformation and Wrangling: They provide user-friendly interfaces for cleaning and transforming data.

c) Visualisation: Many proprietary tools offer Data Visualisation capabilities to assist in data quality assessment.

What is the Difference Between Data Cleaning and Data Transformation?

Data Cleaning removes errors, inconsistencies, and duplicates to ensure data accuracy and reliability. Data transformation restructures, formats, or converts data into a usable form for analysis. While cleaning improves data quality, transformation adapts it to specific processing or modelling needs.

Real-world Examples of Data Cleaning

Data Cleaning plays an important role in converting inconsistent raw data into valuable, actionable information. The following real-life situations show how successful Data Cleaning can lead to measurable business effects in any industry:

1) Retail - Inventory Accuracy: Unifying product IDs and price information across systems improves forecasting accuracy and minimises stockouts, because redundant and erroneous records are removed.

2) Healthcare - Regulatory Reporting: Removing duplicates and standardising medical codes allows healthcare providers to consolidate patient data, report medical data accurately, support compliance, and track outcomes better.

3) SaaS - Minimising Churn: Reconciling user records and synchronising timestamps across systems produces reliable data, which allows churn risk to be identified earlier and customers to be retained before they leave.

4) Finance - Fraud Detection: Normalising transaction formats and eliminating irregularities improves the accuracy of fraud detection models and minimises false positives.

5) Manufacturing - Supply Chain Efficiency: Standardising supplier and part data improves procurement visibility, reduces lead times, and minimises operating costs.

6) Marketing - Optimisation of Campaigns: Verifying and deduplicating customer records improves segmentation, campaign response rates, and ROI by enabling targeted campaigns.

Conclusion

Data Cleaning is a vital step in turning raw information into accurate, actionable insights. By identifying and correcting errors, standardising formats, and removing duplicates, organisations can improve decision-making and efficiency. Clean data builds trust, enhances productivity, and strengthens customer relationships. Ultimately, investing in clean data drives better outcomes and a competitive edge.

Frequently Asked Questions

How Does Data Cleaning Impact Machine Learning Models?

Data Cleaning ensures that machine learning models are trained on correct, consistent, and complete datasets. Eliminating errors, duplicates, and irrelevant information improves model reliability and predictive capability.

What Happens if Data is Not Cleaned?

If data is not cleaned, it can lead to inaccurate analysis, misleading insights, and poor decision-making. Errors, duplicates, and inconsistencies may cause inefficiencies, impact business operations, and reduce the effectiveness of machine learning models and analytics.

Lily Turner is a data science professional with over 10 years of experience in artificial intelligence, machine learning, and big data analytics. Her work bridges academic research and industry innovation, with a focus on solving real-world problems using data-driven approaches. Lily’s content empowers aspiring data scientists to build practical, scalable models using the latest tools and techniques.